What Tesla Reveals About Vertical Integration in Supply Chains

Published 2 hours ago

Tesla is not a template for every manufacturer. But it is one of the clearest examples of what happens when a company decides that certain supply chain capabilities are too important to leave outside the enterprise boundary.

Tesla is useful to study because it puts a hard question in front of manufacturers. What should remain external in the supply chain, and what has become too strategic, too fragile, or too tightly linked to product performance to outsource comfortably?

For years, the dominant logic favored broader supplier networks, lower fixed-cost exposure, and leaner balance sheets. That model worked reasonably well in a period shaped by labor arbitrage, supplier specialization, and relatively stable global trade flows. But the last several years have exposed the weaknesses in that model. Shortages, logistics disruption, geopolitical instability, tariff volatility, and competition around key technologies have all made external dependency look less benign.

Tesla sits near the center of that shift. Its operating model spans vehicle design and manufacturing, software, power electronics, direct sales, service operations, a global charging network, and deeper moves into batteries and upstream materials. Tesla’s 2025 annual report describes manufacturing operations across North America, Europe, and Asia, a global Supercharger footprint, and an in-house lithium refinery in Texas that began operations in January 2026. The company also states that it supplements supplier battery cells with its own manufacturing efforts rather than attempting to replace supplier capacity outright. (ir.tesla.com)

That is why Tesla matters. It is not vertically integrated in some clean textbook sense. It is integrated around selected control points.

Vertical Integration Is Really a Question of Control

In supply chain discussions, vertical integration is often reduced to ownership. That is too narrow. The more important issue is control over the capabilities that most directly shape cost, quality, speed, resilience, and product differentiation.

Tesla’s model makes that point clearly. Its battery strategy is not just a sourcing matter. It is also a product issue, a manufacturing issue, a cost issue, and a growth issue. Its software architecture is not just an engineering decision. It affects vehicle functionality, serviceability, update cadence, and the speed at which changes can be deployed. Its charging network is not simply downstream infrastructure. It is part of the operating environment around the product. (ir.tesla.com)

That is the first lesson. Vertical integration is not a philosophy. It is a decision about where control matters most.

Why the Model Has Strategic Advantages

The strongest argument for vertical integration is that it can compress coordination.

When design, software, manufacturing engineering, selected core components, and downstream infrastructure sit closer together, the enterprise can usually move faster. Product changes can be tested against manufacturing realities more quickly. Service feedback can flow back into engineering with less friction. Upstream constraints can be treated as strategic issues rather than procurement problems.

Tesla’s structure also creates tighter alignment across domains that many manufacturers still manage too separately. Batteries, factories, software, charging infrastructure, and sales channels are not treated as loosely connected functions. They operate more as parts of one system. That matters in industries where technical interdependence is high and delays in one area quickly show up elsewhere. (ir.tesla.com)

There is also a resilience argument. Tesla’s filings make clear that battery cells and raw materials remain critical dependencies and that availability, pricing, and trade conditions can affect both cost and growth. Moving deeper into lithium refining and in-house cell manufacturing is not just an innovation story. It is a supply chain design response to strategic dependence. (ir.tesla.com)

That is where the model becomes relevant to a broader set of manufacturers. If a capability is central to product economics and repeatedly exposed to external risk, the argument for pulling more of it inward gets stronger.

The Cost Side Is Just as Real

Tesla also shows the part of vertical integration that is easier to admire than to execute.

The broader the enterprise boundary, the more complexity management has to absorb. A company that pulls more activities inside gains more control, but it also takes on more operational burden. Factory ramping, service operations, software deployment, infrastructure buildout, supplier coordination, upstream material strategy, and global logistics all become management problems inside the same system.

That complexity has real cost. Tesla’s 2025 capital expenditures were about $8.5 billion. Reuters reported in January that Tesla planned to increase capital spending further in 2026 as it expanded investment in AI, robotics, vehicles, and manufacturing capacity. (sec.gov)

The point is not simply that Tesla spends heavily. It is that vertical integration usually requires more capital, more coordination, and more sustained execution discipline. It removes some external dependencies, but it creates another kind of risk: greater reliance on the company’s own ability to execute across multiple complex systems at the same time.

That is the tradeoff. Vertical integration can improve resilience, but it can also expose weak process discipline more quickly. It can create speed, but it can also create internal bottlenecks if the organization is not ready for the operating load.

Tesla Is Not a Template

This is where the broader lesson matters.

Tesla is not proof that every manufacturer should pull more operations in-house. Most should not. For many companies, broad outsourcing and specialization will continue to make economic sense.

The more useful conclusion is narrower. Companies need to identify the specific capabilities that most directly affect resilience, speed, economics, and differentiation, then decide whether those capabilities are still safe to leave outside the firm.

For Tesla, batteries, software, direct customer control, charging infrastructure, and selected upstream materials clearly sit near that threshold. For another manufacturer, the answer may be different. It may be semiconductor design, after-sales parts availability, automation software, supplier tooling strategy, or tighter control over demand signals.

The important shift is that the old outsource-by-default model is less persuasive in sectors where product complexity is high, supply risk is recurring, and speed of iteration matters competitively.

What Supply Chain Executives Should Take From It

The most useful lesson from Tesla is not about imitation. It is about supply chain architecture.

Executives should ask three direct questions.

First, which capabilities most directly shape our ability to serve the market profitably and reliably?

Second, where has external dependency become a strategic liability rather than a cost advantage?

Third, do we have the operating discipline to take more control without simply creating internal bottlenecks?

Those are the right questions because the answer is rarely binary. The issue is not whether to outsource or insource everything. The issue is whether there are a few control points that now deserve deeper ownership, tighter integration, or stronger commercial control.

Tesla shows that vertical integration still has real strategic value. But it only works when it is selective and executed well. In that sense, the company’s relevance to supply chain leaders is not ideological. It is practical.

The companies that will get this right will not be the ones that try to own everything. They will be the ones that know which parts of the system they cannot afford to leave to chance.

The post What Tesla Reveals About Vertical Integration in Supply Chains appeared first on Logistics Viewpoints.

What the Latest IEA Update Says About Energy Risk, Supply Chains, and Industrial Strategy

Published April 20, 2026

The latest update from the International Energy Agency arrives in a more fragile setting than existed even a few days ago. Uncertainty around U.S.-Iran talks has increased, the cease-fire window is narrowing, and tensions around the Strait of Hormuz have intensified.

That backdrop matters. The IEA’s update is not just a market summary. It is a clear read on how energy security, supply chain concentration, industrial policy, and AI infrastructure are converging.

Energy risk no longer sits apart from logistics, sourcing, or technology strategy. Oil flows through Hormuz, rare earth concentration, data center electricity demand, and government intervention now belong to the same operating picture.

For supply chain leaders, that is the point.

Oil disruption is moving into operations

The IEA highlights disrupted flows, lower supply, and weaker shipping activity through the Strait of Hormuz.

But the more important issue is what follows. When disruption persists, it moves beyond pricing and into operations. Petrochemical producers cut output as feedstock tightens. Aviation activity falls. Industrial users adjust production and purchasing.

At the same time, the diplomatic path remains unclear. That uncertainty keeps disruption risk in the system.

For logistics-intensive sectors, the implications are direct. Transport and input cost volatility can rise together. Demand becomes harder to interpret. And physical chokepoints still matter more than many network strategies assume.

Geography still shapes risk.

Energy security is now economic security

The IEA also points to engagement with institutions such as the IMF and World Bank. That signals a broader shift.

Energy volatility is now being treated as an economic management issue. It is tied to inflation, industrial continuity, affordability, and trade exposure.

For supply chain leaders, this is familiar territory. Fuel, freight, sourcing, and policy exposure are no longer separate categories. They are part of the same risk structure.

The uncertainty around negotiations reinforces that point. This is not a market waiting for a clean reset. It is a market pricing continued instability.

Rare earths remain a concentration problem

The IEA’s focus on rare earth supply chains is one of the stronger sections.

These materials underpin electric vehicles, robotics, advanced manufacturing, and AI hardware. Yet supply remains highly concentrated across mining, processing, and refining.

That creates a different class of risk. Not just pricing, but access and timing.

Diversification is slow. That is the constraint.

For companies investing in automation and electrification, resilience depends on understanding where concentration actually sits: not just at Tier 1, but upstream.

AI is becoming an infrastructure issue

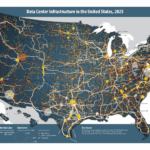

The IEA also highlights the growing link between energy and AI.

Electricity demand from data centers is rising quickly, with AI workloads accelerating that trend. More importantly, deployment is starting to run into physical limits: power availability, grid capacity, cooling, and siting.

That shifts the discussion.

AI is no longer just software. It is an infrastructure load. It can improve planning and execution, but it also adds pressure to constrained energy systems.

Digital strategy now has a physical footprint.

Governments are taking a larger role

The IEA’s policy tracking reinforces another shift. Governments are becoming more active across energy and adjacent industrial systems.

Policy activity and spending have increased, with a focus on energy security, affordability, resilience, critical minerals, and technology supply chains.

This is not temporary. It reflects a more strategic, interventionist posture.

Markets still matter. But they are increasingly shaped by policy, public investment, and security considerations. That raises the importance of policy awareness in sourcing, siting, and capital allocation.

Recent events around shipping and negotiations underscore that reality. Energy markets are being shaped as much by state action as by supply and demand.

The LV takeaway

The IEA update is best read as a system signal, not a set of headlines.

Oil disruption affects transportation and production.

Critical minerals concentration affects industrial scaling.

AI growth affects power demand and infrastructure.

Policy responses affect cost structures and competitive position.

These are now tightly linked.

For supply chain leaders, that means resilience planning has to widen. Monitoring freight and supplier performance alone is no longer sufficient. Energy exposure, material concentration, infrastructure limits, and policy direction all need to be part of the same view.

That was already true. The current uncertainty just removes any remaining doubt.

The post What the Latest IEA Update Says About Energy Risk, Supply Chains, and Industrial Strategy appeared first on Logistics Viewpoints.

Cloudflare’s Code Mode Signals a Better Architecture for Enterprise AI Agents

Published April 20, 2026

Cloudflare’s new Code Mode MCP server is getting attention for its token savings. The more important point is what it suggests about agent architecture. As enterprise AI moves from demos into real operating environments, the challenge is becoming less about whether a model can call a tool and more about whether it can work across large, complex systems without becoming slow, expensive, or brittle.

Cloudflare’s launch of a Code Mode MCP server matters for a reason that goes well beyond developer productivity.

It addresses a real scaling problem in enterprise AI.

Much of the early conversation around AI agents focused on model capability. Could a model answer questions, write code, summarize documents, or call tools? Those were useful milestones. But as organizations move from experimentation into deployment, a different constraint is coming into view. The issue is not only what the model can do. It is how the agent operates inside a large, messy, real-world system.

That is where Cloudflare’s announcement becomes relevant.

The company introduced a new approach to its Model Context Protocol server that sharply reduces the context burden involved in working across a very large API surface. Instead of exposing thousands of endpoints as separate tools, Cloudflare’s Code Mode reduces the interaction layer to discovery and execution. The agent can search for the capabilities it needs, generate a small execution plan in code, and run that plan inside a controlled runtime.

The token savings are the headline. The broader significance is architectural.

The problem with large tool surfaces

Many AI agent demonstrations still happen in simplified settings. The agent has a narrow task, a manageable set of tools, and a controlled workflow. In those conditions, standard tool calling works well enough. The model sees a list of available actions, selects one, gets a result, and decides what to do next.

That model becomes less efficient as the environment grows.

In enterprise settings, an agent may need to work across hundreds or thousands of possible actions. Each tool definition consumes context. Each new capability adds complexity. The model ends up spending more of its limited budget understanding what it can do and less of it reasoning through what it should do.
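As a rough illustration of that context burden, the sketch below estimates how much of a model's context window is taken up by tool definitions alone as the tool count grows. The per-tool token cost and the window size are hypothetical placeholders chosen for the arithmetic, not figures from Cloudflare or any specific model.

```python
def context_share(num_tools: int, tokens_per_tool: int, context_window: int) -> float:
    """Fraction of the context window consumed by tool definitions alone (capped at 1.0)."""
    if context_window <= 0:
        raise ValueError("context_window must be positive")
    used = num_tools * tokens_per_tool
    return min(used / context_window, 1.0)


# Hypothetical figures for illustration only.
TOKENS_PER_TOOL = 200      # assumed average size of one tool definition
CONTEXT_WINDOW = 200_000   # assumed context budget

for n in (10, 100, 1000):
    share = context_share(n, TOKENS_PER_TOOL, CONTEXT_WINDOW)
    print(f"{n:>5} tools -> {share:.0%} of context spent on definitions")
```

Under these assumptions the overhead scales linearly with the number of tools, which is why a catalog that is manageable at ten tools can crowd out reasoning at a thousand.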

As that burden rises, so do the practical problems. Cost goes up. Latency goes up. Reliability can start to fall. The system becomes harder to govern and harder to scale.

This is not just a model problem. It is an orchestration problem.

What Cloudflare changed

Cloudflare’s design changes the unit of interaction.

Rather than presenting the model with a massive menu of callable tools, Code Mode uses a much thinner interface built around discovery and execution. The agent first searches the available API surface to identify the small set of capabilities relevant to the task. It does not need the full platform definition loaded into context up front. It narrows its focus to the services, endpoints, and functions tied to the job at hand.

Once it identifies those relevant capabilities, the model writes a short piece of JavaScript using a type-aware software development kit. That matters because the SDK already understands the structure of the API. It knows what objects exist, what parameters are expected, and how requests should be formed. So the model is not improvising raw API calls from scratch. It is writing against a structured interface that reduces ambiguity and keeps execution aligned with the platform’s rules.

That code is then executed inside a secure V8 isolate. In practical terms, that means the execution happens in a tightly sandboxed runtime. The code can perform the approved actions, but it does not get broad access to the surrounding system environment. There is no normal file system, no unrestricted access to secrets or environment variables, and outbound actions can be tightly controlled.

The result is a different operating model for the agent. It first figures out what capabilities matter, then writes a compact execution plan, and then runs that plan inside a bounded sandbox.

That is a more scalable interaction pattern than forcing the model to navigate thousands of tools one step at a time.
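The discover, plan, and execute steps described above can be sketched in a few lines. This is a simplified illustration of the pattern, not Cloudflare's implementation: the catalog entries, the `search` and `execute` functions, and the `BoundedApi` class are all hypothetical, the plan is written in Python rather than JavaScript, and the allow-list check stands in for a real sandbox such as a V8 isolate.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical capability catalog: name -> (description, implementation).
CATALOG: Dict[str, Tuple[str, Callable]] = {
    "dns.list_records": ("List DNS records for a zone",
                         lambda zone: [f"www.{zone}", f"api.{zone}"]),
    "dns.delete_record": ("Delete a DNS record",
                          lambda name: f"deleted {name}"),
    "cache.purge": ("Purge cached content for a zone",
                    lambda zone: f"purged {zone}"),
}


def search(query: str) -> List[str]:
    """Discovery: return capability names whose name or description mentions the query."""
    q = query.lower()
    return [name for name, (desc, _) in CATALOG.items()
            if q in name.lower() or q in desc.lower()]


class BoundedApi:
    """Exposes only the capabilities selected during discovery."""

    def __init__(self, allowed: List[str]):
        self._allowed = set(allowed)

    def call(self, name: str, *args):
        if name not in self._allowed:
            raise PermissionError(f"{name} was not selected during discovery")
        return CATALOG[name][1](*args)


def execute(plan: Callable[[BoundedApi], object], allowed: List[str]):
    """Execution: run a compact plan against the bounded interface."""
    return plan(BoundedApi(allowed))


# Step 1: discover the relevant capabilities instead of loading the whole catalog.
tools = search("dns")


# Step 2: the plan a model might write, chaining several calls in one unit of code.
def plan(api: BoundedApi):
    records = api.call("dns.list_records", "example.com")
    return [api.call("dns.delete_record", r) for r in records if r.startswith("api.")]


# Step 3: run the plan inside the bounded interface.
result = execute(plan, tools)
print(result)
```

The design point is that the model only ever sees the results of `search` and the final output of the plan, while any call outside the discovered set is rejected at execution time.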

Why this matters beyond Cloudflare

It would be easy to read this as a narrow infrastructure story. That would miss the broader point.

Cloudflare is addressing a constraint that many enterprise AI systems are likely to encounter. As soon as agents move beyond simple assistance tasks and into operational workflows, the action space expands quickly. More systems. More APIs. More conditional logic. More chained decisions.

At that point, raw model capability is no longer enough. The surrounding architecture starts to matter just as much.

That is why this launch deserves attention from enterprise software providers and business operators alike. It points to a more scalable model for how agents may interact with large platforms. Instead of exposing everything directly and forcing the model to work through an enormous tool catalog, the system can give the model a thinner abstraction layer and let it compose work more efficiently.

That may prove to be a more durable pattern for enterprise deployment.

The bottleneck is shifting from intelligence to execution

For the past two years, most of the AI market has focused on model performance. That made sense. Better models unlocked more useful outputs.

But production environments expose a different set of constraints.

The harder questions now are operational. How much context does an agent consume just to understand the available actions? How many steps does it take to complete a multi-part task? How much latency does the orchestration layer introduce? How well can the system be governed, observed, and secured?

These are no longer side issues. In enterprise environments, they are central.

Cloudflare’s Code Mode matters because it addresses several of them at once. It reduces prompt overhead. It compresses multi-step work into executable plans. And it places that execution inside a bounded environment rather than leaving it open-ended.

That combination is what makes the announcement worth watching.

Why supply chain and logistics leaders should care

This development is especially relevant in supply chain and logistics because operational workflows in those environments are rarely simple.

A useful agent in a supply chain context may need to inspect order status, review inventory conditions, check shipment events, retrieve policy or contract information, evaluate alternate actions, and trigger the next step. That is not a one-tool workflow. It is a chained execution path that often spans multiple systems and multiple decision points.
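A minimal sketch of such a chained path is shown below, written as a single plan rather than a sequence of separate tool calls. The order, inventory, and shipment data, the `resolve_order` function, and the decision rules are hypothetical stand-ins for illustration; policy and contract lookups are omitted for brevity.

```python
from dataclasses import dataclass

# Hypothetical stand-ins for order, inventory, and shipment systems.
ORDERS = {"SO-1001": {"sku": "PUMP-7", "qty": 4, "status": "delayed"}}
INVENTORY = {"PUMP-7": {"DC-EAST": 0, "DC-WEST": 10}}
SHIPMENT_EVENTS = {"SO-1001": ["picked", "carrier_exception"]}


@dataclass
class Action:
    kind: str
    detail: str


def resolve_order(order_id: str) -> Action:
    """Chain order, shipment, and inventory checks into one decision."""
    order = ORDERS[order_id]
    if order["status"] != "delayed":
        return Action("none", "order on track")

    events = SHIPMENT_EVENTS.get(order_id, [])
    if "carrier_exception" not in events:
        return Action("monitor", "delayed without a carrier exception")

    # Look for an alternate site that can cover the full quantity.
    stock = INVENTORY.get(order["sku"], {})
    for site, on_hand in stock.items():
        if on_hand >= order["qty"]:
            return Action("reship", f"reship {order['qty']} x {order['sku']} from {site}")

    return Action("escalate", "no site can cover the order")


action = resolve_order("SO-1001")
print(action.kind, "-", action.detail)
```

With flat tool calling, each lookup in this path would typically be a separate model turn. Expressed as one plan, the intermediate results stay inside the execution and only the final action returns to the model.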

This is where flat tool-calling architectures can become cumbersome.

That does not mean every supply chain software provider should immediately adopt a code-based execution pattern. But it does reinforce a broader point: as AI moves deeper into planning, execution, and exception management, the interaction model becomes strategically important. The question is no longer just whether an agent can help a planner, analyst, or operator. The question is whether the architecture around that agent can support real operational work without becoming slow, expensive, or brittle.

That is highly relevant in logistics, where fragmented systems, exception handling, and time-sensitive workflows are everyday realities.

Security is part of the architecture

One of the more credible aspects of Cloudflare’s approach is that execution happens inside a constrained sandbox.

That should not be treated as a secondary detail. It is central to enterprise adoption.

If AI agents are going to write and execute code, even in narrow ways, enterprises will need confidence that those actions are bounded, observable, and policy-aware. Efficiency alone will not be enough. A fast agent that cannot be governed is not an enterprise architecture.

Too much of the current market still focuses on what agents can do without talking seriously enough about the boundaries around how they do it. Cloudflare’s design is notable in part because it treats security and control as part of the architecture, not as cleanup work for later.

That is the right direction.

A signal for enterprise software providers

There is also a broader product signal here.

If agents are going to become an important interface layer for enterprise systems, then software platforms may need to rethink how capabilities are exposed. Traditional APIs were built primarily for human developers and conventional system integrations. Agent-facing architectures may require better searchability, tighter abstractions, clearer permissions, and more deliberate execution boundaries.

In that sense, Cloudflare’s announcement is more than a token-efficiency story. It is an early indication that the industry may need a better control plane for agents.

Final thoughts

Cloudflare’s Code Mode MCP server should not be viewed only as a clever way to reduce token usage.

It is better understood as an architectural signal.

As enterprise AI agents move into larger and more operational environments, simply exposing more and more tools to the model is unlikely to be the best long-term pattern. A more scalable approach is to reduce what the model has to carry in context, improve how it discovers relevant capabilities, and allow it to execute bounded workflows inside a controlled runtime.

That is the deeper significance of this launch.

The future of enterprise AI will not be decided by polished demos with a handful of tools. It will be shaped in complex, multi-system environments where orchestration, control, and efficiency matter as much as model quality.

The post Cloudflare’s Code Mode Signals a Better Architecture for Enterprise AI Agents appeared first on Logistics Viewpoints.

The Home Depot Buys SIMPL Automation to Speed Fulfillment and Tighten DC Performance

Published April 17, 2026

The deal signals a continued push to use automation, AI, and denser storage design to improve delivery speed, labor efficiency, and product availability.

The Home Depot has acquired SIMPL Automation, a Massachusetts-based provider of warehouse automation and technology systems, as the retailer continues to invest in faster, more efficient fulfillment operations.

The move follows a pilot at Home Depot’s Locust Grove, Georgia distribution center. According to the company, the pilot improved pick speed, shortened cycle times, and reduced product touches. SIMPL also brings a patented storage and retrieval solution designed to increase storage density inside the distribution center. That should help Home Depot position more high-demand inventory closer to the customer and support faster delivery.

“We’re focused on providing the best interconnected experience in home improvement by having products in stock and ready to deliver to our customers whether it’s to the home or jobsite,” said Amit Kalra, senior vice president of supply chain at The Home Depot. “By bringing SIMPL’s industry-leading automation into our operations, we’re accelerating the flow of products through our distribution network to deliver with unprecedented speed and precision.”

The strategic logic is straightforward. Retailers are under continued pressure to improve service levels while also protecting margins. That makes distribution center automation more than a labor story. It is now tied directly to throughput, storage utilization, inventory positioning, and delivery performance.

Home Depot framed the acquisition as part of a broader supply chain innovation agenda that includes AI-powered inventory management, advanced analytics, mobile technology, automation, and live delivery tracking. SIMPL fits neatly into that effort. Its value is not just in automating tasks, but in improving the overall flow of goods through the network.

This matters because fulfillment speed is increasingly determined inside the four walls. Faster picks, fewer touches, and denser storage can materially improve network responsiveness without requiring entirely new infrastructure. In that sense, the acquisition is not just about mechanization. It is about tighter execution.

There is also a second point worth noting. Home Depot is acquiring a capability it already tested in its own environment. That lowers adoption risk and suggests this was not a speculative technology purchase. It was an operationally validated one.

For supply chain leaders, this is another sign that warehouse automation is becoming a more central part of retail network strategy. The winners will not automate for automation’s sake. They will deploy automation where it improves flow, reduces friction, and helps place the right inventory closer to demand.

The post The Home Depot Buys SIMPL Automation to Speed Fulfillment and Tighten DC Performance appeared first on Logistics Viewpoints.
