Uncategorized

Navigating the Energy Demands of AI: How Data Center Growth Is Transforming Utility Planning and Power Infrastructure

Published 7 months ago

Powering data centers is a challenge for utilities.

Data centers are highly valued by utilities because they consume large amounts of electricity with consistent, predictable demand patterns that remain steady throughout both the day and the year.

The explosive growth in power demand, driven largely by Artificial Intelligence (AI) and cloud computing, has overwhelmed the traditional electrical grid planning and construction timelines.

Introduction

New hyperscale data centers often require 100 MW to 500 MW of power, which is the demand of a small to medium-sized city. Utilities are happy to accept new business, but the problem is that data center developers want this power now and utilities are not prepared to respond so quickly. Expanding transmission and substation capacity through utilities can take 5 to 10 years due to lengthy processes for planning, permitting, environmental reviews, and construction. Data center developers, especially those focused on the AI race, prioritize “time to power” above almost all else. Delays mean lost competitive advantage and revenue. Developers are willing to pay a premium for faster power access and have taken some new and unique approaches for powering data centers.

The need for gigawatts of power on tight deadlines has forced data center developers to become major energy developers. They are doing this in three main ways:

Funding Renewables via PPAs: Hyperscalers like Amazon, Microsoft, and Google are the world’s largest corporate buyers of clean energy. Their long-term Power Purchase Agreements (PPAs) provide the financial certainty needed for developers to build hundreds of new utility-scale wind and solar farms.

On-Site, Grid-Independent Power: To bypass multi-year grid connection queues, developers are building their own on-site power. They have purchased natural gas turbines and fuel cells and co-located them with renewable generation, operating independently of the local utility.

Direct Connections to Power Plants: Data center campuses are now being planned and built adjacent to existing power plants. Several major data center developers, including Microsoft, Google, Meta, and Amazon Web Services, have signed PPAs for existing nuclear power. One example is Microsoft’s 20-year PPA to enable the restart of the shuttered Three Mile Island reactor in Pennsylvania. There is also interest in, and research into, PPAs for new small modular reactors (SMRs), advanced reactors, and full-scale nuclear plants.

Example of the new paradigm

The massive xAI “Colossus” data center project in Memphis, Tennessee, showcases a new paradigm for building AI infrastructure at incredible speed. To rapidly meet the massive power demands of the Colossus data center, xAI used portable or mobile natural gas-powered turbines, which are typically used for disaster recovery or fast, temporary power generation. The turbines drew legal challenges from environmental groups over air quality permits and were eventually removed.

Initial reports mentioned around 18-20 turbines, but later aerial images suggested as many as 35 turbines were installed and operating, with a combined capacity estimated at over 70 MW, though the total demand for Phase I was 150 MW. The TVA (Tennessee Valley Authority) Board of Directors officially approved the plan to supply a total of 150 MW of power to the xAI facility in November 2024.

The connection to the full 150 MW load required the construction of a new electric substation near the data center, which was paid for by xAI. By May 2025, the massive Colossus supercomputer facility was connected to the new substation, providing it with 150 MW of power from the MLGW/TVA grid.

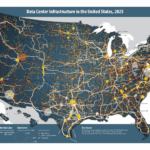

The map shows where new data centers are being built.

Data Centers planned in the US

While many data center plans are kept secret, current expansion announcements focus on regions like:

Northern Virginia (Ashburn/Loudoun & Prince William Counties): The largest existing and planned capacity globally.

Phoenix, Arizona (Maricopa County): A major emerging market with high growth projected.

Dallas-Fort Worth (DFW), Texas: Significant planned growth.

Atlanta, Georgia (I-85 Corridor): High percentage growth projected, with major new investments.

Salt Lake City, Utah: A fast-growing secondary market.

Impact on utilities and power costs.

Competition to build and power data centers is fiercer than anything the utility industry has seen before. At the same time, demand is growing from electric transportation (mostly electric passenger cars) and, to a lesser extent, from the electrification of HVAC and industrial processes. Meeting this demand requires new utility investment in transmission, substations, and distribution.

The generation side is split between vertically integrated regulated utilities and Independent Power Producers (IPPs). IPPs have generally dominated the buildout of new capacity (especially renewables and battery storage), particularly in deregulated markets, because they can respond to market price signals and secure private long-term contracts (PPAs) faster than utilities navigating regulatory approval cycles.

Utilities remain the primary developers in the regulated markets and are also heavily investing in transmission and distribution infrastructure across all markets to physically connect the new generation built by both themselves and IPPs.

With data centers buying and building power, supply is tightening relative to demand, which is driving up the cost of power. A small utility or municipal power company without its own generation buys power from IPPs or other utility suppliers and now competes with data centers for that supply.

Utilities see data centers as great customers. They buy lots of power with steady daily and seasonal loads. They match up well to base load generators like nuclear or coal power and do not require oversized transformers or wires the way a large Level 3 EV charging facility would. Of course, data center developers are concerned about power costs, and new data centers have many ways to be better customers and get better power rates from utilities. About 40% of data center power goes to HVAC. Thermal batteries can shift that HVAC load away from costly peak power hours, typically 5 to 9 pm. Data centers are also replacing traditional UPS batteries with large grid-scale batteries, which can provide useful grid services to utilities as well as backup power to the data center.

A town or utility that adds data centers to its grid gains revenue from power sold. That revenue helps to cover the large overhead costs utilities carry for wires, poles, trucks, staff, and buildings, which can reduce the overall cost of power in such towns or utility service areas.
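The peak-shifting idea above can be illustrated with a little arithmetic. The following is a minimal sketch, not a sizing tool: the 100 MW flat load and the 50% shiftable fraction are assumed values chosen for illustration, while the 40% HVAC share and the 5 to 9 pm peak window come from the text. It also assumes the thermal battery is recharged evenly across off-peak hours and ignores storage losses.

```python
PEAK_HOURS = range(17, 21)  # 5 pm to 9 pm, the peak window cited in the text


def hourly_profile(total_mw, hvac_share=0.40, shiftable=0.5):
    """Return 24 hourly grid-demand values (MW) after shifting HVAC load.

    total_mw   -- flat facility demand before shifting (hypothetical input)
    hvac_share -- share of demand that is HVAC (about 40% per the text)
    shiftable  -- fraction of HVAC load the thermal battery covers at peak
                  (assumed value, not from the article)
    """
    shifted_mw = total_mw * hvac_share * shiftable
    n_peak = len(PEAK_HOURS)
    # Energy removed from the peak window is recharged over off-peak hours.
    recharge_mw = shifted_mw * n_peak / (24 - n_peak)
    return [
        total_mw - shifted_mw if hour in PEAK_HOURS else total_mw + recharge_mw
        for hour in range(24)
    ]


profile = hourly_profile(100.0)
print(f"Peak-window demand: {profile[17]:.1f} MW")
print(f"Off-peak demand:    {profile[0]:.1f} MW")
print(f"Daily energy:       {sum(profile):.1f} MWh")
```

Under these assumptions the facility draws less from the grid during the four peak hours and slightly more during the other twenty, while total daily energy stays the same. A real thermal battery would lose some energy in storage, so the off-peak recharge would be somewhat higher than this sketch suggests.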

The Leading AI Model Developers

1. OpenAI (in partnership with Microsoft). Flagship Products: The GPT series and ChatGPT. Microsoft is its primary investor and exclusive cloud partner, integrating OpenAI’s models deeply into its own products like the Azure cloud platform and Microsoft Copilot.

2. Google (specifically Google DeepMind). Flagship Product: The Gemini family of models (including Gemini Pro, Ultra, and future versions).

3. Meta (formerly Facebook). Flagship Product: The Llama series of models (e.g., Llama 3).

4. Anthropic. Flagship Product: The Claude family of models (e.g., Claude 3, Claude 3.5 Sonnet). They are a major competitor to both OpenAI and Google and are heavily backed by Amazon and Google.

5. xAI. Flagship Product: Grok. Founded by Elon Musk, xAI aims to create an AI to “understand the true nature of the universe.”

6. DeepSeek AI. Flagship Product: The DeepSeek model family (e.g., DeepSeek-V2). They are a leading Chinese AI research lab that has released a series of extremely powerful open-source models that are highly regarded, particularly for their exceptional coding and mathematical reasoning capabilities.

Is there an investment bubble like the dot com bubble?

The short answer is yes. The massive overspending by companies like Meta will give way from being first at all costs to a more rational return-on-investment criterion. However, the AI race is unlikely to come to a halt. Current spending projections are:

2025: ~$400 Billion The spending in 2025 is dominated by the massive capital investment in building the physical infrastructure for AI. Data center construction and the procurement of tens of billions of dollars’ worth of NVIDIA GPUs and other AI accelerators represent the largest share of this cost.

2026: ~$550 Billion The rapid year-over-year growth is driven by the ongoing AI arms race. As new, more powerful AI models are released, the demand for even larger data centers and next-generation GPUs continues to accelerate. Spending on the electrical infrastructure to power these facilities becomes a major and growing line item.

2030: Over $1.5 Trillion The leap to a run rate above a trillion dollars by 2030 is based on the widespread enterprise adoption of AI. By this time, spending will shift from being concentrated among a few hyperscalers to being broadly distributed as thousands of companies build their own smaller AI systems and pay for massive amounts of AI-powered cloud services.

Electric Power: This is the fastest-growing operational cost. Powering the millions of GPUs in these data centers is projected to become a multi-hundred-billion-dollar annual expense by the end of the decade, making energy the primary long-term bottleneck for AI growth.
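To put the projections above in perspective, the implied annual growth rates can be worked out directly. This is a small sketch using only the three figures quoted in the text; the function name `cagr` is introduced here for illustration, and because the 2030 figure is stated as "over" $1.5 trillion, the rates that depend on it should be read as lower bounds.

```python
def cagr(start, end, years):
    """Compound annual growth rate between two values `years` apart."""
    return (end / start) ** (1 / years) - 1


# Projections quoted in the text, in billions of US dollars.
# The 2030 value is a floor ("over $1.5 trillion"), not a point estimate.
spend = {2025: 400, 2026: 550, 2030: 1500}

print(f"2025 to 2026: {cagr(spend[2025], spend[2026], 1):.1%}")
print(f"2026 to 2030: {cagr(spend[2026], spend[2030], 4):.1%}")
print(f"2025 to 2030: {cagr(spend[2025], spend[2030], 5):.1%}")
```

The first-year jump is 37.5%, and the projections then imply growth of a little under 30% per year from 2026 to 2030. In other words, the figures already assume some slowdown from the current pace rather than continued acceleration.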

The race is to develop the best AI applications, the ones that will provide your news, your library, your entertainment, your education, and maybe even your companionship. The AI investment race is showing early signs of potential market saturation and risk, but it is unlikely to subside completely due to fundamental differences from the dot-com bubble. Instead, most analysts predict a shift toward consolidation, disciplined spending, and a focus on profitability. The shakeout could result in a small group of winners emerging, but the money for better AI models and new applications will keep flowing. This “AI Oligopoly” may be the current hyperscalers: Microsoft/OpenAI, Google, Amazon (with Anthropic), and Meta. The prize is not primarily scientific or industrial AI. It is about owning influence, i.e., being the source of truth and knowledge, controlling advertising, guiding your purchases, owning your news and your screen time, and being your trusted teacher, partner, and friend. Having the best AI frontier model and model user interfaces is the key to success.

| Factor | AI Investment Race | Outlook |
| --- | --- | --- |
| Pace of Investment | Driven by an “AI arms race” where companies fear losing more than they fear overspending. This urgency is causing massive, debt-fueled spending on chips and data centers. | Likely to Slow/Correct. Infrastructure spending cannot increase indefinitely. Goldman Sachs and others predict an “inevitable slowdown” in data center construction, which will impact chip and power suppliers. |
| Productivity Gap | A significant gap exists between the trillions being invested in AI infrastructure and the proven, monetized revenue from AI applications. | Consolidation is Coming. Many smaller, unprofitable AI application startups are likely to fail or be acquired, similar to the dot-com era, as capital becomes more disciplined. |
| Technological Potential | The underlying technology (AGI/generative AI) is widely seen as genuinely transformational (a technological revolution). | Unlikely to Subside. The technology will not fail; the business models and valuations built upon it are the primary risk. Investment will pivot from “build it all now” to “build what is profitable.” |

Conclusion and Outlook

The unprecedented US demand for lightning-fast power connections from data center developers does not match the traditional ways utilities provide power to new customers. As a result, there is a range of new and creative ways to provide that power. Developers are building their own power generation and microgrids. Data centers are becoming power companies themselves. They are building large battery energy storage systems (BESS) that not only provide UPS backup power but also provide grid services to utilities. Utilities and data center developers are collaborating on building new power generation, new or upgraded substations, and the power lines to meet the power and reliability requirements of data centers.

Data centers are prized customers for utilities. They consume lots of steady power around the clock and throughout the seasons, and they often have far more flexibility to provide ancillary services to the utility than typical residential, commercial, or industrial customers. While they are schedule driven, they are less sensitive to the price of power in the short term, because the AI race has focused on securing power faster than competitors in order to get the best AI models sooner and lock in a customer base with superior AI applications.

Hyperscalers have signed shorter-term PPAs for fossil power and long-term PPAs for massive quantities of renewable power, and they have memorandums of understanding for future nuclear power that may come from new SMRs and advanced reactors. While data center loads match up well to base load generation like nuclear or coal, they are often powered by intermittent generation like solar and wind with battery storage.

Data center developers seek out locations that can provide power quickly, have the water and land resources needed and where local zoning and community are favorable. They are also building where it will be easy to expand in the future.

EV batteries are trending toward faster charging rates. Large high-voltage DC EV charging stations can require massive power to charge dozens of cars simultaneously, and utilities need a strong grid to service this growing load. Most EV charging occurs at home, and distribution utilities are adapting to new loads with more powerful transformers and related low- and medium-voltage distribution infrastructure. New loads from HVAC and industrial electrification are expected to increase steadily over the next decade and beyond.

AI developers need more than just electric power to win the AI race. They need to train on accurate but diverse curated data. Winning also involves selecting the most appropriate model architecture and employing techniques like Active Learning (to find the most useful data to train on) and Data Distillation (to reduce the size of the dataset without losing quality). They start with petabytes of data from public, private, and internally generated sources. This massive raw data pool is labeled, filtered, cleaned, and tokenized (broken down into the pieces the model understands). This step dramatically reduces the final size of the data AI uses for training. Data centers also need secure, reliable, and fast data connectivity.

The US is behind in securing new power. China already has a grid that is larger than the US and European grids combined, and while NVIDIA GPU chips are restricted, China is in a far better position than the US to provide power to AI data centers. The table below shows estimated grid power additions to 2030. China is outpacing the US in nearly every power sector; only geothermal additions are on par.

| Grid Energy | Global Additions in 2024 (GW) | US Additions 2025 to 2030, i.e., five years (GW) | China Additions 2025 to 2030, i.e., five years (GW) | Global Additions 2025 to 2030, i.e., five years (GW) |
| --- | --- | --- | --- | --- |
| Solar | 452 | 220 to 270 | 1,200 to 1,500 | 3,000 to 4,000 |
| Wind | 113 | 60 to 75 | 400 to 500 | 600 to 700 |
| Coal | 44.1 | -50 to -70 | 120 to 180 | 160 to 240 |
| Gas and Oil | 25.5 | 25 to 35 | 70 to 100 | 190 to 260 |
| Hydro | 24.6 | 2 to 4 | 60 to 80 | 125 to 175 |
| Nuclear | 6.8 | ~2 (uprating only) | 30 to 40 | 50 to 70 |
| Biofuel | 4.6 | 1 to 2 | 8 to 10 | 30 to 40 |
| Geothermal | 0.4 | 2 to 3 | 2 to 3 | 10 to 15 |
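The US and China columns of the table above can be compared with a quick midpoint calculation. This is a rough sketch only: it takes the midpoint of each quoted range, treats "~2" for US nuclear as exactly 2, and sums nameplate capacity across sources that have very different capacity factors, so the totals indicate scale rather than delivered energy. The helper names are introduced here for illustration.

```python
# (low, high) additions for 2025 to 2030 in GW, copied from the table.
# The negative US coal range represents net retirements.
US = {
    "Solar": (220, 270), "Wind": (60, 75), "Coal": (-70, -50),
    "Gas and Oil": (25, 35), "Hydro": (2, 4), "Nuclear": (2, 2),
    "Biofuel": (1, 2), "Geothermal": (2, 3),
}
CHINA = {
    "Solar": (1200, 1500), "Wind": (400, 500), "Coal": (120, 180),
    "Gas and Oil": (70, 100), "Hydro": (60, 80), "Nuclear": (30, 40),
    "Biofuel": (8, 10), "Geothermal": (2, 3),
}


def midpoint(rng):
    """Midpoint of a (low, high) range in GW."""
    low, high = rng
    return (low + high) / 2


def total_midpoint(table):
    """Sum of range midpoints across all sources, in GW of nameplate capacity."""
    return sum(midpoint(rng) for rng in table.values())


# Sources where China's midpoint does not exceed the US midpoint.
not_ahead = [s for s in US if midpoint(CHINA[s]) <= midpoint(US[s])]

print(f"US net additions (midpoint):    {total_midpoint(US):.1f} GW")
print(f"China net additions (midpoint): {total_midpoint(CHINA):.1f} GW")
print(f"Ratio: {total_midpoint(CHINA) / total_midpoint(US):.1f}x")
print(f"Sources where China is not ahead: {not_ahead}")
```

On these midpoints, China's projected net additions come out at several times the US figure, and geothermal is the only category where the two ranges are the same.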

Recent US policies are discouraging solar, wind, and battery storage, which is slowing the deployment of the cheapest, cleanest, and fastest-deploying sources of new power. US policy is supporting more gas and nuclear power, but new gas power plants face supply chain constraints, such as limited gas turbine availability, so these power sources are not keeping up with the demands of data center developers. This constrained power supply threatens to inflate electricity prices for consumers and businesses and risks leaving the nation unable to cleanly and affordably meet the surging power demands of data centers and broader electrification.

The post Navigating the Energy Demands of AI: How Data Center Growth Is Transforming Utility Planning and Power Infrastructure appeared first on Logistics Viewpoints.


Modern Cost Engineering Evolution: Rewiring the Human Element for Supply Chain Resilience

Published 16 hours ago on May 5, 2026

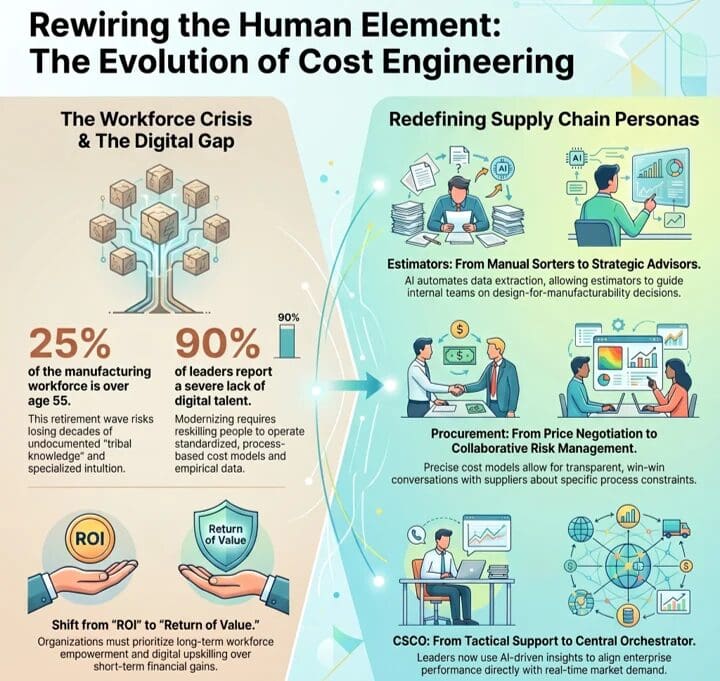

In my previous blog outlining the adoption of cost engineering, I explored the dynamics behind the market move away from sole reliance on traditional, backward-looking cost estimating to one that also incorporates modern “should-cost” methods. The reasons are many, of course, but it is clear that industrial organizations are keen to use AI-driven methods and other digital tools to build much stronger layers of resilience and competitive advantage necessary to compete in today’s hyperconnected economies.

Although digitally enabled results can sometimes be achieved in an operational vacuum, digital maturity cannot. The former can demonstrate benefits like efficiency, cost reduction, safety, etc., but it will rarely scale. The latter delivers market success via competitive excellence, providing a means for better organizing the business and orchestrating the ecosystem to anticipate and meet modern market signals.

Modernizing the supply chain is, at its core, a human-centered endeavor. The successful integration of cost engineering demands significant realignment and reskilling of people. As I began discussing almost a decade ago, the workforce transformation required to modernize is certainly the most difficult endeavor a business will face.

In this blog, I’ll dive into the human element of cost engineering. I’ll touch on how roles and attendant knowledge, skills, and abilities (KSAs) across the supply chain are evolving, discuss the cultural hurdles organizations must navigate, and outline how companies can transform traditional estimators into strategic consultants.

Tribal Knowledge: I Feel Like I’ve Been Here Before

Leadership must address the workforce crisis currently confronting industrial manufacturing. Look at any credible information resource and the numbers are basically the same. Whole industries are facing rapid workforce retirements, with approximately 25 percent of the total manufacturing workforce already over the age of 55. Within small and medium-sized enterprises, which form the bedrock of the industrial manufacturing supply base, particularly in North America, between 30 and 40 percent of business owners and skilled operational workers are nearing retirement age. Ouch.

And yet we’ve known this has been underway for quite some time, but here we are. Historically, the reaction to tribal knowledge was wariness. I recall many conversations with leadership and frontline workers as technologies such as machine learning were initially deployed. Tribal knowledge, expertise, and the workforce that owned it were often treated as a nut to be cracked and the insides taken. Initially, the shell was perceived to be obstinately hard, with workers guarding their critical expertise, including core intellectual property (IP), as a means of fending off obsolescence. It didn’t lend itself to, shall we say, everyone pulling in the same direction.

Supply chain was no exception to this pattern. Cost estimating relied heavily on the undocumented tribal knowledge and personal experience of veteran employees. As these experts exit the workforce, they take decades of specialized intuition with them, leaving organizations highly vulnerable.

As a result, a new discipline has taken hold, as tribal knowledge is likely to be irretrievable in many instances or, where leaders show a lack of humility, lost to downsizing that came too quickly. Modern cost engineering takes aim squarely at the reliance on human memory with standardized, process-based cost models and empirical data. Yet, an overwhelming 90 percent of supply chain leaders report a severe lack of the digital talent required to operate these new systems. Here we are, again, back to the ever-important human element at the center of a technology endeavor.

Redefining Supply Chain Personas

Rather than taking the same, lose-lose historical approach to cracking tribal knowledge, leading organizations are pivoting workers away from the manual, unsafe, and repetitive. What they are doing differently, though, is concertedly moving subject matter experts toward higher-level orchestration and critical oversight. It won’t pan out with every worker, certainly, but it will ensure that the expertise is retained and applied to creating more strategic value. On the surface, that presents much more opportunity for a win-win scenario. Here is how some specific roles are evolving:

Estimator

Historically, manufacturing estimators spent most of their time immersed in manual, backward-looking work. They pored over static 2D PDFs, visually interpreted complex 3D CAD models, and stitched together cost assumptions from disconnected spreadsheets. Much of their value came from patience and pattern recognition rather than insight, and the process was slow, reactive, and highly dependent on individual experience. For leading companies that are aggressively implementing cost engineering processes, that is radically changing.

In the world of cost engineering, this role is now that of a strategic advisor. Leveraging AI to automate much of the data extraction that once consumed their time, this role develops models to identify cost drivers based on real manufacturing constraints and material behavior. As a result, this role now focuses more on guiding internal teams on design-for-manufacturability decisions and outlining strategic trade-offs that can include a mix of potential metrics, such as cost, lead time, and, increasingly, carbon impact.

Procurement

Procurement has primarily been about transactional efficiency and negotiation. Success was generally determined by price, often with significant visibility limitations into how the price was constructed. Framed within cost engineering, procurement is driven by collaboration and risk management. Using precise cost models, sourcing conversations begin with a clear understanding of cost, informed by specifics on materials, labor, processes, and capacity constraints. If a supplier’s quote exceeds cost expectations, conversations can then be had specifically about how to target specific constraints, such as inefficiencies in process or materials. The objective is to provide transparency that allows for a win-win relationship in terms of performance, profitability, and reliability.
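The comparison of a supplier quote against a cost model can be sketched in a few lines. This is a hypothetical illustration, not a description of any particular cost engineering product: the cost components, the overhead and margin rates, and the names `ShouldCost` and `quote_gap` are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class ShouldCost:
    """A toy per-unit should-cost model (all fields are illustrative)."""
    material: float              # material cost per unit
    labor: float                 # direct labor cost per unit
    process: float               # machine and process cost per unit
    overhead_rate: float = 0.15  # assumed overhead markup
    margin_rate: float = 0.10    # assumed reasonable supplier margin

    def unit_cost(self):
        """Expected price: direct costs plus overhead, then margin."""
        direct = self.material + self.labor + self.process
        return direct * (1 + self.overhead_rate) * (1 + self.margin_rate)


def quote_gap(model, quote):
    """Fraction by which a supplier quote exceeds the should-cost."""
    return quote / model.unit_cost() - 1


part = ShouldCost(material=4.00, labor=2.50, process=1.50)
gap = quote_gap(part, quote=11.50)
print(f"Should-cost: {part.unit_cost():.2f}")
print(f"Quote gap:   {gap:.1%}")
```

A positive gap is a prompt for the kind of conversation described above: which of the material, labor, or process assumptions differ between buyer and supplier, and why.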

Frontline

Despite the best of intentions to change the reactive nature of the role, frontline work has been dominated by manual execution and post-problem decision-making. Operators were tasked with keeping machines running, responding to breakdowns as they occurred, and relying heavily on tribal knowledge passed down informally and gained over time. Cost engineering shifts the dynamic for frontline workers. Upstream processes and systems provide precision that is communicated to these workers in terms of production expectations. Operators are tasked with supervising processes, identifying deviations, and capturing machine-level issues as they occur. As these workers become more connected and augmented via technology, faults and anomalies are logged digitally, with automated routing to maintenance or engineering as needed. With effective cost engineering, the frontline workforce ensures production aligns with cost and performance expectations.

Chief Supply Chain Officer (CSCO)

In the past, supply chain leadership was back-office oriented, using historical information to attempt to optimize logistics execution, inventory control, and cost. Their influence was significant but fairly tactical. That orientation shifts significantly with cost engineering as the CSCO becomes the central orchestrator of enterprise performance, based on the organization’s ability to align with market demand. Supply chain data increasingly impacts revenue and margin stability, based on market responsiveness. As a result, the CSCO sits at the intersection of strategy, technology, and execution, with an increased mandate that expands beyond moving goods to shaping how the organization makes decisions. In an organization using cost engineering, CSCOs are redesigning roles, workflows, and governance models, based on AI-driven insights that orchestrate decision-making across the enterprise and ecosystem.

Aversion to Change: You Can’t Take the Human Out of, Well, the Human

So, implementing cost engineering seems like an obvious win. Despite the obvious operational benefits, integrating cost engineering introduces complex modernization challenges. Of course, these challenges are mostly rooted in aversion to change. It’s a pretty understandable problem, with generations of workers having been trained on historically based methods and having spent entire careers honing a requisite expertise. To them, AI and automated decision-making are met with deep suspicion, rightfully grounded in the fear that technology will replace jobs and render their expertise irrelevant. They are not wrong. This challenge has been exacerbated by leadership deploying complex new software without context. In reaction to these poorly orchestrated, technology-centric changes, operators bypass the systems and revert to familiar methods and tools, neutralizing investment and anticipated benefits. Pilot purgatory, anyone?

To counter this within the organization, leadership must employ empathy, transparency of intent, continuous learning, and AI explainability that enables humans to trust machines and the logic behind their decisions. From an external perspective, organizations also need to understand that they are only as strong as their weakest supplier. Leading companies gain their status by subsidizing the digital and cybersecurity capabilities of their ecosystem. It becomes a case of a rising tide lifting all boats.

Return of Value

Deploying cost engineering cannot be about eliminating the human workforce through automation. It relies on a human-on-the-loop model, but it defers to technology to manage massive data complexity. The role of expert workers is to apply contextual judgment and engage in continual collaboration. The transition to this approach requires transparency and significant digital upskilling that will likely feel uncomfortable initially. Due to the step change required in this shift, organizations need to define and align with a return of value rather than shorter-term return on investment. By empowering the workforce and supply chain ecosystem to employ data-driven precision, the organization transitions from a guesswork culture to one of definable competitive differentiation.

In blog three of this series, I’ll explore the process component of the equation. I’ll focus on departmental silos, cross-functional teams, and supply chain orchestration. You can read the first blog in this four-part series here.

The post Modern Cost Engineering Evolution: Rewiring the Human Element for Supply Chain Resilience appeared first on Logistics Viewpoints.

Uncategorized

Amazon Launches “Supply Chain Services” Leveraging its Global Logistics Network

Published 21 hours ago on May 5, 2026

Amazon has officially launched Amazon Supply Chain Services, opening its integrated logistics network to businesses of all sizes and across all industries. This move expands the company’s existing logistics capabilities beyond its own marketplace and selling partners, offering a comprehensive suite of services that covers the entire journey of a product from origin to the final customer.

The platform bundles multiple logistics capabilities into a single network:

Freight: Access to multimodal transportation, including air, ocean, rail, and ground. This service includes support for customs clearance, booking, and end-to-end shipment visibility.

Distribution and Fulfillment: A centralized storage and distribution system that allows companies to manage inventory across various sales channels, including wholesale, direct-to-consumer, social media, and physical storefronts, from a unified inventory pool.

Parcel Shipping: An expansive delivery network providing ground shipping with two-to-five-day delivery speeds and seven-day-a-week service.

This rollout is designed to provide businesses with the infrastructure and technology that powers Amazon’s own operations. By decoupling these services from its retail arm, Amazon is positioning its logistics network as a utility, similar to the model used for Amazon Web Services. The goal is to address the complexity of supply chain management by replacing fragmented, multi-provider contracts with a single, end-to-end interface.

The platform is already used by enterprise-level organizations, including Procter & Gamble, 3M, Lands’ End, and American Eagle Outfitters. These companies are utilizing the network for various logistics needs, ranging from moving raw materials to distribution centers to fulfilling end-user orders. The infrastructure is scaled to support high volumes, currently moving approximately 13 billion items annually.

By centralizing freight, distribution, and last-mile delivery, Amazon Supply Chain Services aims to simplify supply chain operations, improve inventory positioning, and offer the reliability of a mature global logistics network to commercial entities, regardless of where they sell their products.

The post Amazon Launches “Supply Chain Services” Leveraging its Global Logistics Network appeared first on Logistics Viewpoints.

Uncategorized

AI in the Supply Chain: From Architecture to Execution

Published 24 hours ago on May 5, 2026

The next phase of supply chain AI will not be defined by better models alone. It will be defined by whether those models can improve real decisions across planning, logistics, sourcing, fulfillment, and risk management.

Artificial intelligence has moved quickly through the supply chain conversation.

The first wave focused on what AI could do. Could it improve forecasts? Detect disruptions? Summarize documents? Support planners, buyers, dispatchers, and customer service teams?

Those were useful questions. They helped establish the architectural foundation for AI-enabled supply chains: agent-to-agent communication, retrieval-augmented generation, graph-based reasoning, persistent context, and more interoperable data environments.

But architecture is not execution.

The harder question now is whether AI can improve real operating decisions inside complex supply chains. These are decisions involving cost, service, inventory, capacity, risk, customer commitments, physical assets, and financial consequences.

A model may forecast demand. A visibility platform may detect a disruption. An agent may recommend a response. None of that matters much unless the organization can turn the signal into coordinated action.

That is the focus of this new Logistics Viewpoints series.

From AI Capability to Operational Decision-Making

The first phase of supply chain AI was about capability. The next phase is about consequence.

Supply chains are not abstract information systems. They are physical operating networks. A transportation decision changes cost and service. An inventory decision affects availability and working capital. A sourcing decision changes risk exposure. A warehouse decision changes labor, throughput, and customer performance.

This is where many AI programs stall.

They produce insight, but the workflow does not change. They generate recommendations, but decision ownership remains unclear. They detect exceptions, but the organization still responds through manual handoffs, email chains, spreadsheets, and delayed escalation.

The result is decision latency: the gap between when a condition changes and when the organization executes a coordinated response.

In volatile supply chain environments, decision latency is not just an inconvenience. It becomes a structural weakness.
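As a rough illustration, decision latency as defined above can be measured as the elapsed time between two timestamps. The sketch below is only that: the record layout, the field names (condition_changed_at, response_executed_at), and the sample events are assumptions made for illustration, not drawn from any particular system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class DecisionEvent:
    """One exception and the response to it (hypothetical record layout)."""
    name: str
    condition_changed_at: datetime   # when the underlying condition changed
    response_executed_at: datetime   # when a coordinated response was executed


def decision_latency(event: DecisionEvent) -> timedelta:
    """Gap between the condition change and the executed response."""
    return event.response_executed_at - event.condition_changed_at


# Made-up events, for illustration only.
events = [
    DecisionEvent("port delay", datetime(2026, 5, 1, 8, 0), datetime(2026, 5, 1, 20, 0)),
    DecisionEvent("supplier shortfall", datetime(2026, 5, 2, 9, 0), datetime(2026, 5, 4, 9, 0)),
    DecisionEvent("carrier no-show", datetime(2026, 5, 3, 14, 0), datetime(2026, 5, 3, 16, 0)),
]

# Latency per event in hours, plus the median as a simple summary.
latencies_hours = [decision_latency(e).total_seconds() / 3600 for e in events]
median_latency_hours = median(latencies_hours)
```

Tracking a figure like this per exception type is one way an organization might see where manual handoffs and delayed escalation add the most time.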

Why the Decision Intelligence Layer Matters

Enterprise supply chain technology has long been organized around systems of record and systems of planning.

ERP, WMS, TMS, order management, procurement, and planning platforms remain essential. They preserve transactions, manage workflows, and support structured planning processes.

AI introduces the need for another layer: a decision intelligence layer.

This layer does not replace existing systems. It operates across them. It connects signals, context, reasoning, governance, and execution. It helps the enterprise evaluate conditions continuously, understand tradeoffs, and support or initiate action within defined boundaries.

That distinction matters.

Not every AI system should be allowed to operate near physical or financial consequence. The closer AI gets to execution, the greater the need for context, determinism, governance, auditability, and human oversight.

Supply chain AI is not one category. It is a set of capabilities that must be matched to the decision environment in which they operate.
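One way to picture "action within defined boundaries" is a simple routing rule that decides whether a recommendation may execute automatically, needs human approval, or stays advisory. The sketch below is hypothetical: the thresholds, field names, and the three modes are assumptions chosen for illustration, and a real policy would be set by the organization's own governance.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AUTO_EXECUTE = "auto_execute"
    HUMAN_APPROVAL = "human_approval"
    ADVISORY_ONLY = "advisory_only"


@dataclass
class Recommendation:
    action: str
    cost_impact_usd: float          # estimated financial consequence
    confidence: float               # model confidence, 0.0 to 1.0
    touches_physical_assets: bool   # e.g. reroutes freight or moves inventory


# Hypothetical boundaries; real values would come from governance policy.
AUTO_COST_LIMIT_USD = 5_000
MIN_AUTO_CONFIDENCE = 0.9
APPROVAL_COST_LIMIT_USD = 100_000


def route(rec: Recommendation) -> Mode:
    """Match a recommendation to the level of autonomy its consequences allow."""
    # Very large financial consequence: the system only advises.
    if rec.cost_impact_usd > APPROVAL_COST_LIMIT_USD:
        return Mode.ADVISORY_ONLY
    # Small, high-confidence, non-physical actions may run automatically.
    if (
        rec.cost_impact_usd <= AUTO_COST_LIMIT_USD
        and rec.confidence >= MIN_AUTO_CONFIDENCE
        and not rec.touches_physical_assets
    ):
        return Mode.AUTO_EXECUTE
    # Everything in between goes to a human owner for approval.
    return Mode.HUMAN_APPROVAL
```

For example, under these assumed limits a low-cost, high-confidence carrier rebooking would execute automatically, while a container reroute with a mid-range cost would wait for a human decision.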

What the Series Will Cover

This ten-part series examines how supply chain AI moves from technical architecture to operational execution.

The series will cover:

1. From Capability to Execution

Why the supply chain AI conversation is moving beyond pilots, demonstrations, and technical capability toward measurable operational impact.

2. The Decision Bottleneck

How fragmented systems, functional handoffs, and delayed escalation create decision latency across modern supply chains.

3. From Systems of Record to Systems of Decision

Why AI adds a new decision layer above ERP, planning, TMS, WMS, and visibility platforms.

4. Operational AI Requires Action Pathways

Why AI insight has limited value unless it connects to workflows, owners, thresholds, execution systems, and feedback loops.

5. Five Requirements for Operational AI

The operating requirements that separate useful AI from AI theater: decision-ready data, contextual intelligence, action pathways, governance, and closed-loop learning.

6. From Agent Communication to Coordinated Execution

Why agentic AI matters only if it improves cross-functional coordination, not simply because agents can communicate.

7. Context Becomes a Requirement

Why supply chain AI must understand history, supplier performance, customer commitments, contracts, network dependencies, and prior exceptions.

8. Planning and Execution Are Converging

How AI changes the cadence of supply chain management by embedding planning logic inside execution workflows.

9. Market Structure: From Functional Software to Decision Architectures

Why buyers should increasingly evaluate technology providers by the decisions they improve, not only by the software category they occupy.

10. Operating Model Implications

How decision-centric AI changes roles, metrics, governance, accountability, and the future work of supply chain planners and operators.

The Buyer Question Is Changing

For years, supply chain technology evaluation has often started with functional categories.

What does the system do? Is it a planning platform, TMS, WMS, visibility solution, risk platform, procurement tool, or analytics application?

That question still matters. But it is no longer sufficient.

The more important question is becoming: what decisions does this system improve?

Does it improve replenishment decisions? Transportation decisions? Supplier risk decisions? Inventory allocation decisions? Customer commitment decisions? Exception resolution decisions?

And just as important: how does the recommendation connect to execution?

This is where the market is moving. Planning vendors, execution platforms, visibility providers, risk intelligence solutions, and enterprise software companies are all embedding AI more deeply into their offerings. Their starting points differ, but the direction is consistent.

The market is shifting from functional software toward decision-centric architectures.

That shift will create opportunity, confusion, and new evaluation challenges for buyers.

Why This Matters Now

Supply chain leaders are not short on AI claims.

They are short on proof.

They need to know where AI can improve real decisions, where it should remain advisory, where autonomy is inappropriate, and where governance needs to be built before scale.

They also need a practical way to separate serious operational AI from generic AI positioning.

That requires a more disciplined conversation. Not just about models. Not just about agents. Not just about data. But about decision environments, operating consequences, and the architecture required to move from insight to action.


Logistics Viewpoints and ARC Advisory Group are examining how decision intelligence, agentic AI, contextual reasoning, and next-generation supply chain architectures are reshaping supply chain technology markets.

Follow this ten-part series on Logistics Viewpoints as we examine how supply chain AI is moving from architecture to execution.

We will listen to your situation, offer a candid outside perspective, and, where appropriate, suggest practical next steps or areas where ARC research and advisory support may help.

The post AI in the Supply Chain: From Architecture to Execution appeared first on Logistics Viewpoints.
