
Securing the Chain: Data Integrity and Confidentiality in a Shared Ecosystem

Published 3 months ago

Call to Action: Download the full guide to gain in-depth insights and practical frameworks that will help you lead the transformation towards a resilient supply chain.

Part 6

In today’s hyperconnected supply chains, data is the currency of trust. It drives procurement decisions, inventory planning, shipping schedules, customs clearance, customer communications, and financial transactions. Yet as data flows seamlessly between thousands of partners, its integrity and confidentiality are increasingly at risk.

When data is corrupted, manipulated, or exposed, the consequences ripple across entire ecosystems. A falsified shipment manifest can paralyze ports. A leaked product design can destroy competitive advantage. A manipulated sensor feed can halt factory production.

Executives must therefore focus not only on protecting systems but on safeguarding the trustworthiness of the data itself.

1. Why Data Integrity Matters

Data integrity ensures that the data behind supply chain decisions is accurate, reliable, and protected against undetected tampering.

Operational impact: Corrupted demand forecasts cause overproduction or shortages.

Regulatory impact: Inaccurate customs filings can result in fines and shipment delays.

Financial impact: Manipulated invoices can trigger fraudulent payments.

Reputational impact: Leaked product designs or supplier contracts erode trust.

For supply chains, integrity breaches are not abstract IT issues; they translate directly into physical disruption and financial loss.

2. The Expanding Data Attack Surface

Modern supply chains exchange information through:

ERP, WMS, and TMS systems

EDI (Electronic Data Interchange) feeds

Blockchain platforms

IoT and OT sensor data

Cloud collaboration tools

Each point of exchange introduces risk. Attackers exploit:

Data poisoning: Corrupting AI training data for demand planning or logistics optimization.

Man-in-the-middle attacks: Intercepting and altering documents in transit.

API manipulation: Exploiting insecure APIs between partners.

Insider leaks: Employees selling or leaking confidential information.

The attack surface expands with every new supplier, integration, and cloud service.
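Man-in-the-middle and API manipulation attacks are usually countered by authenticating every message that crosses a partner boundary. As a minimal sketch, assuming two trading partners have already exchanged a secret key out of band, an HMAC-SHA256 tag computed with Python's standard `hmac` module lets the receiver detect any alteration of an EDI or API payload in transit. Note that HMAC is a symmetric technique, not a digital signature: it proves the message came from someone holding the shared key, not from one specific party. The key, message layout, and function names below are hypothetical.

```python
import hashlib
import hmac

# Hypothetical shared secret agreed out of band between two trading partners.
SHARED_KEY = b"example-partner-key-rotate-regularly"


def sign_message(payload: bytes, key: bytes = SHARED_KEY) -> str:
    """Return a hex HMAC-SHA256 tag that travels alongside the payload."""
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_message(payload: bytes, tag: str, key: bytes = SHARED_KEY) -> bool:
    """Recompute the tag and compare in constant time to resist timing attacks."""
    expected = sign_message(payload, key)
    return hmac.compare_digest(expected, tag)


if __name__ == "__main__":
    invoice = b"INVOICE|PO-1001|ACME-SUPPLY|AMOUNT=12500.00|IBAN=DE00EXAMPLE"
    tag = sign_message(invoice)
    print(verify_message(invoice, tag))  # True
    # An attacker in the middle swaps the bank details but cannot recompute the tag.
    tampered = invoice.replace(b"DE00EXAMPLE", b"XX99ATTACKER")
    print(verify_message(tampered, tag))  # False
```

In practice the tag would be carried in a message header or envelope field, and keys would be rotated and stored in a key management system rather than in source code.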

3. Confidentiality in Shared Ecosystems

Supply chains depend on collaboration, but collaboration requires sharing sensitive data: designs, volumes, schedules, customer lists, prices. The challenge: how to share enough to enable efficiency while keeping confidentiality intact.

Executives must consider:

Who has access: Suppliers, subcontractors, freight forwarders, customs authorities.

What is shared: Only necessary fields, not full datasets.

How it’s shared: Encrypted channels, tokenized identifiers, anonymized customer details.

How it’s stored: Controlled environments, strict access logs, secure cloud.

The principle should be minimum necessary disclosure.
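Minimum necessary disclosure can be enforced mechanically before data leaves the enterprise. The sketch below assumes a hypothetical allowlist of fields per partner category (the roles and field names are illustrative, not drawn from any standard) and strips everything else from an outbound record, denying all fields to unknown partner types by default.

```python
from typing import Any

# Hypothetical allowlists: which fields each partner category may receive.
ALLOWED_FIELDS: dict[str, set[str]] = {
    "freight_forwarder": {"shipment_id", "origin", "destination", "weight_kg", "ship_date"},
    "customs_broker": {"shipment_id", "hs_code", "declared_value", "origin", "destination"},
    "contract_manufacturer": {"sku", "quantity", "due_date"},
}


def minimal_view(record: dict[str, Any], partner_role: str) -> dict[str, Any]:
    """Return only the fields this partner role is allowed to see.

    Unknown roles receive an empty record (deny by default).
    """
    allowed = ALLOWED_FIELDS.get(partner_role, set())
    return {k: v for k, v in record.items() if k in allowed}


order = {
    "shipment_id": "SH-42",
    "sku": "PUMP-7",
    "quantity": 500,
    "unit_price": 18.40,            # commercially sensitive
    "customer_name": "Retailer X",  # confidential
    "origin": "Rotterdam",
    "destination": "Chicago",
    "weight_kg": 1200,
    "hs_code": "8413.70",
    "declared_value": 9200,
    "ship_date": "2026-03-01",
    "due_date": "2026-02-20",
}

print(minimal_view(order, "freight_forwarder"))
# {'shipment_id': 'SH-42', 'origin': 'Rotterdam', 'destination': 'Chicago', 'weight_kg': 1200, 'ship_date': '2026-03-01'}
```

Keeping the allowlist as data rather than code makes it easy to review with legal and procurement teams when a new partner is onboarded.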

4. Technologies for Ensuring Data Integrity

Several technologies provide assurance that data remains authentic and unaltered:

Hashing: A cryptographic hash (for example, SHA-256) acts as a digital fingerprint; any change to the data produces a different hash, exposing tampering.

Digital signatures: Validate sender identity and ensure message authenticity.

Immutable logs: Write-once storage prevents retroactive manipulation.

Blockchain and DLT: Distributed consensus mechanisms ensure that no single party can alter records unilaterally.

Secure time-stamping: Provides a verifiable, trusted chronology for transactions.
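Hashing and immutable logs combine naturally in a hash chain, the same basic structure that underpins blockchain ledgers. The sketch below uses only Python's standard library and assumes a single writer and hypothetical event names: each entry stores the SHA-256 hash of its predecessor, so a retroactive edit invalidates every later link. Removing the newest entries is only detectable if the latest hash is periodically shared with partners or a time-stamping service, which is where secure time-stamping complements the log.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(prev_hash: str, payload: dict) -> str:
    """SHA-256 over the previous hash plus a canonical JSON encoding of the payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


class HashChainLog:
    """Append-only log in which each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, payload: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        h = record_hash(prev, payload)
        self.entries.append({"payload": payload, "prev": prev, "hash": h})
        return h

    def verify(self) -> bool:
        """Recompute every link; an edited or reordered entry, or one removed
        from the middle of the chain, makes verification fail."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or record_hash(prev, entry["payload"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = HashChainLog()
log.append({"event": "ASN_RECEIVED", "po": "PO-1001", "qty": 500})
log.append({"event": "GOODS_RECEIPT", "po": "PO-1001", "qty": 500})
print(log.verify())  # True
log.entries[0]["payload"]["qty"] = 5000  # retroactive manipulation
print(log.verify())  # False
```

Canonical JSON (sorted keys, fixed separators) matters here: without it, two logically identical payloads could serialize differently and produce false tamper alarms.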

Executives should press for end-to-end traceability of data provenance.

5. Protecting Confidentiality Through Encryption

Encryption is the foundation of confidentiality.

At rest: Encrypt databases containing sensitive designs or pricing models.

In transit: Mandate TLS 1.3 for all B2B connections.

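In use: Confidential computing uses hardware-based trusted execution environments, such as Intel SGX enclaves or AMD SEV encrypted virtual machines, to keep data protected in memory while it is being processed.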

Tokenization: Replace sensitive fields (payment card numbers, customer IDs) with non-sensitive placeholders.

A “no plaintext anywhere” policy is becoming the new gold standard.
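Two of the controls above can be sketched with Python's standard library. The `TokenVault` class is a hypothetical, in-memory illustration of tokenization (a real vault would sit in a hardened, access-controlled, encrypted store), and `b2b_client_context` shows one way to enforce a TLS 1.3 floor on outbound partner connections using the real `ssl.SSLContext.minimum_version` attribute.

```python
import secrets
import ssl


class TokenVault:
    """Minimal in-memory tokenization vault (illustrative only).

    Downstream systems store and exchange tokens; only the vault can map
    a token back to the original value.
    """

    def __init__(self) -> None:
        self._token_to_value: dict[str, str] = {}
        self._value_to_token: dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        # Reuse the existing token so one customer maps to one placeholder.
        if value in self._value_to_token:
            return self._value_to_token[value]
        token = "tok_" + secrets.token_urlsafe(16)  # random, not derived from the value
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str) -> str:
        # In production, only authorized services would be allowed to call this.
        return self._token_to_value[token]


def b2b_client_context() -> ssl.SSLContext:
    """Client-side TLS context that refuses anything below TLS 1.3."""
    ctx = ssl.create_default_context()  # certificate and hostname checks enabled
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx


vault = TokenVault()
token = vault.tokenize("CUST-009321")
print(token.startswith("tok_"))  # True
print(vault.detokenize(token))   # CUST-009321
```

Because tokens are random rather than derived from the original value, a leaked token reveals nothing without access to the vault; this is the main design difference from simply hashing the field.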

6. Emerging Approaches to Data Sharing

Executives should track innovative methods for secure collaboration:

Secure multiparty computation (MPC): Multiple parties compute results on shared data without revealing their individual inputs.

Homomorphic encryption: Enables computation on encrypted data without decryption.

Data clean rooms: Neutral, secure environments where multiple firms can pool data for analysis without raw data exposure.

Confidential AI: AI models trained on encrypted or anonymized data to prevent leakage of trade secrets.

These approaches balance data utility with privacy.
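The core idea behind secure multiparty computation can be illustrated with additive secret sharing, one of the simplest MPC building blocks. In this sketch, three hypothetical firms learn their combined demand without any of them revealing its own figure. All parties are simulated in one process for readability, and the scheme assumes semi-honest participants who follow the protocol and do not collude; production MPC frameworks add networking, authentication, and protection against malicious parties.

```python
import secrets

PRIME = 2**61 - 1  # field modulus (a Mersenne prime); must exceed any possible total


def share(value: int, n_parties: int) -> list[int]:
    """Split value into n random shares that sum to value modulo PRIME.

    Any subset of fewer than n shares is uniformly random and reveals nothing.
    """
    shares = [secrets.randbelow(PRIME) for _ in range(n_parties - 1)]
    last = (value - sum(shares)) % PRIME
    return shares + [last]


def secure_sum(private_inputs: list[int]) -> int:
    n = len(private_inputs)
    # Each firm splits its own input and sends share j to party j.
    all_shares = [share(v, n) for v in private_inputs]
    # Party j adds up the shares it received; each one looks like random noise.
    partial_sums = [sum(s[j] for s in all_shares) % PRIME for j in range(n)]
    # Publishing only the partial sums reveals the total, not the individual inputs.
    return sum(partial_sums) % PRIME


# Hypothetical weekly demand figures held privately by three firms.
demand = [1200, 850, 430]
print(secure_sum(demand))  # 2480
```

Sums are the easy case; more complex analytics (averages by region, joint forecasts) require heavier protocols, which is why these techniques are still described above as emerging.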

7. Intellectual Property (IP) Protection in Supply Chains

One of the most sensitive forms of supply chain data is intellectual property.

Design leaks: Competitors can copy products before launch.

Formula theft: Food and pharma industries are particularly vulnerable.

Supplier disclosures: Sharing CAD drawings or specifications introduces risk.

Executives must enforce:

Strong IP protection clauses in supplier contracts.

Access controls limiting IP to trusted roles.

Digital watermarking to detect unauthorized redistribution.

Protecting IP is a strategic imperative.

8. Case Example: Pharmaceutical Supply Chain

A global pharmaceutical firm discovered counterfeit drugs entering markets after hackers manipulated supplier invoices and production schedules.

Remediation steps:

Adopted blockchain-based serialization of every drug unit.

Enforced digital signatures on all supplier documents.

Deployed AI anomaly detection to flag suspicious orders.

Result: improved data integrity across the supply chain, preventing fakes from reaching patients.
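The case does not describe the firm's anomaly detection model. As a deliberately simple statistical stand-in (not the AI approach used in the example), the sketch below flags an order whose quantity sits far outside a supplier's historical pattern. The three-standard-deviation threshold and the choice to route thin histories to manual review are assumptions.

```python
import statistics


def flag_suspicious(history: list[float], new_qty: float, threshold: float = 3.0) -> bool:
    """Flag an order whose quantity is more than `threshold` standard deviations
    from this supplier's historical mean.

    With fewer than two past orders there is too little history to judge, so
    the order is flagged for manual review.
    """
    if len(history) < 2:
        return True
    mean = statistics.fmean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        # Perfectly constant history: any deviation at all is unusual.
        return new_qty != mean
    return abs(new_qty - mean) / stdev > threshold


past_orders = [500, 520, 480, 510, 495, 505]
print(flag_suspicious(past_orders, 510))   # False
print(flag_suspicious(past_orders, 5000))  # True
```

A production system would typically look at many signals together (quantities, ship-to addresses, bank details, timing) rather than a single field, but the principle of comparing each transaction against an expected baseline is the same.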

9. Human and Process Controls

Technology alone is insufficient. Integrity and confidentiality require human vigilance.

Role-based access: Employees only see data relevant to their function.

Audit trails: Every data change logged with user attribution.

Supplier audits: Verify not only cyber practices but also data handling protocols.

Employee training: Raise awareness about confidentiality, phishing, and insider risk.

Executives must demand accountability at every level.
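Role-based access and user-attributed audit trails reinforce each other: every access decision, allowed or denied, becomes a logged event. The sketch below assumes a hypothetical role-to-permission map and keeps the trail in memory; in practice the trail itself would be written to append-only, tamper-evident storage.

```python
import datetime as dt
from dataclasses import dataclass, field

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "planner": {"read:forecast", "write:forecast"},
    "buyer": {"read:forecast", "read:supplier_price", "write:purchase_order"},
    "auditor": {"read:audit_log"},
}


@dataclass
class AuditTrail:
    entries: list[dict] = field(default_factory=list)

    def record(self, user: str, role: str, action: str, allowed: bool, detail: str = "") -> None:
        self.entries.append({
            "ts": dt.datetime.now(dt.timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "action": action,
            "allowed": allowed,
            "detail": detail,
        })


def authorize(trail: AuditTrail, user: str, role: str, action: str, detail: str = "") -> bool:
    """Check the role's permissions and log the attempt whether or not it succeeds."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    trail.record(user, role, action, allowed, detail)
    return allowed


trail = AuditTrail()
print(authorize(trail, "j.doe", "planner", "write:forecast", "SKU PUMP-7 +5%"))  # True
print(authorize(trail, "j.doe", "planner", "read:supplier_price"))              # False
print(len(trail.entries))  # 2
```

Logging denied attempts is as important as logging successful ones: repeated denials are often the earliest signal of insider probing.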

10. The Executive Lens

Why does this matter at the top table?

Trust equals competitiveness: Firms with better data integrity gain preferential contracts.

Regulatory compliance: GDPR, HIPAA, and industry-specific regulations mandate confidentiality.

Risk management: Integrity failures cascade into operational crises.

Investor assurance: Markets increasingly value data stewardship as a governance indicator.

For executives, data integrity is not IT housekeeping; it is a board-level trust issue.

Executive Takeaways from Part 6

Data integrity and confidentiality are the currency of trust in supply chains.

Attackers target APIs, EDI feeds, IoT sensors, and insider leaks.

Integrity tools: hashing, digital signatures, immutable logs, blockchain.

Confidentiality tools: encryption, tokenization, confidential computing.

Emerging approaches: MPC, homomorphic encryption, clean rooms, confidential AI.

Intellectual property requires special safeguards.

Technology must be paired with human and contractual controls.

Executives should treat data stewardship as a strategic differentiator.

Looking Ahead

In Part 7: The Human Factor, we’ll turn from technology to people, exploring how social engineering, insider threats, and cultural gaps can compromise even the most well-designed systems, and what leaders must do to build a truly cyber-aware workforce.


The post Securing the Chain: Data Integrity and Confidentiality in a Shared Ecosystem appeared first on Logistics Viewpoints.

You may like


Supply Chain and Logistics News February 23rd- 26th 2026

Published 24 hours ago, on 27 February 2026

This week’s supply chain landscape is defined by a massive push to bridge the gap between having data and actually using it. From the high-stakes legal battle over billion-dollar tariffs to a radical AI-driven workforce restructuring at WiseTech Global, the industry is moving past simple visibility toward a period of high-consequence execution. Whether it is the Supreme Court’s intervention in trade policy or the operationalization of decision intelligence showcased at the 30th Annual ARC Forum, the recurring theme is clear: the next competitive advantage belongs to those who can synchronize their technology, their inventory, and their legal strategies in real time. In this edition, we break down the four critical shifts—architectural, legal, operational, and structural—shaping the final days of February 2026.

Your News for the Week:

The Technology Gap: Why Supply Chain Execution Still Isn’t Fully Connected Yet

Richard Stewart of Infios argues that the primary technology gap in modern supply chain execution is not a lack of ambition or budget, but rather an architectural failure. Most existing systems, such as WMS and TMS, are designed to optimize within their own silos, leaving a critical disconnect during real-time disruptions where manual workarounds and spreadsheets are still required to coordinate responses. Citing the Supply Chain Execution Readiness Report, Richard highlights that 69% of leaders struggle with data quality and integration, driving a shift in buying criteria toward interoperability and real-time visibility. Ultimately, Richard suggests that the next competitive advantage will belong to organizations that move beyond simple visibility toward “connected execution,” prioritizing modular architectures that synchronize decisions across the entire operational landscape rather than just reporting on them.

FedEx sues the US Government, seeking a full refund over Trump Tariffs

FedEx has officially filed a lawsuit against the US government, seeking a full refund for duties paid under the Trump administration’s recent tariff policies. The move follows a landmark 6-3 Supreme Court ruling that found the president overstepped his authority by using emergency powers to bypass Congress’s sole power to levy taxes. While the court’s decision stopped the specific enforcement mechanism, it left the status of the estimated $175 billion already collected in limbo. FedEx is the first major carrier to seek reimbursement, and its legal challenge could set a precedent for the logistics industry and thousands of other importers currently navigating a volatile trade environment.

From Hidden Inventory to Returns Recovery: Exposing Operational Blind Spots

Hiu Wai Loh sheds light on the hidden inventory crisis and the costly returns black hole that plagues supply chains long after peak season ends. The research reveals that a staggering number of organizations suffer from fragmented data, leading to false stockouts and millions of dollars trapped in reverse logistics limbo. To overcome these operational blind spots, the author argues that companies must tear down silos and adopt a unified, real-time inventory model. By leveraging AI-driven smart disposition, businesses can efficiently route returns to their most profitable next destination, transforming a traditional cost center into a powerful engine for full-price recovery and year-round agility.

Avantor and Aera Technology presented at the 30th Annual ARC Forum on how they are operationalizing Decision Intelligence. Their session explored how modern supply chains are navigating the paradox of increasing global disruptions alongside record-breaking operational efficiency. Drawing on a case study from Avantor, the presentation demonstrated how Decision Intelligence (DI) can move beyond theoretical AI to automate thousands of routine daily decisions, such as stock rebalancing and purchase order prioritization. The key takeaway from the ARC Advisory Group’s 30th Leadership Forum is that companies should focus on “change-ready” solutions that solve immediate, high-impact problems rather than waiting for perfect data or fully autonomous systems.

WiseTech Global Cutting 30% of Workforce in AI Restructure

WiseTech Global, the developer of the CargoWise platform, has announced a major two-year restructuring plan that will involve cutting approximately 2,000 jobs, or 29% of its global workforce. This strategic pivot aims to integrate artificial intelligence deeper into both its internal operations and its customer-facing software, which currently handles a massive 75% of global customs transaction data. The layoffs are expected to hit the company’s U.S. cloud division, E2open, particularly hard, with some reports suggesting cuts of up to 50% there. This move comes at a turbulent time for the Australian tech giant, as it seeks to regain investor confidence following a 68% drop in share price since late 2024 amid leadership controversies and shifting market dynamics.


The post Supply Chain and Logistics News February 23rd- 26th 2026 appeared first on Logistics Viewpoints.


Burger King’s AI “Patty” Moves AI Into Frontline Execution

Published 2 days ago, on 26 February 2026

Burger King is piloting an AI assistant called “Patty” inside employee headsets as part of its broader BK Assistant platform. This is not a marketing chatbot. It is an operational system embedded into restaurant execution.

Patty supports crew members with preparation guidance, monitors equipment status, and analyzes customer interactions for defined service language such as “please” and “thank you.” Managers can query performance metrics tied to service quality in real time.

The architecture matters more than the novelty.

AI Inside the Operational Core

Patty is integrated with a cloud-based point-of-sale (POS) system. That connection allows:

near-real-time inventory updates across channels

equipment downtime alerts

synchronized digital menu adjustments

structured service quality measurement

If a product goes out of stock or a machine fails, availability can be updated across kiosks, drive-through boards, and digital systems within minutes.

This is AI operating inside the transaction layer, not sitting above it.
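Burger King has not published the internal design of BK Assistant, so the following is only a generic illustration of the closed-loop pattern described above: a minimal publish/subscribe sketch in which one "item unavailable" event fans out to every sales channel. All class, channel, and topic names are hypothetical.

```python
from collections import defaultdict
from typing import Callable


class AvailabilityBus:
    """Tiny publish/subscribe bus: one event fans out to every subscribed channel."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        for handler in self._subscribers[topic]:
            handler(event)


# Each channel keeps its own view of what can currently be sold.
channel_menus: dict[str, set[str]] = {
    "kiosk": {"fries", "shake"},
    "drive_through_board": {"fries", "shake"},
    "app": {"fries", "shake"},
}


def make_channel_handler(channel: str) -> Callable[[dict], None]:
    def handle(event: dict) -> None:
        channel_menus[channel].discard(event["item"])
    return handle


bus = AvailabilityBus()
for name in channel_menus:
    bus.subscribe("item_unavailable", make_channel_handler(name))

# For example, equipment telemetry reports that the shake machine is down.
bus.publish("item_unavailable", {"item": "shake", "reason": "equipment_down"})
print(channel_menus)
# {'kiosk': {'fries'}, 'drive_through_board': {'fries'}, 'app': {'fries'}}
```

The point of the pattern is that the detecting component does not need to know which channels exist; new channels simply subscribe, which is what makes updates "within minutes" feasible at scale.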

Earlier fast-food AI experiments focused on automated drive-through ordering. Burger King is taking a more measured approach there. The more consequential shift is internal execution intelligence.

Efficiency, Visibility, and Risk

Across retail and logistics sectors, AI agents are being embedded directly into workflows to standardize performance and compress response times. The value comes from integration and coordination, not conversational capability.

At the same time, customer sentiment toward fully automated service remains mixed. Privacy, workforce implications, and over-automation risk are active concerns. As AI begins monitoring tone and behavior, governance becomes part of the deployment decision.

Operational AI improves visibility. It also expands accountability.

Implications for Supply Chain and Operations Leaders

Three themes emerge:

Execution instrumentation – AI is now measuring soft metrics and converting them into structured operational data.

Closed loop response – When connected to POS and inventory systems, AI can both detect issues and trigger corrective updates.

Governance at scale – Embedding AI at the edge requires clear oversight, performance auditability, and workforce alignment.

Burger King plans to expand BK Assistant across U.S. restaurants by the end of 2026, with Patty currently piloting in several hundred locations.

This is not a fast food curiosity. It is a signal.

AI is moving from analytics to execution. From dashboards to headsets. From advisory tools to operational participants.

For supply chain leaders, the question is no longer whether AI will enter frontline operations. The question is how intentionally it will be architected and governed once it does.

The post Burger King’s AI “Patty” Moves AI Into Frontline Execution appeared first on Logistics Viewpoints.


AI and Enterprise Software: Is the “SaaSpocalypse” Narrative Overstated?

Published 2 days ago, on 26 February 2026

Capital is rotating. Growth has given way to value, and within technology the divergence is increasingly pronounced. While broad indices have stabilized, many software names have not. Since late 2025, software equities have materially underperformed other parts of the technology complex. Forward revenue growth across many mid-cap SaaS firms has slowed from prior expansion levels, net retention rates have edged down in several categories, and valuation multiples have compressed accordingly. Markets are repricing both growth durability and margin structure.

The prevailing explanation is straightforward. Generative AI lowers barriers to entry, reduces the cost of building applications, and compresses differentiation. If application logic becomes easier to produce, competitive intensity increases and pricing power weakens. The result is visible not only in equity valuations, but in moderated expansion rates and tighter forward guidance. There is substance behind that concern. But reducing enterprise software economics to code production misses where the structural leverage in these platforms actually resides.

The Core Bear Case

The bearish thesis rests on three related propositions: AI commoditizes application logic, accelerates competitive entry, and pressures margins. If enterprises can generate software dynamically, recurring subscription models face structural pressure. If workflows can be automated through agents, reliance on fixed applications may decline. If code becomes less scarce, incumbents may struggle to defend premium multiples.

The repricing in software reflects these risks. Multiples have compressed meaningfully, and growth expectations have moderated across several verticals. In certain categories, retention softness suggests substitution pressure is already emerging. These signals should not be dismissed as temporary volatility.

At the same time, equating software value solely with feature output or code generation is a simplification. Enterprise software durability rarely rests on feature sets alone.

What Enterprise Software Actually Represents

In supply chain environments, systems function as operational coordination layers rather than isolated applications. Transportation management systems, warehouse platforms, planning suites, and multi-enterprise visibility networks sit at the center of integrated transaction flows. They embed years of configuration, exception handling logic, compliance mappings, and cross-functional workflows. Over time, they accumulate operational data that informs sourcing, forecasting, transportation optimization, and execution decisions across the enterprise.

Replacing those systems is not equivalent to generating new code. It requires rebuilding institutional memory, re-establishing integration points, and re-validating compliance controls across internal and external stakeholders. The switching cost is not interface retraining; it is operational re-architecture.

In our research on AI system design in supply chains, the recurring conclusion is that structural advantage stems from coordination, persistent context, and integration density. Model capability matters, but economic durability flows from how systems connect and govern activity across distributed networks. That distinction is central to evaluating enterprise software in the current environment.

Where Risk Is Real

Not all software categories have equivalent structural protection. Risk is most evident in narrowly defined vertical tools, lightweight workflow utilities, and productivity-layer applications with limited proprietary data accumulation. In these segments, generative models can replicate core functionality with relatively low switching friction. Pricing pressure can intensify quickly, and margin compression may prove structural rather than cyclical.

By contrast, enterprise workflow orchestration platforms deeply embedded in core business processes create operational dependency. Replacing them requires redesigning process architecture, not simply substituting interfaces. Systems that accumulate years of transaction data, customization layers, and ecosystem integrations generate switching costs that extend beyond feature parity. Observability and monitoring platforms that collect continuous telemetry function as operational infrastructure; as AI agents proliferate, the need for measurement, traceability, and governance increases rather than declines.

In supply chain software specifically, planning platforms and transportation orchestration systems accumulate integration density over time. That density represents economic friction against displacement and reinforces durability when market volatility increases.

AI as Architectural Pressure

AI will alter software economics. It will increase development intensity, shorten product cycles, and compress margins in commoditized segments. Vendors operating at the surface layer of functionality will face sustained pressure.

However, AI simultaneously increases coordination complexity. As autonomous agents proliferate, enterprises require more governance controls, more integration layers, and more persistent contextual memory. The economic question shifts from “Who can build features fastest?” to “Who can coordinate distributed intelligence most reliably?”

Agent-to-agent communication, contextual memory frameworks, retrieval-based reasoning, and graph-aware modeling are becoming foundational design considerations in supply chain environments, as described in ARC’s white paper AI in the Supply Chain: Architecting the Future of Logistics. Vendors capable of governing these interactions at scale may strengthen their structural position. Vendors confined to interface-layer differentiation may see pricing pressure intensify. The outcome is not uniform decline; it is structural differentiation within the sector.

Valuation vs. Structural Impairment

Markets reprice sectors quickly when uncertainty rises. The current adjustment reflects legitimate concerns: slower growth trajectories, reduced retention durability, increased competitive intensity, and rising research and development requirements. These are measurable economic factors.

The open question is whether valuations reflect permanent impairment across enterprise software broadly, or whether the market is failing to distinguish between commoditized applications and structurally embedded coordination platforms.

Some observers argue that AI may ultimately expand the addressable market for enterprise systems rather than compress it. As AI adoption increases, enterprises may require additional orchestration frameworks, governance layers, and system-level controls. In that scenario, platforms with embedded workflows and distribution reach could see increased strategic relevance. The impact will vary materially by category and architectural depth.

In supply chain markets, complexity is not declining. Cross-border regulation is tightening, network volatility remains elevated, and multi-enterprise coordination is becoming more demanding. Economic value accrues to platforms that integrate and govern transactions, not to those that merely present information.

Implications for Enterprise Buyers

For supply chain leaders, the relevant issue is not short-term equity performance but architectural positioning. Does the platform function as a system of record embedded in transaction flows, or as a reporting layer adjacent to them? How deeply is it integrated into compliance processes, procurement logic, and transportation execution? Does it accumulate proprietary operational data that reinforces switching costs over time? Is it evolving toward coordinated AI architectures, or layering assistive tools onto a static foundation?

AI will not eliminate enterprise systems. It will expose those whose economic value rests primarily on surface functionality rather than integration depth.

A Measured Conclusion

The current narrative captures real pressure within segments of the software sector, but it does not fully account for structural differentiation. Certain categories face sustained pricing compression where differentiation is shallow and switching friction is low. Others may strengthen as AI increases coordination demands, governance requirements, and integration complexity.

The decisive factor will not be branding or feature velocity. It will be integration density, data gravity, and the ability to coordinate distributed intelligence across enterprise and partner networks. In supply chain contexts, platforms that govern transactions, maintain contextual continuity, and orchestrate multi-node operations retain structural advantage. Platforms that merely automate isolated tasks face a more uncertain economic trajectory.

That distinction, rather than headline narrative, will determine long-term outcomes.

_______________________________________________________________________________

Download the Full Architecture Framework

A2A (agent-to-agent communication) is only one component of a broader intelligent supply chain architecture. For a structured analysis of how A2A integrates with context-aware systems, retrieval frameworks, graph-based reasoning, and data harmonization requirements, download the full white paper.

The paper outlines the architectural model, governance considerations, and practical implementation path for enterprises building connected intelligence across their supply networks.

Download the white paper to explore the complete framework.

The post AI and Enterprise Software: Is the “SaaSpocalypse” Narrative Overstated? appeared first on Logistics Viewpoints.

Supply Chain and Logistics News February 23rd- 26th 2026

Burger King’s AI “Patty” Moves AI Into Frontline Execution

AI and Enterprise Software: Is the “SaaSpocalypse” Narrative Overstated?

Walmart and the New Supply Chain Reality: AI, Automation, and Resilience

Ex-Asia ocean rates climb on GRIs, despite slowing demand – October 22, 2025 Update

13 Books Logistics And Supply Chain Experts Need To Read

Trending

-

Non classé12 mois ago

Non classé12 mois agoWalmart and the New Supply Chain Reality: AI, Automation, and Resilience

- Non classé4 mois ago

Ex-Asia ocean rates climb on GRIs, despite slowing demand – October 22, 2025 Update

- Non classé7 mois ago

13 Books Logistics And Supply Chain Experts Need To Read

- Non classé1 mois ago

Container Shipping Overcapacity & Rate Outlook 2026

- Non classé4 mois ago

Ocean rates climb – for now – on GRIs despite demand slump; Red Sea return coming soon? – November 11, 2025 Update

- Non classé1 an ago

Unlocking Digital Efficiency in Logistics – Data Standards and Integration

-

Non classé6 mois ago

Non classé6 mois agoBlue Yonder Acquires Optoro to Revolutionize Returns Management

-

Non classé5 mois ago

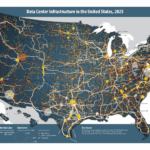

Non classé5 mois agoNavigating the Energy Demands of AI: How Data Center Growth Is Transforming Utility Planning and Power Infrastructure