Non classé

Supply Chain and Logistics News March 23rd-26th 2026

Published

11 heures agoon

By

This week in logistics and supply chain news, the industry sees a major shift in industrial software with the launch of Velotic, a standalone company integrating powerhouse platforms like Proficy, Kepware, and ThingWorx. The landscape further evolves as Walmart secures AI patents for real-time pricing and demand forecasting, while Crusoe and Redwood Materials scale their modular AI data center partnership in Nevada. Rounding out the updates, a modernized EU-US trade deal restores structured access for steel line pipe, and the USPS announces a temporary 8% rate hike for select domestic services starting in late April.

Your top Supply Chain and Logistics News for the Week:

Velotic announced its launch as an independent industrial software company, bringing together multiple established platforms to support evolving industrial and manufacturing requirements. The formation of Velotic coincides with the closing of TPG’s previously announced acquisitions of Proficy, the former manufacturing software business of GE Vernova, and PTC’s former industrial connectivity and Internet of Things (IoT) businesses.

According to Craig Resnick, Vice President, ARC Advisory Group, “The industrial software market is entering a pivotal moment. Manufacturers are under pressure to modernize operations, extract greater value from data, and rapidly adopt AI—without sacrificing reliability, safety, or control. Against this backdrop, the formation of Velotic as a new standalone industrial software company bringing together Proficy®, Kepware® and ThingWorx® represents more than a corporate restructuring. It signals a shift in how industrial data, analytics, and operations technology (OT) can be delivered at scale, that ARC strongly advocates.”

Walmart AI Pricing Patents Signal Shift Toward Real-Time Retail Execution

Walmart has secured two patents related to automated pricing and demand forecasting, drawing attention to how large retailers are evolving their pricing and execution capabilities. One patent, System and Method for Dynamically Updating Prices on an E-Commerce Platform, covers a system that can dynamically update online prices based on changing market conditions. A second, Walmart Pricing and Demand Forecasting Patent Classification, relates to demand forecasting technology designed to estimate what customers will buy and recommend pricing accordingly. At the same time, Walmart is expanding digital shelf labels across its U.S. stores, replacing paper labels with centrally managed electronic displays.

Individually, none of these elements are new. Retailers have long used forecasting models, pricing tools, and store execution processes. What is notable is the combination.

Walmart now has three capabilities aligned:

Demand forecasting tied to predictive models

Price recommendation based on that demand

Store-level infrastructure capable of rapid execution

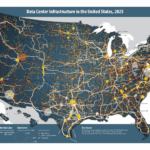

Crusoe and Redwood Materials Expand Strategic Partnership

On March 24, 2026, Crusoe, an AI infrastructure company, and Redwood Materials, a leader in battery recycling and energy storage, announced a major expansion of their existing partnership. The move scales their joint operations in Sparks, Nevada, to seven times the original AI infrastructure density, providing a blueprint for how second-life batteries can power high-performance computing. The expansion follows a successful pilot program launched in June 2025. Initially, the project utilized four Crusoe Spark™ modular data centers. Following seven months of high performance, the companies are increasing the deployment to 24 modular data centers. This growth is made possible by the hardware’s “modular” nature. Unlike traditional data centers that require years of stationary construction, modular units can be manufactured off-site and deployed in months.

EU Parliament Approves Key Terms of US Trade Deal

The newly approved EU–US line pipe agreement updates the terms under which European steel line pipe can enter the U.S. market, reinstating duty‑free access under a revised tariff‑rate quota system. Under the deal, the U.S. will allow a defined volume of EU‑produced line pipe to enter without Section 232 duties, while volumes exceeding the quota remain subject to tariffs. The agreement also includes strengthened verification requirements intended to prevent transshipment of line pipe originating from non‑EU countries—particularly China—through Europe. By formalizing these updated quota levels and compliance rules, the two sides have effectively modernized an earlier arrangement that had lapsed, restoring a structured, more predictable framework for EU steelmakers and U.S. importers.

USPS Sets 8% Temporary Rate Hike for Select Domestic Products

The U.S. Postal Service has approved a temporary rate increase for its Ground Advantage and Parcel Select services, raising prices for shippers during the peak spring and summer mailing period. The adjustment, which requires approval from the Postal Regulatory Commission, is structured as a seasonal surcharge designed to help USPS manage higher operating costs while maintaining service performance. Under the proposal, rates for Ground Advantage parcels would rise modestly across weight and distance tiers, while Parcel Select—often used by high‑volume shippers and consolidators—would see increases targeted at heavier packages and longer delivery zones. The temporary pricing would take effect April 28 and remain in place through July 13, after which rates revert to prior levels.

Song of the week:

The post Supply Chain and Logistics News March 23rd-26th 2026 appeared first on Logistics Viewpoints.

You may like

Non classé

Why Most RAG Systems Fail Before Generation Begins: The Missing Retrieval Validation Layer

Published

10 heures agoon

27 mars 2026By

Most RAG systems fail not on generation, but on unvalidated retrieval. Agentic RAG introduces a control loop that improves decision quality in multi-source environments.

Most retrieval-augmented generation (RAG) implementations do not fail at the model layer. They fail earlier, when systems proceed without validating whether retrieved information is sufficient.

In supply chain environments, where decisions depend on fragmented data across planning systems, execution platforms, and external signals, this limitation becomes operationally significant.

This is a structural issue, not a model performance issue.

Where Standard RAG Breaks Down

A conventional RAG architecture is linear. A query is embedded, relevant documents are retrieved from a vector database, and a language model generates a response. This works well when the question is clear and the knowledge base is well organized.

The limitations emerge under more realistic conditions:

Ambiguous queries are taken at face value, with no attempt to clarify intent

Answers distributed across multiple sources are only partially retrieved

Retrieval results that appear relevant but are incomplete or outdated are treated as sufficient

In each case, the system proceeds without validating whether the inputs are adequate. The model generates an answer regardless of the quality of the retrieval step.

In a supply chain context, this can translate directly into poor decisions. A system may retrieve an outdated tariff rule, incomplete supplier performance data, or a partial inventory position and still produce a confident recommendation.

The failure mode is not visible until the decision is already made.

From Pipeline to Loop

Agentic RAG introduces a control loop into this process.

Instead of a single pass from query to answer, the system evaluates intermediate results and can take corrective action. The sequence becomes:

Retrieve

Evaluate relevance and completeness

Decide whether to proceed or refine

Retrieve again if necessary

Generate response

This introduces decision points that were previously absent. The language model is no longer limited to generation. It can also act, selecting tools, reformulating queries, and routing across sources.

The architectural change is modest in concept but significant in effect. It converts retrieval from a one-shot operation into an iterative process with feedback.

This aligns with how advanced supply chain systems evolve, from static planning runs toward continuous, feedback-driven control processes.

Three Functional Capabilities

Agentic RAG systems typically introduce three capabilities that directly address the known failure modes.

Query refinement allows the system to rewrite or decompose ambiguous inputs before retrieval. This improves alignment between user intent and search results.

Routing and tool selection allow the system to query multiple sources. In supply chain environments, this is critical. A single question may require access to ERP data, transportation events, supplier records, and external regulatory sources.

Self-evaluation introduces a checkpoint between retrieval and generation. The system assesses whether the retrieved content is relevant, complete, and current. If not, it retries.

These functions are not independent features. Together, they form the control logic that governs the loop.

Supply Chain Use Cases

The value of this approach becomes clearer in multi-source, decision-heavy workflows.

Trade compliance

Determining import requirements may require combining tariff schedules, product classifications, and country-specific regulations. A single retrieval pass is often insufficient.

Supplier risk assessment

Evaluating a supplier may involve financial data, historical delivery performance, geopolitical exposure, and contract terms. These signals are rarely co-located.

Inventory and fulfillment decisions

Answering a seemingly simple question like “Can we fulfill this order?” may require checking available inventory, inbound shipments, allocation rules, and transportation constraints across systems.

In each case, the ability to evaluate and retry retrieval materially improves decision quality.

Trade-Offs Are Material

The addition of a control loop is not free.

Latency increases with each iteration. A simple query that would resolve in one pass may now require multiple retrieval and evaluation cycles.

Cost scales with the number of model calls. Systems operating at enterprise query volumes can see a meaningful increase in token consumption.

Determinism declines. Because the agent can make different decisions at each step, the same query may produce different paths and outputs across runs. This complicates debugging and validation.

There is also a structural limitation. The evaluation step itself relies on a language model. The system is effectively using one probabilistic model to judge the output of another.

These constraints directly affect production viability.

Where Agentic RAG Fits

Agentic RAG is not a universal upgrade. It is a targeted architectural choice.

It is appropriate when:

Queries are ambiguous or multi-step

Information is distributed across multiple systems

Decision quality is more important than latency

It is less appropriate when:

Queries are simple and repetitive

The knowledge base is clean and centralized

Response time and cost are tightly constrained

A hybrid model is likely to emerge as the standard approach. Standard RAG handles high-volume, low-complexity queries. Agentic RAG is invoked selectively when the system detects ambiguity or low retrieval confidence.

This mirrors how supply chain systems separate routine execution from exception-driven processes.

What This Means for Deployment

For supply chain leaders and technology providers, the implication is practical:

Do not introduce agentic loops to compensate for poor data or weak retrieval design

Apply agentic RAG selectively to high-value, multi-source decision workflows

Maintain simpler architectures for high-volume operational queries

Treat evaluation and retry logic as part of system design, not model tuning

In most cases, improving data quality and retrieval structure will deliver more value than adding additional reasoning layers.

Closing Perspective

The shift from pipeline to loop is a broader pattern in AI system design.

Static architectures assume that inputs are sufficient. Control-based architectures assume that they are not, and build mechanisms to test and correct them.

Agentic RAG applies this principle to retrieval.

The value is not in the agent itself. It is in the decision points introduced between retrieval and generation. Those checkpoints determine whether the system proceeds, retries, or escalates.

The implication is straightforward.

Agentic RAG should be treated as a targeted control mechanism, not a default architecture.

Apply it where decisions depend on fragmented, multi-source information and the cost of error is high. Avoid it where speed, predictability, and scale dominate.

The distinction is not technical. It is operational. Organizations that apply it selectively will improve decision quality. Those that apply it broadly risk adding cost and complexity without measurable gain.

The post Why Most RAG Systems Fail Before Generation Begins: The Missing Retrieval Validation Layer appeared first on Logistics Viewpoints.

Non classé

Amazon Tests Structured Delivery Windows as It Repositions Speed

Published

1 jour agoon

26 mars 2026By

Amazon is testing a delivery model that divides the day into ten delivery windows across a 24-hour period. This follows recent efforts around sub-hour delivery and a proposed one-hour “rush” pickup model using stores such as Whole Foods Market.

The direction is straightforward: delivery speed is being segmented and potentially priced, rather than treated as a single standard.

From Uniform Speed to Tiered Service

The delivery window model introduces structured choice:

Customers select defined delivery windows

Faster or narrower windows may carry higher cost

Broader windows allow for lower-cost fulfillment

This allows Amazon to shape demand instead of only responding to it.

Operational Impact

The focus is control over network flow rather than absolute speed. With defined windows, Amazon can:

Improve route density

Reduce peak congestion

Align delivery timing with available capacity

The proposed “rush” pickup model extends this into physical locations. By combining online inventory with store stock, stores function as local fulfillment nodes.

Competitive Context

Walmart continues to expand store-based fulfillment and drone delivery. The competitive focus remains:

Proximity to demand

Flexibility in fulfillment options

Cost to serve at different service levels

Amazon’s approach emphasizes range of options rather than a single fastest promise.

Economic Model

This structure creates a clearer link between service level and cost. As supply chains become more dynamic, companies are aligning service commitments with operational constraints and capacity . Delivery windows apply that logic to the last mile.

Implications

If this model scales:

Speed becomes a selectable service level

Customer choice influences network efficiency

Pricing can be used to balance demand and capacity

The change is practical. The objective is not simply faster delivery, but more controlled execution of it.

The post Amazon Tests Structured Delivery Windows as It Repositions Speed appeared first on Logistics Viewpoints.

Non classé

NVIDIA and the Role of AI Infrastructure in Supply Chains

Published

1 jour agoon

26 mars 2026By

NVIDIA is not a supply chain software provider. It is part of the infrastructure layer now supporting how supply chain decisions are made.

As AI moves from isolated use cases into core operations, compute and runtime environments become part of system design. NVIDIA’s role sits at that layer.

Infrastructure, not applications

NVIDIA provides the underlying components used to build and run AI systems:

GPU hardware for model training and inference

CUDA and supporting libraries

Enterprise AI deployment software

Simulation platforms such as Omniverse

These are used by software vendors and enterprises. They are not supply chain applications themselves.

From isolated models to concurrent workloads

Earlier AI deployments in supply chains were limited to specific functions. Forecasting, routing, and warehouse automation were typically deployed independently.

With access to scalable compute, multiple models can now run in parallel and update outputs more frequently. This supports:

Continuous forecast updates

Real-time routing adjustments

Computer vision in warehouse operations

Network-level scenario modeling

The change is not the use case. It is the ability to operate them together and at higher frequency.

Planning is no longer periodic

Traditional systems operate in cycles. Data is collected, plans are generated, and execution follows. AI systems supported by GPU infrastructure operate on shorter loops.

Forecasts are updated as new data arrives

Transportation decisions adjust during execution

Inventory positions shift as conditions change

Exceptions are identified earlier

This reduces the time between signal and response.

Simulation as a planning tool

Simulation has been used in supply chains for years, but often with limited scope. GPU-based environments allow more detailed models:

Warehouse layout and flow

Distribution network scenarios

Equipment and automation performance

Platforms such as Omniverse support these use cases. The objective is to evaluate decisions before deployment.

Multi-system coordination

As AI expands across functions, coordination becomes a constraint.

Running multiple models simultaneously requires:

Sufficient compute capacity

Low-latency processing

Integration across systems

NVIDIA’s platforms are commonly used in environments where these conditions are required.

Why this matters

Supply chains are operating with higher variability across demand, supply, and cost.

Systems designed for stable conditions are less effective in this environment.

AI-based approaches increase the frequency and scope of decision-making. That depends on infrastructure capable of supporting continuous model execution.

Implications

The primary question is not whether to adopt AI, but how it is supported. This includes:

Compute availability for training and inference

Data integration across systems

Ability to run models continuously

Use of simulation in planning

AI deployment in supply chains is increasingly tied to infrastructure decisions.

The shift underway is practical. Companies are working through how to run models more frequently, connect systems more effectively, and make decisions with less delay. The enabling technologies are becoming clearer, and the path forward is less about experimentation and more about execution.

The post NVIDIA and the Role of AI Infrastructure in Supply Chains appeared first on Logistics Viewpoints.

Why Most RAG Systems Fail Before Generation Begins: The Missing Retrieval Validation Layer

Supply Chain and Logistics News March 23rd-26th 2026

Amazon Tests Structured Delivery Windows as It Repositions Speed

Walmart and the New Supply Chain Reality: AI, Automation, and Resilience

Ex-Asia ocean rates climb on GRIs, despite slowing demand – October 22, 2025 Update

13 Books Logistics And Supply Chain Experts Need To Read

Trending

-

Non classé1 an ago

Non classé1 an agoWalmart and the New Supply Chain Reality: AI, Automation, and Resilience

- Non classé5 mois ago

Ex-Asia ocean rates climb on GRIs, despite slowing demand – October 22, 2025 Update

- Non classé7 mois ago

13 Books Logistics And Supply Chain Experts Need To Read

- Non classé2 mois ago

Container Shipping Overcapacity & Rate Outlook 2026

- Non classé5 mois ago

Ocean rates climb – for now – on GRIs despite demand slump; Red Sea return coming soon? – November 11, 2025 Update

- Non classé1 mois ago

Ocean rates ease as LNY begins; US port call fees again? – February 17, 2026 Update

- Non classé1 an ago

Unlocking Digital Efficiency in Logistics – Data Standards and Integration

-

Non classé6 mois ago

Non classé6 mois agoNavigating the Energy Demands of AI: How Data Center Growth Is Transforming Utility Planning and Power Infrastructure