
OpenAI and AWS Forge $38B Alliance, Microsoft Exclusivity Ends, New Multi-Cloud AI Compute Era Begins

Published 6 months ago

OpenAI has entered into a multi-year, $38 billion agreement with Amazon Web Services, formally ending its exclusive reliance on Microsoft Azure for cloud infrastructure. The deal, announced today, represents a fundamental realignment in the cloud compute ecosystem supporting advanced AI workloads.

Under the agreement, OpenAI will immediately begin running large-scale training and inference operations on AWS, gaining access to hundreds of thousands of NVIDIA GPUs hosted on Amazon EC2 UltraServers, along with the ability to scale across tens of millions of CPUs over the next several years.

“Scaling frontier AI requires massive, reliable compute,” said Sam Altman, OpenAI’s CEO. “Our partnership with AWS strengthens the broad compute ecosystem that will power this next era.”

A Structural Shift Toward Multi-Cloud AI

This marks the first formal infrastructure partnership between OpenAI and AWS. Since 2019, Microsoft has provided the primary compute backbone for OpenAI, anchored by a $13 billion investment and a multi-year Azure commitment. That exclusivity expired earlier this year, opening the door to a multi-provider model.

AWS now becomes OpenAI’s largest secondary partner, joining smaller agreements already in place with Google Cloud and Oracle, and positioning itself as a co-equal pillar in OpenAI’s global compute strategy.

“AWS brings both scale and maturity to AI infrastructure,” noted Matt Garman, AWS CEO. “This agreement demonstrates why AWS is uniquely positioned to support OpenAI’s demanding AI workloads.”

Infrastructure Scope and Deployment

The deployment will include clusters of NVIDIA GB200 and GB300 GPUs linked through UltraServer nodes engineered for low-latency, high-bandwidth interconnects. The architecture supports both model training and large-scale inference for applications such as ChatGPT, Codex, and next-generation multimodal systems.

AWS has already begun allocating capacity, with full deployment expected by late 2026. The framework also includes options for expansion into 2027 and beyond, giving OpenAI flexibility as model complexity and usage continue to grow.

Continued Microsoft Collaboration

Despite the AWS deal, OpenAI maintains its strategic and financial relationship with Microsoft, including a separate $250 billion incremental commitment to Azure. The move reflects a deliberate multi-cloud posture, a strategy increasingly favored by large-scale AI developers seeking to balance cost, access to specialized chips, and platform resiliency.

Implications for Supply Chain and Infrastructure Leaders

This announcement underscores several macro-trends relevant to logistics and industrial technology executives:

AI Infrastructure Is Becoming a Supply Chain of Its Own

Cloud capacity, GPUs, and networking fabric are now constrained global commodities. Long-term compute contracts mirror procurement models traditionally seen in manufacturing or energy, locking in scarce resources ahead of demand.

Multi-Cloud Neutrality Reduces Vendor Lock-In

The shift toward multiple cloud providers parallels how diversified sourcing reduces single-supplier risk. Expect enterprise buyers to apply similar logic when procuring AI infrastructure and software services.

Operational AI at Scale Requires Cross-Vendor Interoperability

As companies like OpenAI distribute workloads across ecosystems, interoperability standards, ranging from APIs to data-plane orchestration, will become critical for continuity, performance, and governance.

CapEx Discipline Returns to the Forefront

With multi-year AI compute deals now exceeding $1.4 trillion in aggregate commitments across the sector, CFOs and CIOs are under pressure to evaluate utilization efficiency and long-term ROI of their AI infrastructure spend.

Broader Market Context

AWS’s win follows similar capacity expansions with Anthropic and Stability AI, but this partnership represents its highest-profile AI infrastructure engagement to date. It also signals that OpenAI intends to maintain independence in its technical roadmap, balancing strategic investors with diversified operational suppliers.

The timing is notable: OpenAI recently restructured its governance model to simplify corporate oversight, a move analysts interpret as preparation for a potential IPO that could value the company near $1 trillion.

Amazon's stock rose approximately 5 percent following the announcement, reflecting investor confidence in the long-term demand for AI-class compute.

Outlook

For the logistics and manufacturing sectors, the implications extend beyond software. The same GPU-based data centers that train language models are also powering digital twins, simulation models, and optimization engines increasingly embedded in supply chain planning.

As hyperscalers compete for AI workloads, enterprises should expect faster innovation in distributed computing, lower latency connectivity, and new pay-as-you-go models designed for AI-intensive industrial applications.

Summary

The $38 billion OpenAI–AWS partnership marks a decisive end to Microsoft’s exclusivity and a broader normalization of multi-cloud AI ecosystems.

For technology and supply-chain leaders, it serves as a reminder: compute itself has become a strategic resource, one that must now be sourced, diversified, and managed with the same rigor once reserved for physical inventory.

The post OpenAI and AWS Forge $38B Alliance, Microsoft Exclusivity Ends, New Multi-Cloud AI Compute Era Begins appeared first on Logistics Viewpoints.


The Home Depot Buys SIMPL Automation to Speed Fulfillment and Tighten DC Performance

Published on April 17, 2026

The deal signals a continued push to use automation, AI, and denser storage design to improve delivery speed, labor efficiency, and product availability.

The Home Depot has acquired SIMPL Automation, a Massachusetts-based provider of warehouse automation and technology systems, as the retailer continues to invest in faster, more efficient fulfillment operations.

The move follows a pilot at Home Depot’s Locust Grove, Georgia distribution center. According to the company, the pilot improved pick speed, shortened cycle times, and reduced product touches. SIMPL also brings a patented storage and retrieval solution designed to increase storage density inside the distribution center. That should help Home Depot position more high-demand inventory closer to the customer and support faster delivery.

“We’re focused on providing the best interconnected experience in home improvement by having products in stock and ready to deliver to our customers whether it’s to the home or jobsite,” said Amit Kalra, senior vice president of supply chain at The Home Depot. “By bringing SIMPL’s industry-leading automation into our operations, we’re accelerating the flow of products through our distribution network to deliver with unprecedented speed and precision.”

The strategic logic is straightforward. Retailers are under continued pressure to improve service levels while also protecting margins. That makes distribution center automation more than a labor story. It is now tied directly to throughput, storage utilization, inventory positioning, and delivery performance.

Home Depot framed the acquisition as part of a broader supply chain innovation agenda that includes AI-powered inventory management, advanced analytics, mobile technology, automation, and live delivery tracking. SIMPL fits neatly into that effort. Its value is not just in automating tasks, but in improving the overall flow of goods through the network.

This matters because fulfillment speed is increasingly determined inside the four walls. Faster picks, fewer touches, and denser storage can materially improve network responsiveness without requiring entirely new infrastructure. In that sense, the acquisition is not just about mechanization. It is about tighter execution.

There is also a second point worth noting. Home Depot is acquiring a capability it already tested in its own environment. That lowers adoption risk and suggests this was not a speculative technology purchase. It was an operationally validated one.

For supply chain leaders, this is another sign that warehouse automation is becoming a more central part of retail network strategy. The winners will not automate for automation's sake. They will deploy automation where it improves flow, reduces friction, and helps place the right inventory closer to demand.

The post The Home Depot Buys SIMPL Automation to Speed Fulfillment and Tighten DC Performance appeared first on Logistics Viewpoints.


Strait of Hormuz Reopens to Commercial Shipping, but Risk to Global Trade Remains

Published on April 17, 2026

Iran says commercial traffic can resume through the Strait of Hormuz during the 10-day Lebanon ceasefire, sending oil prices sharply lower. But with U.S. pressure on Iranian shipping still in place and shipowners seeking operational clarity, this is a partial reopening, not a return to normal.

Iran said Friday that the Strait of Hormuz is open to commercial shipping for the duration of the current ceasefire, a move that immediately eased market fears over one of the world’s most important energy chokepoints.

Oil prices fell sharply on the news. The market response was rational: even a temporary reopening of Hormuz reduces the near-term risk of a sustained disruption to crude and LNG flows.

But supply chain leaders should be careful not to read this as full normalization.

President Donald Trump said commercial passage is open, while also stating that the U.S. naval blockade on Iranian ships and ports will remain in force until a broader agreement is reached. That leaves a meaningful contradiction in place. Merchant traffic may resume, but the broader security and enforcement environment remains unsettled.

That uncertainty is showing up quickly in shipping behavior. Carriers and shipowners are still looking for details on routing, mine risk, and practical transit conditions before treating the corridor as fully operational. Iran has indicated that vessels will need to follow coordinated routes, which suggests controlled passage rather than a clean restoration of normal maritime traffic.

There is also internal ambiguity in Iran’s messaging. Outlets tied to the IRGC criticized the foreign minister’s statement as incomplete, arguing that open commercial passage cannot be viewed in isolation while U.S. pressure on Iranian shipping continues. That matters because inconsistent signaling raises risk for carriers, insurers, and cargo owners trying to assess whether this is a stable operating environment or a temporary political pause.

For logistics and supply chain executives, the core point is straightforward: the immediate shock risk has eased, but corridor risk has not disappeared.

Hormuz is not just an oil story. It is a systemwide trade artery. Any disruption, or even the credible threat of disruption, can affect tanker availability, marine insurance costs, vessel scheduling, fuel assumptions, and downstream manufacturing economics. Friday’s drop in oil prices reflects relief. It does not yet reflect restored certainty.

The next question is whether commercial transits resume at scale and without incident. If they do, energy markets may continue to retrace. If routing restrictions, mine concerns, or military signaling reintroduce hesitation, volatility will return quickly.

The post Strait of Hormuz Reopens to Commercial Shipping, but Risk to Global Trade Remains appeared first on Logistics Viewpoints.


Why Enterprise AI Systems Fail: It’s Not RAG – It’s Context Control

Published on April 17, 2026

Enterprise AI systems are not failing because of poor retrieval or weak models. They are failing because they cannot control what actually enters the model’s context window.

The Pattern Is Becoming Familiar

Enterprise teams are following a familiar path with AI. They build a retrieval-augmented generation pipeline, connect internal data, tune prompts, and get early results that look promising. For a while, the system appears to work. Then performance starts to slip. Responses become less consistent. Important details fall out. The system loses continuity across turns. What looked sharp in a demo begins to feel unreliable in practice.

This is usually blamed on retrieval. In many cases, that diagnosis is wrong.

The Breakdown Comes After Retrieval

RAG solves an important problem. It helps a system find relevant documents and ground responses in enterprise data. But it does not determine what happens after retrieval. That is where many systems begin to fail.

In production, the model is not dealing with one clean document and one neatly phrased request. It is dealing with overlapping retrieved materials, accumulated conversation history, fixed token limits, and source content of uneven quality. At that point, the issue is no longer whether the system found something relevant. The issue is what actually makes it into the model, what gets left out, and how the remaining context is organized.

Most enterprise systems do not manage this step very well. They simply keep passing information forward until the context window starts to strain. When that happens, the model does not fail gracefully. It becomes selective in ways the enterprise did not intend. Relevant constraints disappear. Redundant information crowds out useful information. Continuity weakens. The answers can still sound polished, but they stop holding up operationally.

What This Looks Like on the Ground

This shows up quickly in supply chain settings. A planning assistant may retrieve the right demand and inventory signals, but lose a constraint that was discussed earlier in the interaction. The answer still looks reasonable, but it is no longer actionable. A procurement copilot may surface supplier information, yet carry forward redundant materials while excluding the one contract clause that mattered. A control tower assistant may retrieve prior exceptions, shipment updates, and current alerts, but present too much information with too little prioritization. In each case, retrieval technically worked. The system still failed.

The Missing Control Layer

The missing layer is the one between retrieval and prompting. There needs to be an explicit control step that determines what stays, what gets removed, what gets compressed, and how the available space is allocated. This is not prompt engineering, and it is not simply retrieval tuning. It is context control.

That control layer includes several practical functions. Retrieved materials often need to be re-ranked because not every document deserves equal weight. Conversation history needs to be filtered because not every prior interaction should remain active in the model’s working set. Relevant content often needs to be compressed so that it fits within system constraints without losing meaning. And above all, token budgets need to be treated as an architectural issue, not just a technical limitation.
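To make the re-ranking and redundancy points concrete, here is a minimal sketch of a selection step that sits between retrieval and prompting. It is an illustration under stated assumptions, not a production design: the function names, the word-overlap scoring, and the 0.8 duplicate threshold are all hypothetical, and a real system would more likely use embeddings or a trained re-ranker.

```python
from __future__ import annotations

import re


def tokens(text: str) -> set[str]:
    """Lowercased word set; a crude stand-in for a real tokenizer."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap between two word sets, from 0.0 (disjoint) to 1.0 (identical)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def select_context(query: str, docs: list[str], max_docs: int = 3,
                   dup_threshold: float = 0.8) -> list[str]:
    """Re-rank docs by word overlap with the query, skipping near-duplicates.

    The scoring rule and threshold are assumptions made for illustration.
    """
    q = tokens(query)
    ranked = sorted(docs, key=lambda d: jaccard(q, tokens(d)), reverse=True)
    kept: list[str] = []
    for doc in ranked:
        t = tokens(doc)
        if any(jaccard(t, tokens(k)) >= dup_threshold for k in kept):
            continue  # redundant with something already selected
        kept.append(doc)
        if len(kept) == max_docs:
            break
    return kept
```

The design point is that ranking and de-duplication happen before anything reaches the prompt, so a second copy of the same contract clause cannot take the slot of a different, useful document.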

Memory Usually Fails First

Memory is often where the problem becomes visible first. Many systems handle multi-turn interaction with a simple sliding window. They keep the last few turns and discard the rest. That sounds reasonable until an older but still important piece of context disappears while a newer but less useful interaction remains. Stronger systems do not rely on blunt recency alone. They apply weighted retention so that important context persists longer, low-value context fades, and relevance to the current task matters more than simple position in the conversation. Without that, continuity breaks down quickly.
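Weighted retention can be sketched in a few lines. The version below is a hedged illustration, not a reference implementation: the `Turn` structure, the `importance` field (assumed to be assigned upstream, for example by a classifier or a rule), the 0.9 decay factor, and the word-overlap relevance measure are all assumptions introduced for this example.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Turn:
    text: str
    importance: float  # 0.0 to 1.0, assumed to be assigned upstream (hypothetical)
    age: int           # turns elapsed since this one; 0 is the most recent


def retention_score(turn: Turn, query_words: set[str], decay: float = 0.9) -> float:
    """Importance fades with age; relevance to the current task is added on top."""
    words = set(turn.text.lower().split())
    relevance = len(words & query_words) / max(len(query_words), 1)
    recency = decay ** turn.age
    return turn.importance * recency + relevance


def retain(history: list[Turn], query: str, keep: int) -> list[Turn]:
    """Keep the `keep` highest-scoring turns, returned oldest first."""
    query_words = set(query.lower().split())
    top = sorted(history, key=lambda t: retention_score(t, query_words),
                 reverse=True)[:keep]
    return sorted(top, key=lambda t: t.age, reverse=True)
```

With a history of "dock 4 is closed until friday" (high importance, five turns old), "thanks, that helps" (low importance, one turn old), and "show inbound shipments" (current turn), a two-turn sliding window would drop the dock closure. Under the scoring above, `retain(history, "which dock should inbound shipments use", keep=2)` keeps the dock constraint and drops the acknowledgment instead.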

Token Limits Are Not a Side Issue

Token budgets are often treated as a background technical constraint. In practice, they shape system behavior. If priorities are not explicit, the system will make implicit tradeoffs under pressure. Some architectures handle this more effectively by reserving space in a disciplined order: first the system prompt, then filtered memory, then retrieved content compressed to fit what remains. That sounds like a small design choice, but it prevents a surprising number of failure modes.
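The reservation order described above (system prompt, then filtered memory, then retrieved content fitted to what remains) can be sketched as follows. This is a simplified illustration under stated assumptions: tokens are approximated by whitespace-separated words, truncation stands in for real compression, and `build_context` and its `memory_budget` parameter are hypothetical names, not part of any particular framework.

```python
from __future__ import annotations


def count_tokens(text: str) -> int:
    """Whitespace word count; a rough proxy for a real tokenizer."""
    return len(text.split())


def truncate(text: str, limit: int) -> str:
    """Keep at most `limit` words; a stand-in for real compression."""
    return " ".join(text.split()[:limit])


def build_context(system_prompt: str, memory: list[str], retrieved: list[str],
                  budget: int, memory_budget: int) -> list[str]:
    """Fill the context in priority order without exceeding `budget`.

    `memory` and `retrieved` are assumed to be pre-filtered and ordered
    from most to least important.
    """
    remaining = budget - count_tokens(system_prompt)
    if remaining < 0:
        raise ValueError("system prompt alone exceeds the token budget")
    parts = [system_prompt]

    # Second priority: memory items, kept whole, within their own cap.
    memory_cap = min(remaining, memory_budget)
    for item in memory:
        cost = count_tokens(item)
        if cost > memory_cap:
            continue  # does not fit; try the next, possibly shorter, item
        parts.append(item)
        memory_cap -= cost
        remaining -= cost

    # Last priority: retrieved content, shortened to fit whatever is left.
    for doc in retrieved:
        if remaining <= 0:
            break
        piece = truncate(doc, remaining)
        parts.append(piece)
        remaining -= count_tokens(piece)
    return parts
```

Because each tier draws from an explicit allowance, the tradeoffs are decided in code rather than left to whatever the model does when the window overflows.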

Why This Matters in Supply Chains

This matters more in supply chains than in many other domains because supply chain work is rarely a single-turn exercise. It is multi-step, multi-system, and time-dependent. AI systems must maintain continuity across decisions, exceptions, and changing conditions. That requires structured context, not just access to data. This aligns with the broader shift toward context-aware AI architectures in supply chains, where continuity and memory are foundational to performance.

In many environments, this failure mode is already present. It just has not been isolated yet. Teams see inconsistent outputs and assume the problem is the model, the prompt, or the retriever. Often the deeper issue is that the model is seeing the wrong mix of context.

This Problem Gets Bigger From Here

That issue will become more important, not less, as enterprise architectures evolve. Agent-based systems need shared context. Persistent memory layers increase the volume of available information. Graph-based reasoning expands the number of relationships a system may need to consider. All of that increases pressure on context selection. None of it removes the problem.

The Real Takeaway

The central point is straightforward. RAG gets the right documents. Prompting shapes the response. Context control determines whether the system works at all.

Most teams are still focused on the first two. In many enterprise deployments today, the third is already where systems are breaking.

The post Why Enterprise AI Systems Fail: It’s Not RAG – It’s Context Control appeared first on Logistics Viewpoints.

The Home Depot Buys SIMPL Automation to Speed Fulfillment and Tighten DC Performance

Strait of Hormuz Reopens to Commercial Shipping, but Risk to Global Trade Remains

Why Enterprise AI Systems Fail: It’s Not RAG – It’s Context Control

Walmart and the New Supply Chain Reality: AI, Automation, and Resilience

Ex-Asia ocean rates climb on GRIs, despite slowing demand – October 22, 2025 Update

13 Books Logistics And Supply Chain Experts Need To Read

Trending

-

Non classé1 an ago

Non classé1 an agoWalmart and the New Supply Chain Reality: AI, Automation, and Resilience

- Non classé6 mois ago

Ex-Asia ocean rates climb on GRIs, despite slowing demand – October 22, 2025 Update

- Non classé8 mois ago

13 Books Logistics And Supply Chain Experts Need To Read

- Non classé3 mois ago

Container Shipping Overcapacity & Rate Outlook 2026

- Non classé5 mois ago

Ocean rates climb – for now – on GRIs despite demand slump; Red Sea return coming soon? – November 11, 2025 Update

- Non classé2 mois ago

Ocean rates ease as LNY begins; US port call fees again? – February 17, 2026 Update

- Non classé1 an ago

Unlocking Digital Efficiency in Logistics – Data Standards and Integration

-

Non classé6 mois ago

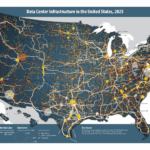

Non classé6 mois agoNavigating the Energy Demands of AI: How Data Center Growth Is Transforming Utility Planning and Power Infrastructure