The Critical Role of Provenance in Cybersecurity and Supply Chains

Published 11 months ago

The Power Inverter Kill Switch Story Underlines The Importance Of Provenance in Cybersecurity and the Supply Chain

Do you really know what your production assets contain?

If you’ve ever bought antiques, you’re probably familiar with the concept of provenance. I have relatives who own a dresser that George Washington gave to a family friend when he was a lieutenant in the colonial army. How do we know this? Because of the authenticated documentation that came with the dresser proving its origin. This is provenance: proving and documenting where something came from, what it contains, and the path it took before it wound up in your possession.

Heavy assets in industrial automation are a lot more complex than antiques, and the stakes are a lot higher, as we saw recently with the story about cellular-powered kill switches found in Chinese-manufactured power inverters used in solar and wind farms. In addition to being used around the world for renewable power applications, these inverters are also used in batteries, heat pumps, EV chargers, and other assets.

It’s typical for these products to have remote access capabilities, but these connections are normally handled through firewalls. You may have read the story about Chinese-manufactured cranes that have remote connectivity capabilities but are largely unsecured. Many end users were not even aware of these remote communication capabilities, or, if they were, the capabilities were improperly secured. If your assets come with documented features that present a potential cybersecurity risk to your enterprise, and you either don’t address that risk or aren’t aware of it, that is ultimately your responsibility, not the vendor’s.

The Problem of Rogue Components

It’s not always obvious what components an asset contains, be they hardware or software. The more complex the asset, the more complicated the issue becomes. In the case of the power inverters, the communication devices were undocumented, and asset owners did not even know they were there. The devices were found by a US-based team of experts whose job was to strip these assets down and identify their components. According to the Reuters article on the discovery, the “rogue components provide additional, undocumented communication channels that could allow firewalls to be circumvented remotely, with potentially catastrophic consequences.”

What is Provenance in Cybersecurity?

In the world of cybersecurity, provenance is more than just the source of origin. According to NIST, provenance is “The chronology of the origin, development, ownership, location, and changes to a system or system component and associated data. It may also include personnel and processes used to interact with or make modifications to the system, component, or associated data.” So it’s more than just where the product came from; it includes all the associated data about what the asset or “component” contains from both a hardware and software standpoint.

Large Power Transformers in a Storage Yard. Source: IEEE Spectrum

SBOMs: What’s in Your Software?

The concept of a software bill of materials (SBOM) has emerged as an important element of cybersecurity. In simple terms, an SBOM contains the details and supply chain relationships of the various components used in building software. Those who produce, purchase, and operate software use it to improve their understanding of what components are in their systems. This in turn has multiple benefits, most notably the potential to track known and newly emerged vulnerabilities and risks. This concept applies to all systems, including those used for manufacturing operations and control.
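
To make the vulnerability-tracking benefit concrete, the following is a minimal Python sketch of matching SBOM entries against an advisory feed. The `Component` structure, the component list, and the advisory identifiers are all hypothetical and greatly simplified; real SBOM formats carry far more detail, and real matching usually works on version ranges rather than exact versions.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Component:
    name: str
    version: str
    supplier: str

# Hypothetical SBOM for an illustrative controller firmware image.
sbom = [
    Component("openssl", "1.1.1k", "OpenSSL Project"),
    Component("busybox", "1.33.0", "BusyBox"),
    Component("modbus-stack", "2.4.1", "ExampleVendor"),
]

# Hypothetical advisory feed: (name, affected version) -> advisory id.
advisories = {
    ("openssl", "1.1.1k"): "EXAMPLE-2024-0001",
    ("zlib", "1.2.11"): "EXAMPLE-2024-0002",
}

def affected_components(sbom, advisories):
    """Return (component, advisory_id) pairs for SBOM entries named in the feed."""
    return [
        (c, advisories[(c.name, c.version)])
        for c in sbom
        if (c.name, c.version) in advisories
    ]

hits = affected_components(sbom, advisories)
for comp, advisory in hits:
    print(f"{comp.name} {comp.version} ({comp.supplier}): {advisory}")
```

Without the component inventory, the second advisory in the feed could not be ruled out and the first could not be confirmed; with it, both questions become a lookup.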

SBOMs are becoming increasingly mandated in new regulations across a wide range of industries. The White House’s 2021 Executive Order on Improving the Nation’s Cybersecurity mandated that federal agencies receive SBOMs for software they purchase. The EU’s Cyber Resilience Act (CRA) requires manufacturers of digital products sold in the EU to produce a top-level SBOM.

HBOMs: What’s in Your Hardware?

Unfortunately, SBOMs don’t do much to identify the various hardware components in an asset or system and where they come from. For that, you need an HBOM, or hardware bill of materials, which should provide a detailed inventory of the hardware components included in an asset or system. CISA publishes its own Hardware Bill of Materials Framework for Supply Chain Risk Management, which is available to review and download.

HBOMs are relevant to any hardware asset, from a DCS controller or a field device like a pressure transmitter all the way up to large transformers. The larger and more complex the asset is, the more important it is to have a complete HBOM and SBOM. Take the example of large power transformers (LPTs), which again are largely sourced from China, are often custom built, and contain many hardware and software components. Many times, we don’t even know what’s in these large assets until we completely tear them down. A Chinese power transformer was sent to Sandia National Laboratories (SNL) for inspection in 2020, but even those results are classified.
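
As a rough illustration of why a complete HBOM matters, the sketch below compares a vendor-declared HBOM against the parts identified in a physical teardown. The part identifiers, origin codes, and function names are hypothetical, chosen only to show the comparison; this is not a representation of the CISA framework's data model.

```python
# Hypothetical vendor-supplied HBOM: part identifier -> declared country of origin.
declared_hbom = {
    "PWR-STAGE-01": "DE",
    "CTRL-MCU-07": "TW",
    "ETH-PHY-02": "US",
}

# Hypothetical parts identified during a physical teardown of the asset.
teardown_parts = ["PWR-STAGE-01", "CTRL-MCU-07", "ETH-PHY-02", "CELL-MODEM-X"]

def undocumented_parts(declared, observed):
    """Return observed parts that the HBOM does not list, in the order seen."""
    return [part for part in observed if part not in declared]

def missing_parts(declared, observed):
    """Return declared parts that the teardown did not find, sorted by id."""
    return sorted(set(declared) - set(observed))

rogue = undocumented_parts(declared_hbom, teardown_parts)
print("Undocumented parts:", rogue)
print("Declared but not found:", missing_parts(declared_hbom, teardown_parts))
```

An undocumented communication module is exactly the kind of finding this comparison would surface, but only if there is a declared inventory to compare against.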

End Users Need to Take Supply Chain Cybersecurity Seriously

SBOMs and HBOMs are both part of the larger issue of supply chain cybersecurity. Compiling an accurate inventory of installed systems has long been considered one of the first steps in a cybersecurity program, but simply identifying such assets is no longer sufficient. Potential supply chain related risks can only be addressed if the provenance of all components in those assets is known. When assessing or procuring software systems or hardware, it is very important to ask the supplier to list the components in the product. This may take the form of a software or hardware bill of materials, but such a formal presentation may not be necessary. If the supplier is unwilling or unable to provide this information, then this should be considered when making buying choices.

Other aspects of supply chain cybersecurity include evaluating the cybersecurity posture of your software and service partners. The importance of this was shown in the SolarWinds attack. End users are increasingly reliant on their technology and service partners to keep things running, but if your partners have poor cyber resilience, it can and will directly affect your operations at some point.

The US National Institute of Standards and Technology (NIST) provides guidance for supply chain cybersecurity in the form of a special publication titled “Cybersecurity Supply Chain Risk Management Practices for Systems and Organizations.” This document describes how to identify, assess, and respond to cybersecurity risks throughout the supply chain at all levels of an organization. It offers key practices for organizations to adopt as they develop their capability to manage cybersecurity risks within and across their supply chains.

The post The Critical Role of Provenance in Cybersecurity and Supply Chains appeared first on Logistics Viewpoints.

Supply Chain Interoperability Is Becoming the Foundation for AI-Enabled Logistics

Published May 6, 2026

As AI moves from pilots to operational execution, the limiting factor is often not the model. It is whether enterprise systems, logistics partners, data layers, and execution workflows can interoperate in real time.

Supply chain interoperability used to be treated as an integration problem. Could the transportation management system exchange data with the warehouse management system? Could the ERP send orders to a supplier portal? Could a logistics provider transmit shipment status updates back to a customer through EDI?

Those questions still matter. But they no longer define the full challenge.

The next phase of supply chain technology is being shaped by AI-enabled execution, real-time logistics visibility, autonomous exception management, and cross-enterprise decision orchestration. In that environment, interoperability is no longer just about getting one system to send data to another. It is about whether the supply chain can operate as a connected decision network.

That distinction matters. A company can have modern applications, cloud platforms, visibility tools, and AI pilots, yet still be constrained by fragmented data, brittle interfaces, inconsistent master data, and slow operational handoffs. The result is a familiar pattern: better dashboards, more alerts, and more analytics, but not enough improvement in the speed or quality of execution.

AI does not eliminate that problem. In many cases, it exposes it.

From Systems Integration to Operational Interoperability

For years, supply chain integration was largely about connectivity. Companies invested in EDI, middleware, application programming interfaces, and enterprise integration platforms to move data among ERP, TMS, WMS, order management, procurement, and visibility systems.

That work created an important foundation. But connectivity and interoperability are not the same thing.

Connectivity means systems can exchange data. Interoperability means they can exchange data in ways that are timely, trusted, contextual, and operationally useful. A shipment update that arrives six hours late may be connected, but it is not very useful for dynamic exception management. A carrier status message that lacks standardized location, timestamp, or shipment reference data may technically move across systems, but it does not support reliable automation.
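
The difference can be sketched as a simple usability check on an incoming status message. This is a minimal illustration in Python; the required field names and the one-hour freshness threshold are assumptions made for the example, not an industry standard.

```python
from datetime import datetime, timedelta, timezone

REQUIRED_FIELDS = ("shipment_ref", "location", "timestamp")  # assumed minimum set
MAX_AGE = timedelta(hours=1)  # assumed freshness threshold for exception handling

def usability_issues(message, now):
    """List the reasons a connected message is not operationally useful."""
    issues = [f"missing {field}" for field in REQUIRED_FIELDS if not message.get(field)]
    timestamp = message.get("timestamp")
    if timestamp is not None and now - timestamp > MAX_AGE:
        issues.append("stale")
    return issues

now = datetime(2026, 5, 6, 12, 0, tzinfo=timezone.utc)
late = {"shipment_ref": "SHP-1", "location": "DAL", "timestamp": now - timedelta(hours=6)}
incomplete = {"shipment_ref": "SHP-2", "timestamp": now - timedelta(minutes=5)}
usable = {"shipment_ref": "SHP-3", "location": "MEM", "timestamp": now - timedelta(minutes=5)}

for name, msg in (("late", late), ("incomplete", incomplete), ("usable", usable)):
    print(name, usability_issues(msg, now))
```

All three messages were delivered, so all three count as connected. Only the last one would pass as interoperable under these assumed rules.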

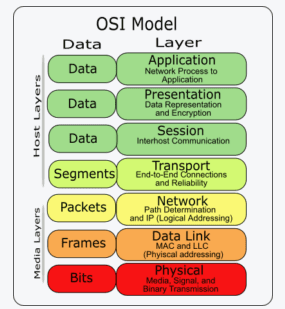

This is why interoperability has become a higher-order requirement. Modern supply chains need systems that can do more than pass messages. They need to preserve meaning across platforms, partners, workflows, and decision layers. Two earlier Logistics Viewpoints articles, “Supply Chain Interoperability: A Layered Framework for Integrating Modern Logistics Systems” and “The Next Phase of Supply Chain Interoperability: APIs, AI, and the Rise of Digital Supply Networks,” framed this issue through the OSI model. That framework remains useful, but the market has moved toward a more urgent question: can interoperable systems support AI-enabled execution?

A transportation delay, for example, is not just a transportation event. It may affect inventory availability, production scheduling, labor planning, customer commitments, and financial exposure. If those domains are not interoperable, the organization sees the issue in pieces. Transportation sees a late load. Inventory sees a possible stockout. Customer service sees a service risk. Finance may not see the cost implication until later.

The business problem is not simply that the data exists in separate systems. The problem is that the organization cannot reason across those systems fast enough.

The OSI Model Still Offers a Useful Lens

One helpful way to understand the problem is to borrow from the OSI model, the seven-layer networking framework originally designed to explain how computer systems communicate.

The OSI model was not created for logistics. But as a metaphor, it remains useful because it reminds supply chain leaders that interoperability is layered. Failure at one layer can undermine performance at every layer above it.

At the physical layer, supply chains depend on trucks, vessels, containers, pallets, warehouses, conveyors, sensors, robots, and handheld devices. If assets cannot generate reliable operational signals, the digital layer begins with incomplete visibility.

At the local communication layer, facilities rely on RFID, scanners, machine controls, warehouse automation systems, yard systems, and IoT devices. If these technologies cannot communicate consistently inside a warehouse, plant, port, or distribution center, local execution becomes fragmented.

At the network layer, information must move across suppliers, manufacturers, carriers, logistics service providers, brokers, ports, customs agencies, and customers. This is where APIs, EDI, event streams, and logistics networks become critical.

At the transport and session layers, the concern shifts from data movement to reliability and coordination. Did the message arrive? Was it complete? Is the receiving system able to reconcile it with the right order, shipment, customer, SKU, or inventory position? Can systems maintain continuity across a long-running operational process?

At the presentation layer, data standardization becomes essential. One system’s “delivery appointment” may not match another system’s “planned arrival.” Location names, units of measure, shipment identifiers, product hierarchies, and exception codes may vary across systems. Without translation and normalization, automation breaks down.
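
A minimal Python sketch of that translation step follows. The field aliases, record layouts, and canonical names such as `planned_arrival` and `weight_kg` are hypothetical; a real canonical model would be agreed among trading partners rather than hard-coded.

```python
# Hypothetical mapping from system-specific field names to canonical names.
FIELD_ALIASES = {
    "delivery_appointment": "planned_arrival",
    "eta": "planned_arrival",
    "shipment_no": "shipment_id",
}
LB_TO_KG = 0.45359237  # exact definition of the international pound

def normalize(record):
    """Rename aliased fields and express weight in kilograms."""
    out = {FIELD_ALIASES.get(key, key): value for key, value in record.items()}
    unit = out.pop("weight_unit", "kg").lower()
    if "weight" in out:
        weight = out.pop("weight")
        if unit == "lb":
            weight = weight * LB_TO_KG
        elif unit != "kg":
            raise ValueError(f"unknown weight unit: {unit}")
        out["weight_kg"] = round(weight, 3)
    return out

# The same shipment as two hypothetical systems might describe it.
tms_record = {"shipment_no": "SHP-1", "delivery_appointment": "2026-05-07T09:00Z",
              "weight": 1000, "weight_unit": "LB"}
wms_record = {"shipment_id": "SHP-1", "planned_arrival": "2026-05-07T09:00Z",
              "weight": 453.592, "weight_unit": "kg"}

print(normalize(tms_record))
print(normalize(tms_record) == normalize(wms_record))
```

Once both records are expressed in the same vocabulary and units, downstream automation can compare them directly instead of relying on a person to know that the two field names mean the same thing.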

At the application layer, users interact with portals, dashboards, planning workbenches, supplier platforms, control towers, and AI assistants. If the underlying layers are inconsistent, the application layer becomes a polished interface over fragmented reality.

This is where many supply chain technology programs stall. The user-facing system improves, but the underlying interoperability problem remains unresolved.

Why AI Raises the Stakes

AI changes the interoperability discussion because AI depends on context.

Traditional supply chain applications can often tolerate imperfect integration. A planner can interpret missing fields, reconcile conflicting records, call a carrier, or manually override a planning recommendation. That is inefficient, but it is workable.

AI-enabled systems have less tolerance for ambiguity. If an AI system is expected to recommend a transportation reroute, adjust inventory policy, escalate a customer risk, or trigger an exception workflow, it must understand the operational context with precision.

That requires interoperable data across multiple domains.

A shipment agent may need to know where a load is, whether the delay is material, which orders are affected, what inventory is available at alternate nodes, which customers have service-level commitments, which carriers have capacity, and what cost or margin tradeoffs are acceptable. This cannot be solved by a single model. It requires a connected data and process architecture.

This is why the move from AI pilots to AI execution is so difficult. A pilot can be built around a narrow dataset and a bounded use case. Operational AI must function across messy enterprise systems, partner networks, exception workflows, security rules, and governance requirements. This is also the architectural argument developed in “AI in the Supply Chain: Architecting the Future of Logistics with A2A, MCP, and Graph-Enhanced Reasoning,” which frames AI not as a bolt-on feature but as a connected intelligence layer across modern logistics systems.

The model may be impressive. The deployment may still fail if the interoperability layer is weak.

APIs, EDI, and Event Streams Each Have a Role

The future is not simply “APIs replace EDI.” That is too simplistic.

EDI remains deeply embedded in supply chain operations, especially in order management, transportation tendering, invoicing, advance shipment notices, and retail compliance. It is reliable, standardized in many contexts, and widely adopted across trading partners.

But EDI is often batch-oriented and rigid. It was designed for structured transaction exchange, not continuous operational sensing or real-time decision orchestration.

APIs add flexibility. They allow systems to request or update information in near real time, supporting more responsive workflows across TMS, WMS, ERP, supplier portals, and visibility platforms. APIs are especially important when applications need to exchange dynamic information, such as shipment status, carrier capacity, inventory availability, or order changes.

Event streams add another layer. In an event-driven architecture, systems publish and consume operational events as they occur. A shipment is delayed. A dock appointment changes. A container clears customs. A temperature excursion occurs. A forecast changes. These events can trigger downstream workflows, analytics, alerts, or AI recommendations.
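
The publish-and-consume pattern can be sketched with a small in-process event bus. This Python example is illustrative only: the `EventBus` class, the event type names, and the handlers are hypothetical, and a production deployment would typically use a dedicated message broker or streaming platform rather than an in-memory dictionary.

```python
from collections import defaultdict

class EventBus:
    """Minimal in-process publish/subscribe bus (illustrative only)."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, event_type, handler):
        self._handlers[event_type].append(handler)

    def publish(self, event_type, payload):
        # Call every subscriber for this event type and collect their results.
        return [handler(payload) for handler in self._handlers.get(event_type, [])]

bus = EventBus()
bus.subscribe("shipment.delayed",
              lambda e: f"alert planner: {e['shipment_id']} late {e['hours']}h")
bus.subscribe("shipment.delayed",
              lambda e: f"re-check inventory for {e['shipment_id']}")
bus.subscribe("temperature.excursion",
              lambda e: f"quarantine {e['shipment_id']}")

actions = bus.publish("shipment.delayed", {"shipment_id": "SHP-1", "hours": 6})
for action in actions:
    print(action)
```

The publisher does not need to know who is listening. New consumers, including analytics or AI components, can subscribe later without changing the system that emits the event.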

For AI-enabled logistics, event-driven interoperability is especially important. AI systems need current signals. They also need to understand which events matter, how they relate to other events, and what actions should follow.

The architecture is therefore becoming more layered. EDI may continue to support structured transaction exchange. APIs may support real-time system-to-system interaction. Event streams may support continuous operational awareness. AI agents may sit above these layers, interpreting events, retrieving context, and recommending or initiating action.

Interoperability Is Also a Data Governance Problem

Many supply chain leaders still underestimate the governance dimension. Interoperability is not only about interfaces. It is also about shared meaning.

A supplier record must be consistent across procurement, planning, finance, risk management, and logistics. A product identifier must connect the commercial SKU, manufacturing item, warehouse item, and compliance classification. A location must be defined consistently across order management, transportation, inventory, and trade systems.

Without that foundation, AI systems will retrieve partial or conflicting context.

This is especially important for advanced architectures such as retrieval-augmented generation and graph-based reasoning. RAG can help AI systems retrieve relevant documents, policies, contracts, and operating procedures. Graph RAG can help AI reason across relationships among suppliers, products, shipments, facilities, customers, and risks. But these capabilities depend on the quality of the underlying data model.

A graph is only useful if the entities are resolved correctly. A retrieval layer is only reliable if the knowledge base is current, governed, and permissioned. An AI assistant is only trustworthy if it can distinguish between outdated policy, draft guidance, and approved operating procedure.
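
One way to picture a governed, permissioned retrieval layer is as a filter applied before any document reaches the AI assistant. The sketch below is a simplified Python illustration; the `Document` fields, status values, and role names are assumptions for the example, not a description of any particular RAG product.

```python
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class Document:
    title: str
    status: str        # assumed lifecycle values: "approved", "draft", "retired"
    effective: date    # date the document takes effect
    roles: frozenset   # roles permitted to read the document

def retrievable(docs, role, today):
    """Keep only approved, in-effect documents the role is permitted to read."""
    return [
        d for d in docs
        if d.status == "approved" and d.effective <= today and role in d.roles
    ]

docs = [
    Document("Carrier escalation SOP v3", "approved", date(2026, 1, 15),
             frozenset({"planner", "agent"})),
    Document("Carrier escalation SOP v4", "draft", date(2026, 6, 1),
             frozenset({"planner"})),
    Document("Carrier escalation SOP v2", "retired", date(2024, 3, 1),
             frozenset({"planner", "agent"})),
    Document("Customer margin policy", "approved", date(2025, 9, 1),
             frozenset({"finance"})),
]

visible = retrievable(docs, "agent", date(2026, 5, 6))
print([d.title for d in visible])
```

The filter is trivial; the hard part is the governance that keeps the status, effective date, and permissions accurate in the first place.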

In other words, AI does not remove the need for disciplined data management. It raises the return on getting it right.

This is where the second ARC Advisory Group white paper, “AI in the Supply Chain: From Architecture to Execution,” becomes relevant. The next challenge is not simply designing AI architectures, but connecting them to operational workflows, owners, thresholds, escalation paths, and measurable execution outcomes.

The New Interoperability Test: Can the System Act?

The traditional test for interoperability was whether systems could exchange data.

The new test is whether the enterprise can act on that data quickly, consistently, and intelligently.

Consider a late inbound shipment. In a minimally connected environment, the carrier sends a status update. Someone sees the delay. A planner checks inventory. A customer service representative may be notified. A transportation manager may look for alternatives. The process is slow and human-mediated.

In a more interoperable environment, the delay becomes an operational event. The system links it to affected purchase orders, inventory positions, production schedules, customer orders, and service commitments. It calculates whether the delay matters. It identifies mitigation options. It may recommend expediting, rebalancing inventory, substituting supply, changing delivery commitments, or doing nothing because the risk is immaterial.
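
The "does the delay matter?" step can be sketched as a small calculation once the delay is linked to inventory and demand data. In this Python illustration, the SKU names, quantities, and the simple rule (projected demand during the delay versus stock on hand) are all assumptions; a real assessment would also weigh service commitments, alternate supply, and cost.

```python
def assess_delay(shipment, on_hand, daily_demand, delay_days):
    """For each SKU on the delayed shipment, estimate the shortfall the delay creates."""
    results = {}
    for sku in shipment["skus"]:
        needed = daily_demand[sku] * delay_days
        shortfall = max(0, needed - on_hand[sku])
        results[sku] = {
            "shortfall": shortfall,
            "action": "mitigate" if shortfall > 0 else "no action",
        }
    return results

# Hypothetical data linked to the delayed shipment.
shipment = {"id": "SHP-1", "skus": ["SKU-A", "SKU-B"]}
on_hand = {"SKU-A": 100, "SKU-B": 500}
daily_demand = {"SKU-A": 60, "SKU-B": 50}

impact = assess_delay(shipment, on_hand, daily_demand, delay_days=3)
for sku, result in impact.items():
    print(sku, result)
```

Here the same three-day delay is material for one SKU and immaterial for the other, which is the distinction a status update alone cannot make.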

In an AI-enabled environment, that workflow can become increasingly autonomous. Specialized agents can monitor transportation, inventory, procurement, and customer impact. They can exchange context, evaluate tradeoffs, and escalate only when human judgment is required.

But that future depends on interoperability. Without it, AI remains trapped in functional silos.

Implications for Technology Suppliers

For technology suppliers, interoperability is becoming a competitive differentiator.

Vendors can no longer rely only on application depth within a single functional domain. A strong TMS, WMS, planning platform, or visibility solution must also fit into a broader execution architecture. Buyers increasingly want to know how a system connects, how it handles data semantics, how it supports event-driven workflows, and how it exposes context to analytics and AI layers.

This creates pressure on suppliers to support open APIs, robust integration frameworks, standardized data models, and partner ecosystems. It also raises the importance of explainability and auditability. As AI capabilities are embedded into supply chain applications, customers will need to understand not only what a system recommends, but what data, assumptions, and business rules shaped the recommendation.

The suppliers that win in this environment will not necessarily be those with the most impressive AI demo. They will be those that can operationalize AI inside the real architecture of enterprise supply chains.

That means connecting to legacy systems, preserving context, supporting governance, and enabling action across planning and execution workflows.

Implications for Enterprise Buyers

For enterprise buyers, the lesson is equally clear. AI strategy cannot be separated from interoperability strategy.

Before investing heavily in autonomous planning, AI-enabled control towers, intelligent transportation orchestration, or agentic workflows, companies should evaluate whether their data and systems can support those ambitions.

Several questions matter:

Can core entities such as products, suppliers, locations, orders, shipments, carriers, and customers be reconciled across systems?

Are critical operational events available in near real time?

Do systems share consistent definitions for status, exception severity, inventory availability, and service risk?

Can workflows cross functional boundaries, or do they still depend on email, spreadsheets, and manual escalation?

Is there a governed knowledge layer for policies, contracts, operating procedures, and compliance rules?

Can AI recommendations be traced back to source data and business logic?

These questions are less glamorous than AI strategy decks. But they are more predictive of whether AI will work in production.

From Digital Supply Chains to Decision Networks

The broader shift is from digital supply chains to decision networks.

A digital supply chain exchanges information electronically. A decision network uses interoperable data, applications, workflows, and AI systems to coordinate action across the enterprise and its partners.

That is the direction the market is moving. Visibility platforms are becoming more execution-aware. Planning systems are becoming more responsive to real-time signals. Transportation and warehouse systems are becoming more automated. AI assistants are being embedded into enterprise workflows. Supplier networks are becoming richer sources of operational intelligence.

The connective tissue among all of these developments is interoperability.

Without interoperability, each system improves locally. With interoperability, the network improves structurally.

Conclusion: Interoperability Is Now Strategic Infrastructure

Supply chain interoperability is no longer a back-office IT concern. It is becoming strategic infrastructure for AI-enabled logistics.

The companies that make progress will not be those that simply add AI features to disconnected systems. They will be those that build the digital foundations required for intelligent execution: clean data, shared semantics, real-time event flows, governed knowledge layers, open interfaces, and workflows that cross functional boundaries.

The OSI model remains useful because it reminds us that interoperability is layered. Physical assets, local devices, networks, data standards, system sessions, applications, and users all have to work together. But the business issue has moved beyond integration architecture.

The real question is whether the supply chain can sense, understand, decide, and act as a connected system.

That is the foundation for AI-enabled logistics. And for many organizations, it may be the most important technology work still ahead.

The post Supply Chain Interoperability Is Becoming the Foundation for AI-Enabled Logistics appeared first on Logistics Viewpoints.

Supply Chain AI Enters the Execution Era

The next phase of supply chain AI will be defined less by technical capability and more by measurable improvements in decision speed, service, inventory, resilience, and execution performance.

For the past several years, the supply chain AI conversation has focused primarily on capability. Could AI improve forecasting accuracy? Could it detect disruptions earlier? Could it summarize operational data, support planners and dispatchers, generate recommendations, coordinate agents, or retrieve institutional knowledge?

Those questions mattered because enterprises first needed to determine whether AI systems were technically viable inside complex supply chain environments. That phase is now ending. The market is moving into a far more demanding stage of adoption: execution.

Supply chain leaders are shifting from asking, “What can AI do?” to asking, “What operating outcomes can AI improve?” That distinction changes the conversation. Supply chains are not abstract information systems. They are physical operating networks governed by transportation capacity, inventory exposure, labor constraints, sourcing risk, customer commitments, service performance, and financial tradeoffs.

A transportation decision affects cost and delivery reliability. An inventory decision affects working capital and customer availability. A sourcing decision affects resilience and continuity. A fulfillment decision affects customer trust and operational stability. This is where supply chain AI becomes materially more difficult. Generating insight is no longer the primary challenge. Improving execution is.

The End of the Demonstration Phase

The first generation of enterprise AI deployments focused heavily on proving technical competence. Vendors demonstrated copilots that could summarize reports, answer operational questions, retrieve documents, generate recommendations, or automate portions of workflows. Visibility platforms introduced predictive alerts. Planning systems layered AI forecasting into existing environments. Transportation platforms added disruption prediction and recommendation engines.

Many of these advances were legitimate and important. But proving capability is not the same as improving operations.

An AI system may identify a disruption faster than a human planner. A visibility platform may detect inventory risk earlier. A generative AI assistant may recommend a transportation adjustment in seconds. None of those capabilities create meaningful value unless the organization can operationalize the response.

This is where many enterprise AI initiatives begin to stall. The model performs well, the pilot succeeds, and the demonstration generates enthusiasm. But the operating workflow itself does not materially change. Recommendations remain disconnected from execution systems. Escalations still move through email chains, spreadsheets, meetings, and fragmented approval structures. Decision ownership remains unclear across functions. Human teams continue coordinating sequentially instead of simultaneously.

The enterprise becomes more intelligent without becoming materially faster.

The Real Problem Is Decision Latency

Most large supply chains are not suffering from a lack of operational signals. Enterprises already possess dashboards, visibility layers, transportation data, planning systems, analytics platforms, and exception reporting environments capable of surfacing operational issues quickly. The larger issue is decision latency.

Decision latency is the gap between recognizing a changing condition and executing a coordinated operational response. That gap is becoming one of the defining weaknesses in modern supply chain operations.

Consider an inbound shipment delay on a high-volume SKU. The transportation team may see the delay first, but the inventory team may not immediately adjust allocation, the fulfillment team may continue promising orders against expected stock, and customer service may not receive updated commitment guidance until much later. By the time the organization responds, the issue has moved from a transportation exception to an inventory exposure and then to a customer service problem. That is decision latency in operational form.
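
Decision latency in this sense can be measured directly if each function's response is timestamped. The Python sketch below uses hypothetical timestamps for the scenario above; the function names and the choice to define overall latency as the time until the last function responds are assumptions made for illustration.

```python
from datetime import datetime, timedelta

# Hypothetical timeline for the delayed inbound shipment.
detected = datetime(2026, 5, 6, 8, 0)
responses = {
    "transportation": datetime(2026, 5, 6, 8, 15),
    "inventory": datetime(2026, 5, 6, 11, 30),
    "fulfillment": datetime(2026, 5, 6, 14, 0),
    "customer_service": datetime(2026, 5, 7, 9, 0),
}

def latency_by_function(detected, responses):
    """Hours between signal detection and each function's response."""
    return {
        name: (responded - detected).total_seconds() / 3600
        for name, responded in responses.items()
    }

def decision_latency(detected, responses):
    """Time until the slowest function has responded (assumed definition)."""
    return max(responses.values()) - detected

per_function = latency_by_function(detected, responses)
overall = decision_latency(detected, responses)
print(per_function)
print("Coordinated response after:", overall)
```

In this example the signal was visible within minutes, yet the coordinated response took more than a day, because the slowest handoff sets the overall latency.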

A transportation disruption may be visible immediately, but inventory teams, logistics teams, procurement teams, and fulfillment operations still respond through fragmented escalation paths. A sourcing issue may be identified quickly, but operational coordination across the enterprise may take hours or days. A warehouse constraint may appear early, but fulfillment reprioritization and customer communication remain delayed.

Every handoff creates friction. Every silo slows response speed. Every disconnected workflow increases operational latency. In volatile supply chain environments, those delays become expensive quickly.

A delayed transportation response increases service risk. A delayed sourcing adjustment increases disruption exposure. A delayed inventory decision affects both working capital and customer availability. A delayed fulfillment response creates cascading operational consequences across the network.

This is why the market conversation is shifting away from demonstrations and toward execution architecture. The goal is no longer simply generating intelligence. The goal is compressing the time between signal and coordinated action.

Why Execution Becomes the Next Competitive Divide

The next phase of supply chain AI will separate the market more aggressively. Systems that generate insight will become common. Systems that operationalize intelligence across enterprise workflows will create disproportionate value.

That distinction is critical. A disruption alert matters only if it improves response quality. A forecast matters only if it improves inventory positioning or replenishment behavior. A recommendation matters only if it reaches the right workflow, owner, threshold, and execution system in time to change the outcome.

This is why supply chain AI increasingly depends on workflow integration, contextual reasoning, execution pathways, governance structures, and coordinated decision-making. The market is beginning to recognize that intelligence alone is insufficient. Operational coordination is becoming the new battleground.

The enterprises that outperform over the next decade will likely not be the organizations with the largest models or the most sophisticated demonstrations. They will be the organizations that reduce decision latency, improve coordination speed, and operationalize intelligence across planning, sourcing, transportation, fulfillment, and inventory management simultaneously.

That is the execution era now emerging across the supply chain industry. It represents a much larger shift than simply adding AI features to existing software platforms.

The post Supply Chain AI Enters the Execution Era appeared first on Logistics Viewpoints.

Nuclear Power Is Becoming Part of the AI Infrastructure Supply Chain

Published May 6, 2026

AI data centers are turning electricity into a strategic supply chain constraint. Nuclear power is moving back into the infrastructure conversation, not as an abstract energy policy issue, but as a potential source of firm, large-scale power for data center growth, industrial electrification, and grid resilience.

AI Is Forcing a New Power Conversation

The AI buildout is making electricity a limiting factor.

For years, data center expansion was discussed mostly in terms of land, fiber, servers, chips, cooling, and cloud capacity. Power mattered, but it was often treated as something that could be solved through grid interconnection, renewable power purchase agreements, or utility planning.

That assumption is now under pressure.

AI workloads require dense, continuous, high-reliability power. A hyperscale AI campus is not just another commercial load. It can resemble a large industrial facility in its demand profile. Meta’s El Paso AI data center provides a useful marker. Meta has increased its planned investment in the site to more than $10 billion and is targeting roughly 1 gigawatt of capacity ahead of the facility’s projected 2028 opening.

That is the practical backdrop for the renewed nuclear discussion. AI is not only a software race. It is becoming an energy infrastructure race.

Why Nuclear Is Back on the Table

Nuclear power has several characteristics that matter to AI infrastructure: high capacity, low operating emissions, long asset life, and round-the-clock output. Those attributes are increasingly valuable in a grid environment strained by data centers, industrial electrification, manufacturing reshoring, and broader electricity demand growth.

The recent interest is not limited to traditional large reactors. Advanced nuclear designs, including small modular reactors and microreactors, are being positioned as possible sources of firm power for industrial sites, remote locations, and dedicated data center loads.

The important point is not that nuclear will quickly solve the AI power problem. It will not. Licensing, fuel supply, component manufacturing, construction execution, financing, and public acceptance remain real constraints.

The important point is that nuclear is moving from the edges of the discussion into the infrastructure planning process.

DOE and NRC Approvals Are Now Central to the Story

The U.S. Department of Energy and the Nuclear Regulatory Commission are central to whether advanced nuclear moves from concept to deployment.

The DOE has created a Reactor Pilot Program intended to accelerate advanced reactor demonstrations. The program’s stated goal is to use DOE demonstration authority to support at least three advanced nuclear reactor concepts located outside the national laboratories in reaching criticality by July 4, 2026.

That does not mean commercial deployment has been solved. DOE demonstration authority can accelerate research, development, and prototype deployment. It is not the same as broad commercial operation under NRC licensing. But it does create a faster pathway for selected advanced reactor developers to move from concept to physical systems.

The NRC is also evolving its licensing framework. Its Part 53 rulemaking is designed to create a risk-informed, technology-inclusive pathway for advanced reactors. This is intended to make licensing more adaptable to new reactor designs while maintaining safety oversight.

That combination of DOE demonstration acceleration and NRC licensing reform is what makes advanced nuclear more relevant to current infrastructure planning.

TerraPower Shows the New Approval Cycle in Practice

TerraPower’s Natrium project in Kemmerer, Wyoming, is the clearest current example of this shift.

The NRC approved the construction permit for TerraPower’s planned Natrium reactor in March 2026. This is a meaningful milestone. It represents movement from concept to physical buildout. But it is not the final step. TerraPower still requires a separate operating license before the reactor can enter commercial service.

The project also illustrates one of the deeper supply chain issues: fuel. TerraPower’s design is expected to use high-assay low-enriched uranium (HALEU), a category where domestic supply is still developing. That introduces another layer of dependency into the nuclear supply chain.

This is the broader point. Nuclear is not a single technology problem. It is a multi-layer supply chain problem involving licensing, fuel, components, construction, and grid integration.

The Nuclear Supply Chain Is Narrow and Specialized

The nuclear buildout cannot be scaled like software.

A reactor project requires nuclear-grade components, qualified suppliers, specialized fabrication, safety documentation, heavy construction, long-lead electrical systems, regulatory inspections, project controls, and a trained workforce.

Key bottlenecks include:

Nuclear-grade valves, pumps, sensors, and control systems

Reactor vessels and heavy fabricated components

HALEU fuel availability for certain advanced designs

Grid interconnection and transmission capacity

Nuclear-certified engineering and construction labor

Site permitting and local approvals

Long-duration financing

Safety case development and regulatory review

These constraints do not disappear because AI demand is growing.

Data Centers Are Changing Utility Planning

Utilities are already adjusting capital plans around data center growth.

American Electric Power raised its five-year capital investment plan to $78 billion, citing surging electricity demand from data centers. The company also reported that most of its expected incremental load through 2030 is tied to data center development.

That is a significant shift. Data centers are no longer marginal loads. They are becoming central drivers of utility investment.

Nuclear fits into this conversation because AI data centers require firm power, not just annual renewable offsets. The constraint is not only total energy. It is reliable energy at specific times.
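The gap between annual offsets and firm power can be illustrated with a small calculation. The sketch below is illustrative only: the load, the solar-like supply shape, and the function names are all assumptions made up for the example, not figures from any utility or data center operator. It compares a supply that matches a constant load on total energy with how much of that load it actually covers hour by hour, assuming no storage.

```python
# Illustrative sketch: every number here is a made-up assumption.
HOURS = 24

# Constant data center load in MW for each hour of one day.
load_mw = [100.0] * HOURS

# Hypothetical solar-like supply shape: zero at night, peaking at midday.
shape = [0, 0, 0, 0, 0, 0, 1, 2, 3, 4, 5, 6,
         6, 5, 4, 3, 2, 1, 0, 0, 0, 0, 0, 0]

# Scale the shape so total daily supply energy equals total daily load energy.
scale = sum(load_mw) / sum(shape)
supply_mw = [s * scale for s in shape]


def volumetric_match(load, supply):
    """Share of total load energy covered by total supply energy, capped at 1."""
    return min(sum(supply) / sum(load), 1.0)


def hourly_match(load, supply):
    """Share of load energy covered hour by hour, with no storage or shifting."""
    covered = sum(min(l, s) for l, s in zip(load, supply))
    return covered / sum(load)


if __name__ == "__main__":
    print(f"volumetric match: {volumetric_match(load_mw, supply_mw):.0%}")
    print(f"hourly match:     {hourly_match(load_mw, supply_mw):.0%}")
    # A flat, firm 100 MW supply covers the same load in every hour.
    print(f"firm supply:      {hourly_match(load_mw, [100.0] * HOURS):.0%}")
```

Under these assumptions the variable supply fully offsets the load on an energy basis but covers only about 46% of it hour by hour, while the flat supply covers all of it. That is the sense in which the constraint is reliable energy at specific times rather than total energy.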

What This Means for Supply Chain Leaders

The direct takeaway is not that every company needs a nuclear strategy.

The practical takeaway is that energy availability is becoming a core network design variable.

Manufacturing plants, cold chain facilities, semiconductor fabs, battery plants, automated warehouses, and data centers are all competing for reliable electricity, electrical equipment, construction labor, and grid capacity.

In some regions, the question will not be whether a site has good transportation access. It will be whether the site can secure sufficient power within a required timeframe.

Supply chain network design will increasingly need to include:

Power availability

Grid reliability

Interconnection timelines

Regional utility investment plans

Exposure to data center load growth

Backup generation strategy

Energy cost volatility

This is a structural shift in how supply chains are planned.
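One way to picture power as a network design variable is as a gating check applied before the usual cost and transportation comparison. The following is a minimal sketch under stated assumptions: the `CandidateSite` fields, the `screen_sites` function, the thresholds, and the sample sites are all hypothetical and cover only a few of the factors listed above.

```python
from dataclasses import dataclass


@dataclass
class CandidateSite:
    # All fields are hypothetical illustrations, not an industry standard.
    name: str
    firm_power_mw: float         # power the utility can commit to the site
    interconnection_months: int  # expected time until the site is energized
    annual_outage_hours: float   # rough proxy for grid reliability
    has_backup_generation: bool


def screen_sites(sites, required_mw, deadline_months, max_outage_hours):
    """Return names of sites that pass the power gate, fastest interconnection first.

    A site passes if it has enough firm power, can be energized by the deadline,
    and either meets the outage limit or has backup generation.
    """
    passing = [
        s for s in sites
        if s.firm_power_mw >= required_mw
        and s.interconnection_months <= deadline_months
        and (s.annual_outage_hours <= max_outage_hours or s.has_backup_generation)
    ]
    return [s.name for s in sorted(passing, key=lambda s: s.interconnection_months)]


if __name__ == "__main__":
    # Made-up candidate sites for illustration.
    candidates = [
        CandidateSite("Site A", 40.0, 18, 2.0, False),
        CandidateSite("Site B", 60.0, 48, 1.0, True),
        CandidateSite("Site C", 55.0, 30, 9.0, True),
        CandidateSite("Site D", 20.0, 12, 0.5, False),
    ]
    print(screen_sites(candidates, required_mw=35.0,
                       deadline_months=36, max_outage_hours=4.0))
```

A hard gate is used here instead of a weighted score because the text frames power as a question of whether a site can secure sufficient supply within a required timeframe. Sites that pass the gate would still be compared on cost, transportation access, and the remaining factors.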

Analyst Takeaway

The nuclear conversation is no longer separate from the AI infrastructure conversation.

DOE demonstration authority, the Reactor Pilot Program, NRC Part 53, TerraPower’s construction permit, and ongoing work on HALEU fuel supply all point in the same direction: the U.S. is trying to reduce friction around advanced nuclear development.

This does not eliminate execution risk. Nuclear remains capital-intensive, regulated, and complex. But AI has changed the demand side of the equation. The need for large-scale, reliable power is now acute enough that nuclear is being reconsidered as part of the industrial infrastructure stack.

The supply chain of AI begins with power. Nuclear may become one of the ways that power is secured.

The post Nuclear Power Is Becoming Part of the AI Infrastructure Supply Chain appeared first on Logistics Viewpoints.
