
Supply Chain AI: 25 Current Use Cases (and a Handful of Future Ones)

Published 2 years ago

When it came out, ChatGPT seemed like magic. It has led supply chain vendors to discuss how they currently use artificial intelligence. Further, virtually every supplier of supply chain solutions is eager to explain the ongoing investments they are making in artificial intelligence.

Any device that can perceive its environment and can take actions that maximize its chance of success at some goal is engaged in some form of artificial intelligence. AI is not a new technology in the supply chain realm; it has been used in some cases for decades. More recently, many other cases have emerged.

Optimization is used in supply planning, factory scheduling, supply chain design, and transportation planning. In a broad sense, optimization refers to creating plans that help companies achieve service levels and other goals at the lowest cost. In mathematical terms, optimization is a mixed-integer or linear programming approach to finding the best combination of warehouses, factories, transportation flows, and other supply chain resources under real-world constraints.
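As a rough illustration of "finding the best combination of warehouses," the sketch below picks which warehouses to open so that fixed plus shipping cost is lowest. All names and numbers are hypothetical, and brute-force enumeration stands in for the mixed-integer solver a real planning tool would use; it is only practical for a handful of sites.

```python
from itertools import combinations

# Hypothetical data: fixed cost to open each warehouse and per-unit
# shipping cost from each warehouse to each customer region.
FIXED_COST = {"W1": 100, "W2": 120, "W3": 90}
SHIP_COST = {
    "W1": {"north": 2, "south": 6, "west": 5},
    "W2": {"north": 5, "south": 2, "west": 4},
    "W3": {"north": 6, "south": 5, "west": 1},
}
DEMAND = {"north": 30, "south": 20, "west": 25}


def plan_cost(open_sites):
    """Total cost when each region is served by its cheapest open warehouse."""
    fixed = sum(FIXED_COST[w] for w in open_sites)
    shipping = sum(
        units * min(SHIP_COST[w][region] for w in open_sites)
        for region, units in DEMAND.items()
    )
    return fixed + shipping


def best_plan():
    """Enumerate every non-empty subset of warehouses and keep the cheapest."""
    candidates = (
        subset
        for size in range(1, len(FIXED_COST) + 1)
        for subset in combinations(sorted(FIXED_COST), size)
    )
    return min(candidates, key=plan_cost)


if __name__ == "__main__":
    sites = best_plan()
    print(sites, plan_cost(sites))  # ('W1', 'W3') 375
```

With these made-up figures, opening W1 and W3 is cheapest: the extra fixed cost of a second site is more than repaid by shorter shipping lanes, while a third site is not.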

Machine Learning occurs when a machine takes the output, observes its accuracy, and updates its model so that better outputs will occur. Demand planning engines have natural feedback loops that allow the forecast engine to learn. The forecast can be compared to what actually shipped or sold.
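The feedback loop described here can be sketched with simple exponential smoothing, one of the most basic error-correcting forecast updates. This is an illustrative stand-in, not any vendor's forecasting engine; the function names and the `learning_rate` parameter are assumptions made for the example.

```python
def update_forecast(forecast, actual, learning_rate=0.3):
    """Move the forecast toward the observed value by a fraction of the error."""
    error = actual - forecast
    return forecast + learning_rate * error


def run_feedback_loop(initial_forecast, actuals, learning_rate=0.3):
    """Return the forecasts issued for each period and the next forecast.

    After each period the forecast is compared with what actually
    shipped or sold, and the model state is updated accordingly.
    """
    forecast = initial_forecast
    issued = []
    for actual in actuals:
        issued.append(forecast)
        forecast = update_forecast(forecast, actual, learning_rate)
    return issued, forecast


if __name__ == "__main__":
    issued, next_forecast = run_feedback_loop(100, [120, 120], learning_rate=0.5)
    print(issued, next_forecast)  # [100, 110.0] 115.0
```

Each pass closes half of the remaining gap between forecast and actual, which is the "observe accuracy, then update" cycle in its smallest form.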

Since ML was first applied to demand forecasting in the early 2000s, it has greatly increased the breadth and depth of forecasting. ML forecasting is no longer just monthly or quarterly; weekly and even daily forecasting is now possible. We have moved from product-level forecasts at a regional level to stock-keeping unit (SKU) forecasts at the store level. More recently, demand planning applications based on machine learning have improved forecasting by incorporating competitor pricing data, store traffic, and weather data.

We are no longer forecasting just demand. ML is also used to predict when trucks and factory machinery are likely to break down (predictive maintenance), to determine the optimal amount of inventory to hold and where to hold it (inventory optimization), and to forecast labor needs in the warehouse. Labor forecasting can estimate the number of employees required to perform the expected work down to the day, shift, job, and zone level. ML can also be used to generate labor standards for warehouse workers.

ML techniques like clustering, data similarity, and semantic tagging can automate master data management. Without accurate data, companies face the garbage in, garbage out problem.

In terms of supply planning, if key parameters (like supplier lead times) are no longer correct, then the planning becomes suboptimal. ML is being used to keep key parameters and policies up to date. It is also being used to predict whether an SKU believed to be in stock at a store is actually out of stock.

Supply chain risk solutions use ML and other forms of AI to predict which suppliers are included in a company’s multi-tier supply chain. This is becoming increasingly necessary as customs will hold up shipments at the port if it believes the shipment contains products made with slave labor from China, even if those components came from their supplier’s supplier’s supplier and represent a minuscule portion of the total cost of the product. Shippers’ end-to-end supply chain predictions are based on applying AI to OpenWeb searches, import/export records, data from sourcing platforms like ThomasNet, federal logistics records, and other data. These predictions accelerate a company’s ability to verify how its extended supply chain is constructed. Customs uses the same technology to determine which shipments should be denied entry.

Natural Language Processing is used to assign commodity classifications for imports and exports and to power real-time supply chain risk solutions.

The Harmonized System is a commodity classification coding taxonomy that forms the basis upon which all goods are identified for customs. It is used by customs authorities worldwide. Using the right product classification allows companies to pay the correct tariffs. Paying the right tariffs is necessary to avoid government fines and calculate the true landed cost of products. The problem is that there is an incredible gap between how products are described commercially and how they are expressed in the national customs tariff schedules. This has resulted in error rates as high as 30%. The combination of natural language processing and expert systems has been used to automate and significantly improve the classification process.

Real-time risk solutions also use natural language processing to read online publications and other data sources, make sense of what they read, contextualize the data into information, and report supply chain disruptions caused by weather, geopolitical events, and other hazards in near real-time. Every step in a company's value chain has search terms associated with it. The names of the suppliers, carriers, and logistics service providers become search terms. Those search terms are paired with terms signaling a problem, such as “bankruptcy,” “plant fire,” “port explosion,” “strike,” and many others. So, the term “Haiphong,” when combined in an article with the phrase “port fire,” would generate an alert.
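The pairing of entity terms with problem terms can be sketched as a simple co-occurrence check. This is a deliberately naive illustration: it uses lowercase substring matching rather than real natural language processing, and the watch list (including "acme logistics") is hypothetical.

```python
# Hypothetical watch list of suppliers, carriers, ports, and so on.
ENTITY_TERMS = {"haiphong", "acme logistics"}
# Terms that signal a problem, taken from the examples in the text.
RISK_TERMS = {"bankruptcy", "plant fire", "port fire", "port explosion", "strike"}


def find_alerts(article_text, entity_terms=ENTITY_TERMS, risk_terms=RISK_TERMS):
    """Return sorted (entity, risk) pairs that both appear in the article."""
    text = article_text.lower()
    entities = [e for e in sorted(entity_terms) if e in text]
    risks = [r for r in sorted(risk_terms) if r in text]
    return [(e, r) for e in entities for r in risks]


if __name__ == "__main__":
    print(find_alerts("A port fire broke out in Haiphong overnight."))
    # [('haiphong', 'port fire')]
```

A production system would need to handle synonyms, word boundaries, and context (a "strike" in a baseball article is not a labor action), which is where the language models earn their keep.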

Reinforcement Learning is a form of machine learning that lets AI models refine their decision-making process based on positive, neutral, and negative feedback. For example, if you want to train a vision system to recognize a dog’s image, you will start by using humans to look at tens of thousands of images of animals. The humans label the pictures as dog, not dog, or unclear. The computer is then presented with those images. The system would say, “this is a dog” or “this is not a dog,” and it learns whether its conclusion was correct. (Training against human-provided labels in this way is more precisely called supervised learning; in reinforcement learning proper, the feedback is a reward signal that the system earns from its own actions.)

Drones use this form of AI to improve inventory accuracy in a warehouse. Reinforcement learning allows the drone to recognize warehouse racks, pallets, and cases and get close enough to inventory to scan the barcodes. Similarly, reinforcement learning has been applied to security camera footage in the warehouse to ensure workers are following standard operating procedures.

Simultaneous localization and mapping (SLAM) allows a vehicle to construct and update a map of an unknown environment while simultaneously keeping track of the vehicle’s location within it. This technology allows mobile robots to move autonomously through a warehouse.

Drones and autonomous mobile robots using SLAM are in an early adoption stage for last-mile deliveries. Autonomous trucks will revolutionize logistics.

Autonomous trucks are not yet feasible, but we are probably just a couple of years out from being able to transport goods from a distribution center to a retail facility autonomously.

Causal AI is a technique in artificial intelligence that builds a causal model and can make inferences using causality rather than just correlation. Cause-and-effect relationships in an extended supply chain can be an intricate web that is difficult to unravel, but these relationships govern business operations. A causal model graph represents a network of interconnected entities and relationships, enabling the system to understand how various factors influence each other to create an optimized outcome. By leveraging causal knowledge and data graphs, Causal AI can navigate complex business scenarios, anticipate outcomes, and recommend optimal courses of action. Georgia-Pacific has demonstrated an application of Causal AI to improve touchless commerce dramatically. The solution was used to detect and correct both common and uncommon order errors or discrepancies in near real-time.

Generative AI (GenAI) is the new kid on the block. It can generate text, images, videos, or other data using generative models. Some warehouse management suppliers are exploring using GenAI to generate end-of-shift reports or the talking points used at standup meetings at the beginning of a shift.

Several supply chain application vendors are investing in GenAI to improve their user interfaces. The idea is that a user will make a request, and the system will take them directly to the answer they seek. GenAI can also help interpret complex charts and planning outputs. If a planning system indicates that a plan shows high costs or an inability to achieve targeted service levels, GenAI can help explain the upstream constraints driving that outcome.

Planning vendors are also interested in using GenAI to solve the black box problem. The black box problem occurs when planners don’t understand how the planning engine produced the plan it did. If they don’t understand it, they don’t trust it, and they then produce a much less optimal plan using Excel.

In the longer term, GenAI will help some planning vendors generate autonomous plans. When disruptions occur constantly, there is no time to keep creating and analyzing scenarios on how best to react. Autonomous planning can improve a company’s supply chain agility. However, it is worth noting that a few planning suppliers can already generate autonomous plans based on ML and attribute-based planning rather than having to rely on GenAI.

The post Supply Chain AI: 25 Current Use Cases (and a Handful of Future Ones) appeared first on Logistics Viewpoints.

Supply Chain AI Enters the Execution Era

The next phase of supply chain AI will be defined less by technical capability and more by measurable improvements in decision speed, service, inventory, resilience, and execution performance.

For the past several years, the supply chain AI conversation has focused primarily on capability. Could AI improve forecasting accuracy? Could it detect disruptions earlier? Could it summarize operational data, support planners and dispatchers, generate recommendations, coordinate agents, or retrieve institutional knowledge?

Those questions mattered because enterprises first needed to determine whether AI systems were technically viable inside complex supply chain environments. That phase is now ending. The market is moving into a far more demanding stage of adoption: execution.

Supply chain leaders are shifting from asking, “What can AI do?” to asking, “What operating outcomes can AI improve?” That distinction changes the conversation. Supply chains are not abstract information systems. They are physical operating networks governed by transportation capacity, inventory exposure, labor constraints, sourcing risk, customer commitments, service performance, and financial tradeoffs.

A transportation decision affects cost and delivery reliability. An inventory decision affects working capital and customer availability. A sourcing decision affects resilience and continuity. A fulfillment decision affects customer trust and operational stability. This is where supply chain AI becomes materially more difficult. Generating insight is no longer the primary challenge. Improving execution is.

The End of the Demonstration Phase

The first generation of enterprise AI deployments focused heavily on proving technical competence. Vendors demonstrated copilots that could summarize reports, answer operational questions, retrieve documents, generate recommendations, or automate portions of workflows. Visibility platforms introduced predictive alerts. Planning systems layered AI forecasting into existing environments. Transportation platforms added disruption prediction and recommendation engines.

Many of these advances were legitimate and important. But proving capability is not the same as improving operations.

An AI system may identify a disruption faster than a human planner. A visibility platform may detect inventory risk earlier. A generative AI assistant may recommend a transportation adjustment in seconds. None of those capabilities create meaningful value unless the organization can operationalize the response.

This is where many enterprise AI initiatives begin to stall. The model performs well, the pilot succeeds, and the demonstration generates enthusiasm. But the operating workflow itself does not materially change. Recommendations remain disconnected from execution systems. Escalations still move through email chains, spreadsheets, meetings, and fragmented approval structures. Decision ownership remains unclear across functions. Human teams continue coordinating sequentially instead of simultaneously.

The enterprise becomes more intelligent without becoming materially faster.

The Real Problem Is Decision Latency

Most large supply chains are not suffering from a lack of operational signals. Enterprises already possess dashboards, visibility layers, transportation data, planning systems, analytics platforms, and exception reporting environments capable of surfacing operational issues quickly. The larger issue is decision latency.

Decision latency is the gap between recognizing a changing condition and executing a coordinated operational response. That gap is becoming one of the defining weaknesses in modern supply chain operations.

Consider an inbound shipment delay on a high-volume SKU. The transportation team may see the delay first, but the inventory team may not immediately adjust allocation, the fulfillment team may continue promising orders against expected stock, and customer service may not receive updated commitment guidance until much later. By the time the organization responds, the issue has moved from a transportation exception to an inventory exposure and then to a customer service problem. That is decision latency in operational form.
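The shipment-delay example can be turned into a small worked calculation. The sketch below measures hours from the moment the delay is detected to the moment each team acts, and treats the slowest team as setting the latency of the coordinated response. That "slowest team" definition, the team names, and the timestamps are all assumptions made for illustration.

```python
from datetime import datetime

FMT = "%Y-%m-%d %H:%M"


def latency_by_team(detected_at, responded_at):
    """Hours between the signal and each team's response."""
    detected = datetime.strptime(detected_at, FMT)
    return {
        team: (datetime.strptime(ts, FMT) - detected).total_seconds() / 3600
        for team, ts in responded_at.items()
    }


def decision_latency(detected_at, responded_at):
    """Latency of the coordinated response: the slowest team sets the pace."""
    return max(latency_by_team(detected_at, responded_at).values())


if __name__ == "__main__":
    # Hypothetical timeline for one delayed inbound shipment.
    responses = {
        "transportation": "2026-01-05 08:30",
        "inventory": "2026-01-05 14:00",
        "fulfillment": "2026-01-05 17:00",
        "customer_service": "2026-01-06 10:00",
    }
    print(latency_by_team("2026-01-05 08:00", responses))
    print(decision_latency("2026-01-05 08:00", responses))  # 26.0
```

In this made-up timeline the signal was visible within half an hour, yet the organization as a whole took 26 hours to respond, which is the gap the text calls decision latency.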

A transportation disruption may be visible immediately, but inventory teams, logistics teams, procurement teams, and fulfillment operations still respond through fragmented escalation paths. A sourcing issue may be identified quickly, but operational coordination across the enterprise may take hours or days. A warehouse constraint may appear early, but fulfillment reprioritization and customer communication remain delayed.

Every handoff creates friction. Every silo slows response speed. Every disconnected workflow increases operational latency. In volatile supply chain environments, those delays become expensive quickly.

A delayed transportation response increases service risk. A delayed sourcing adjustment increases disruption exposure. A delayed inventory decision affects both working capital and customer availability. A delayed fulfillment response creates cascading operational consequences across the network.

This is why the market conversation is shifting away from demonstrations and toward execution architecture. The goal is no longer simply generating intelligence. The goal is compressing the time between signal and coordinated action.

Why Execution Becomes the Next Competitive Divide

The next phase of supply chain AI will separate the market more aggressively. Systems that generate insight will become common. Systems that operationalize intelligence across enterprise workflows will create disproportionate value.

That distinction is critical. A disruption alert matters only if it improves response quality. A forecast matters only if it improves inventory positioning or replenishment behavior. A recommendation matters only if it reaches the right workflow, owner, threshold, and execution system in time to change the outcome.

This is why supply chain AI increasingly depends on workflow integration, contextual reasoning, execution pathways, governance structures, and coordinated decision-making. The market is beginning to recognize that intelligence alone is insufficient. Operational coordination is becoming the new battleground.

The enterprises that outperform over the next decade will likely not be the organizations with the largest models or the most sophisticated demonstrations. They will be the organizations that reduce decision latency, improve coordination speed, and operationalize intelligence across planning, sourcing, transportation, fulfillment, and inventory management simultaneously.

That is the execution era now emerging across the supply chain industry. It represents a much larger shift than simply adding AI features to existing software platforms.

The post Supply Chain AI Enters the Execution Era appeared first on Logistics Viewpoints.


Nuclear Power Is Becoming Part of the AI Infrastructure Supply Chain

Published May 6, 2026

AI data centers are turning electricity into a strategic supply chain constraint. Nuclear power is moving back into the infrastructure conversation, not as an abstract energy policy issue, but as a potential source of firm, large-scale power for data center growth, industrial electrification, and grid resilience.

AI Is Forcing a New Power Conversation

The AI buildout is making electricity a limiting factor.

For years, data center expansion was discussed mostly in terms of land, fiber, servers, chips, cooling, and cloud capacity. Power mattered, but it was often treated as something that could be solved through grid interconnection, renewable power purchase agreements, or utility planning.

That assumption is now under pressure.

AI workloads require dense, continuous, high-reliability power. A hyperscale AI campus is not just another commercial load. It can resemble a large industrial facility in its demand profile. Meta’s El Paso AI data center provides a useful marker. Meta has increased its planned investment in the site to more than $10 billion and is targeting roughly 1 gigawatt of capacity ahead of the facility’s projected 2028 opening.

That is the practical backdrop for the renewed nuclear discussion. AI is not only a software race. It is becoming an energy infrastructure race.

Why Nuclear Is Back on the Table

Nuclear power has several characteristics that matter to AI infrastructure: high capacity, low operating emissions, long asset life, and round-the-clock output. Those attributes are increasingly valuable in a grid environment strained by data centers, industrial electrification, manufacturing reshoring, and broader electricity demand growth.

The recent interest is not limited to traditional large reactors. Advanced nuclear designs, including small modular reactors and microreactors, are being positioned as possible sources of firm power for industrial sites, remote locations, and dedicated data center loads.

The important point is not that nuclear will quickly solve the AI power problem. It will not. Licensing, fuel supply, component manufacturing, construction execution, financing, and public acceptance remain real constraints.

The important point is that nuclear is moving from the edges of the discussion into the infrastructure planning process.

DOE and NRC Approvals Are Now Central to the Story

The U.S. Department of Energy and the Nuclear Regulatory Commission are central to whether advanced nuclear moves from concept to deployment.

The DOE has created a Reactor Pilot Program intended to accelerate advanced reactor demonstrations. The program’s stated goal is to use DOE demonstration authority to support at least three advanced nuclear reactor concepts located outside the national laboratories in reaching criticality by July 4, 2026.

That does not mean commercial deployment has been solved. DOE demonstration authority can accelerate research, development, and prototype deployment. It is not the same as broad commercial operation under NRC licensing. But it does create a faster pathway for selected advanced reactor developers to move from concept to physical systems.

The NRC is also evolving its licensing framework. Its Part 53 rulemaking is designed to create a risk-informed, technology-inclusive pathway for advanced reactors. This is intended to make licensing more adaptable to new reactor designs while maintaining safety oversight.

That combination—DOE demonstration acceleration and NRC licensing reform—is what makes advanced nuclear more relevant to current infrastructure planning.

TerraPower Shows the New Approval Cycle in Practice

TerraPower’s Natrium project in Kemmerer, Wyoming, is the clearest current example of this shift.

The NRC approved the construction permit for TerraPower’s planned Natrium reactor in March 2026. This is a meaningful milestone. It represents movement from concept to physical buildout. But it is not the final step. TerraPower still requires a separate operating license before the reactor can enter commercial service.

The project also illustrates one of the deeper supply chain issues: fuel. TerraPower’s design is expected to use high-assay low-enriched uranium (HALEU), a category where domestic supply is still developing. That introduces another layer of dependency into the nuclear supply chain.

This is the broader point. Nuclear is not a single technology problem. It is a multi-layer supply chain problem involving licensing, fuel, components, construction, and grid integration.

The Nuclear Supply Chain Is Narrow and Specialized

The nuclear buildout cannot be scaled like software.

A reactor project requires nuclear-grade components, qualified suppliers, specialized fabrication, safety documentation, heavy construction, long-lead electrical systems, regulatory inspections, project controls, and a trained workforce.

Key bottlenecks include:

Nuclear-grade valves, pumps, sensors, and control systems

Reactor vessels and heavy fabricated components

HALEU fuel availability for certain advanced designs

Grid interconnection and transmission capacity

Nuclear-certified engineering and construction labor

Site permitting and local approvals

Long-duration financing

Safety case development and regulatory review

These constraints do not disappear because AI demand is growing.

Data Centers Are Changing Utility Planning

Utilities are already adjusting capital plans around data center growth.

American Electric Power raised its five-year capital investment plan to $78 billion, citing surging electricity demand from data centers. The company also reported that most of its expected incremental load through 2030 is tied to data center development.

That is a significant shift. Data centers are no longer marginal loads. They are becoming central drivers of utility investment.

Nuclear fits into this conversation because AI data centers require firm power, not just annual renewable offsets. The constraint is not only total energy. It is reliable energy at specific times.

What This Means for Supply Chain Leaders

The direct takeaway is not that every company needs a nuclear strategy.

The practical takeaway is that energy availability is becoming a core network design variable.

Manufacturing plants, cold chain facilities, semiconductor fabs, battery plants, automated warehouses, and data centers are all competing for reliable electricity, electrical equipment, construction labor, and grid capacity.

In some regions, the question will not be whether a site has good transportation access. It will be whether the site can secure sufficient power within a required timeframe.

Supply chain network design will increasingly need to include:

Power availability

Grid reliability

Interconnection timelines

Regional utility investment plans

Exposure to data center load growth

Backup generation strategy

Energy cost volatility
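One way to picture power as a network design variable is a simple feasibility screen applied before the usual cost and transportation analysis. The sketch below is only that: the `Site` fields, thresholds, and candidate sites are hypothetical, and it covers just three of the factors listed above.

```python
from dataclasses import dataclass


@dataclass
class Site:
    name: str
    available_mw: float           # power the utility can commit to the site
    interconnect_months: int      # expected time until the site is energized
    outage_hours_per_year: float  # rough proxy for grid reliability


def feasible_sites(sites, required_mw, deadline_months, max_outage_hours):
    """Keep only sites that can secure enough reliable power in time."""
    return [
        s.name
        for s in sites
        if s.available_mw >= required_mw
        and s.interconnect_months <= deadline_months
        and s.outage_hours_per_year <= max_outage_hours
    ]


if __name__ == "__main__":
    # Hypothetical candidate sites for a new automated warehouse.
    candidates = [
        Site("Site A", available_mw=12, interconnect_months=18, outage_hours_per_year=2.0),
        Site("Site B", available_mw=40, interconnect_months=48, outage_hours_per_year=1.0),
        Site("Site C", available_mw=25, interconnect_months=24, outage_hours_per_year=6.5),
        Site("Site D", available_mw=30, interconnect_months=20, outage_hours_per_year=1.5),
    ]
    print(feasible_sites(candidates, required_mw=20, deadline_months=24, max_outage_hours=4))
    # ['Site D']
```

Screening first and optimizing second reflects the point in the text: a site with excellent transportation access is not a candidate at all if it cannot be powered within the required timeframe.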

This is a structural shift in how supply chains are planned.

Analyst Takeaway

The nuclear conversation is no longer separate from the AI infrastructure conversation.

DOE demonstration authority, the Reactor Pilot Program, NRC Part 53, TerraPower’s construction permit, and ongoing work on HALEU fuel supply all point in the same direction: the U.S. is trying to reduce friction around advanced nuclear development.

This does not eliminate execution risk. Nuclear remains capital-intensive, regulated, and complex. But AI has changed the demand side of the equation. The need for large-scale, reliable power is now acute enough that nuclear is being reconsidered as part of the industrial infrastructure stack.

The supply chain of AI begins with power. Nuclear may become one of the ways that power is secured.

The post Nuclear Power Is Becoming Part of the AI Infrastructure Supply Chain appeared first on Logistics Viewpoints.


Modern Cost Engineering Evolution: Rewiring the Human Element for Supply Chain Resilience

Published May 5, 2026

In my previous blog outlining the adoption of cost engineering, I explored the dynamics behind the market's move away from sole reliance on traditional, backward-looking cost estimating and toward an approach that also incorporates modern “should-cost” methods. The reasons are many, of course, but it is clear that industrial organizations are keen to use AI-driven methods and other digital tools to build the much stronger layers of resilience and competitive advantage needed to compete in today’s hyperconnected economies.

Although digitally enabled results can sometimes be achieved in an operational vacuum, digital maturity cannot. The former can demonstrate benefits like efficiency, cost reduction, safety, etc., but it will rarely scale. The latter delivers market success via competitive excellence, providing a means for better organizing the business and orchestrating the ecosystem to anticipate and meet modern market signals.

Modernizing the supply chain is, at its core, a human-centered endeavor. The successful integration of cost engineering demands significant realignment and reskilling of people. As I began discussing almost a decade ago, the workforce transformation required to modernize is certainly the most difficult endeavor a business will face.

In this blog, I’ll dive into the human element of cost engineering. I’ll touch on how roles and attendant knowledge, skills, and abilities (KSAs) across the supply chain are evolving, discuss the cultural hurdles organizations must navigate, and outline how companies can transform traditional estimators into strategic consultants.

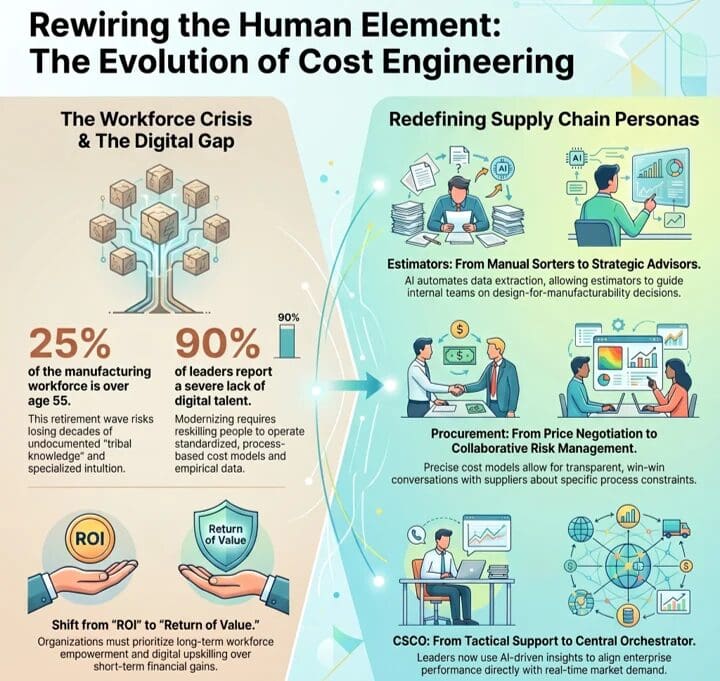

Tribal Knowledge: I Feel Like I’ve Been Here Before

Leadership must address the workforce crisis currently confronting industrial manufacturing. Look at any credible information resource and the numbers are basically the same. Whole industries are facing rapid workforce retirements, with approximately 25 percent of the total manufacturing workforce already over the age of 55. Within small and medium-sized enterprises, which form the bedrock of the industrial manufacturing supply base, particularly in North America, between 30 and 40 percent of business owners and skilled operational workers are nearing retirement age. Ouch.

We’ve known this has been underway for quite some time, and yet here we are. Historically, the reaction to tribal knowledge was wariness. I recall many conversations with leadership and frontline workers as technologies such as machine learning were initially deployed. Tribal knowledge, expertise, and the workforce that owned it were often treated as a nut to be cracked and the insides taken. Initially, the shell was perceived to be obstinately hard, with workers guarding their critical expertise, including core intellectual property (IP), as a means of fending off obsolescence. It didn’t lend itself to, shall we say, everyone pulling in the same direction.

Supply chain was no exception to this pattern. Cost estimating relied heavily on the undocumented tribal knowledge and personal experience of veteran employees. As these experts exit the workforce, they take decades of specialized intuition with them, leaving organizations highly vulnerable.

As a result, a new discipline has taken hold. In many instances tribal knowledge is likely to be unretrievable, or, where leaders show a lack of humility, the people who hold it are downsized too quickly. Modern cost engineering takes aim squarely at the reliance on human memory, replacing it with standardized, process-based cost models and empirical data. Yet an overwhelming 90 percent of supply chain leaders report a severe lack of the digital talent required to operate these new systems. Here we are, again, back to the ever-important human element at the center of a technology endeavor.

Redefining Supply Chain Personas

Rather than taking the same, lose-lose historical approach to cracking tribal knowledge, leading organizations are pivoting workers away from the manual, unsafe, and repetitive. What they are doing differently, though, is concertedly moving subject matter experts toward higher-level orchestration and critical oversight. It won’t pan out with every worker, certainly, but it will ensure that the expertise is retained and applied to creating more strategic value. On the surface, that presents much more opportunity for a win-win scenario. Here is how some specific roles are evolving:

Estimator

Historically, manufacturing estimators spent most of their time immersed in manual, backward-looking work. They pored over static 2D PDFs, visually interpreted complex 3D CAD models, and stitched together cost assumptions from disconnected spreadsheets. Much of their value came from patience and pattern recognition rather than insight, and the process was slow, reactive, and highly dependent on individual experience. For leading companies that are aggressively implementing cost engineering processes, that is radically changing.

In the world of cost engineering, this role is now that of a strategic advisor. Leveraging AI to automate much of the data extraction that once consumed their time, this role develops models to identify cost drivers based on real manufacturing constraints and material behavior. As a result, this role now focuses more on guiding internal teams on design-for-manufacturability decisions and outlining strategic trade-offs that can include a mix of potential metrics, such as cost, lead time, and, increasingly, carbon impact.

Procurement

Procurement has primarily been about transactional efficiency and negotiation. Success was generally determined by price, often with very limited visibility into how the price was constructed. Framed within cost engineering, procurement is driven by collaboration and risk management. Using precise cost models, sourcing conversations begin with a clear understanding of cost, informed by specifics on materials, labor, processes, and capacity constraints. If a supplier’s quote exceeds cost expectations, the conversation can focus on specific constraints, such as inefficiencies in process or materials. The objective is to provide transparency that allows for a win-win relationship in terms of performance, profitability, and reliability.
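A should-cost comparison of this kind can be sketched as a bottom-up build of material, labor, machine, and overhead cost set against the supplier's quote. The cost structure, parameter names, and every number below are hypothetical; real cost models are far more detailed.

```python
def should_cost(material_kg, price_per_kg, cycle_minutes,
                labor_rate_per_hour, machine_rate_per_hour, overhead_pct):
    """Bottom-up cost estimate for one part, broken out by cost driver."""
    hours = cycle_minutes / 60
    material = material_kg * price_per_kg
    labor = hours * labor_rate_per_hour
    machine = hours * machine_rate_per_hour
    direct = material + labor + machine
    overhead = direct * overhead_pct
    return {
        "material": material,
        "labor": labor,
        "machine": machine,
        "overhead": overhead,
        "total": direct + overhead,
    }


def quote_gap(quote, model):
    """Positive when the supplier's quote exceeds the should-cost total."""
    return quote - model["total"]


if __name__ == "__main__":
    # Hypothetical machined part: 2 kg of material and a 6-minute cycle.
    model = should_cost(
        material_kg=2.0, price_per_kg=4.0, cycle_minutes=6,
        labor_rate_per_hour=30.0, machine_rate_per_hour=60.0, overhead_pct=0.10,
    )
    print(model)
    print(round(quote_gap(22.0, model), 2))  # 3.3
```

Because the estimate is itemized, a gap between quote and model points the discussion at a specific driver, such as cycle time or material yield, rather than at price alone.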

Frontline

Despite the best of intentions to change the reactive nature of the role, frontline work has been dominated by manual execution and post-problem decision-making. Operators were tasked with keeping machines running, responding to breakdowns as they occurred, and relying heavily on tribal knowledge passed down informally and gained over time. Cost engineering shifts the dynamic for frontline workers. Upstream processes and systems provide precision that is communicated to these workers in terms of production expectations. Operators are tasked with supervising processes, identifying deviations, and capturing machine-level issues as they occur. As these workers become more connected and augmented via technology, faults and anomalies are logged digitally, with automated routing to maintenance or engineering as needed. With effective cost engineering, the frontline workforce ensures production aligns with cost and performance expectations.

Chief Supply Chain Officer (CSCO)

In the past, supply chain leadership was back-office oriented, using historical information to attempt to optimize logistics execution, inventory control, and cost. Their influence was significant but fairly tactical. That orientation shifts significantly with cost engineering as the CSCO becomes the central orchestrator of enterprise performance, based on the organization’s ability to align with market demand. Supply chain data increasingly impacts revenue and margin stability, based on market responsiveness. As a result, the CSCO sits at the intersection of strategy, technology, and execution, with an increased mandate that expands beyond moving goods to shaping how the organization makes decisions. In an organization using cost engineering, CSCOs are redesigning roles, workflows, and governance models, based on AI-driven insights that orchestrate decision-making across the enterprise and ecosystem.

Aversion to Change: You Can’t Take the Human Out of, Well, the Human

Implementing cost engineering seems like an obvious win. Despite the clear operational benefits, however, integrating it introduces complex modernization challenges, most of them rooted in aversion to change. It’s a pretty understandable problem: generations of workers have been trained on historically based methods and have spent entire careers honing the requisite expertise. They meet AI and automated decision-making with deep suspicion, grounded in the fear that technology will replace jobs and render their expertise irrelevant. They are not wrong. This challenge has been exacerbated by leadership deploying complex new software without context. In reaction to these poorly orchestrated, technology-centric changes, operators bypass the systems and revert to familiar methods and tools, neutralizing the investment and its anticipated benefits. Pilot purgatory, anyone?

To counter this within the organization, leadership must employ empathy, transparency of intent, continuous learning, and AI explainability that enables humans to trust machines and the logic behind their decisions. From an external perspective, organizations also need to understand that they are only as strong as their weakest supplier. Leading companies gain their status by subsidizing the digital and cybersecurity capabilities of their ecosystem. It becomes a case of a rising tide lifting all boats.

Return of Value

Deploying cost engineering cannot be about eliminating the human workforce through automation. It relies on a human-on-the-loop model, but it defers to technology to manage massive data complexity. The role of expert workers is to apply contextual judgment and engage in continual collaboration. The transition to this approach requires transparency and significant digital upskilling that will likely feel uncomfortable initially. Due to the step change required in this shift, organizations need to define and align with a return of value rather than shorter-term return on investment. By empowering the workforce and supply chain ecosystem to employ data-driven precision, the organization transitions from a guesswork culture to one of definable competitive differentiation.

In blog three of this series, I’ll explore the process component of the equation. I’ll focus on departmental silos, cross-functional teams, and supply chain orchestration. You can read the first blog in this four-part series here.

The post Modern Cost Engineering Evolution: Rewiring the Human Element for Supply Chain Resilience appeared first on Logistics Viewpoints.
